Anthropic was the “don’t be evil” of AI for developers. Now it’s squeezing them all

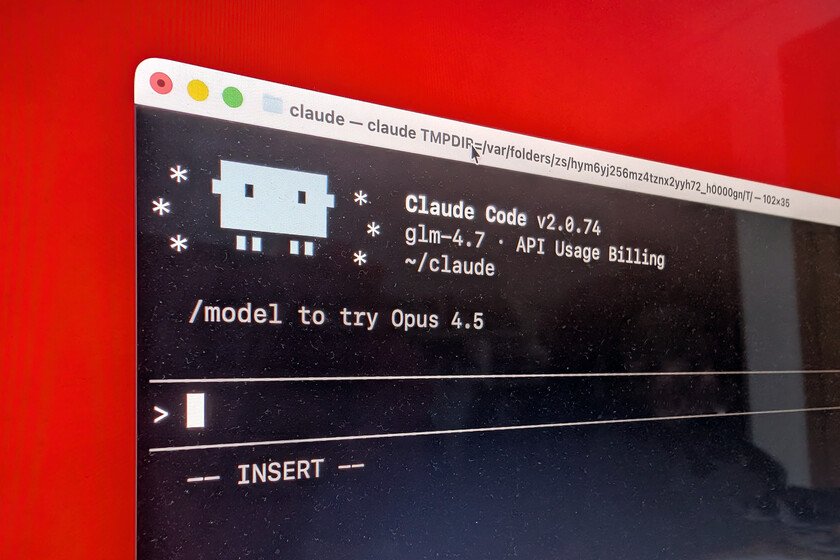

Claude Code and Claude Opus 4.6 ushered in a golden era for developers, who suddenly had a fantastic AI agent and model for their work. OpenAI was no longer the trendy company: Anthropic was. Users and developers fell in love with it, and it became the darling of AI. Months later, Anthropic is making changes that are drawing heavy criticism and that point to something we have seen repeatedly: platforms win you over and then, inevitably, squeeze you.

The trigger. On April 2, 2026, Stella Laurenzo, Senior Director in AMD’s AI group, published a post in Claude Code’s GitHub repository titled “Claude Code is useless for complex engineering tasks with February updates.” It included a meticulous analysis of almost 6,600 real Claude Code sessions, with nearly 235,000 tool calls and about 18,000 reasoning blocks across four different projects. For her, the conclusion was obvious: the performance of Claude Code and Claude Opus 4.6 had degraded.

The numbers. Laurenzo’s analysis compares two periods. In the good period, from January to mid-February, the model read 6.6 files for every file it edited. In the supposedly degraded period, from March onwards, that ratio had fallen to 2.0. Edits to files that Claude had not recently reviewed went from 6.2% to 33.7%: one in three code changes was being made “blindly.” The visible reasoning also shrank, from 2,200 characters to only 600 on average. And there is more: the costs of the process multiplied by 122 over the same period, although usage also went from 1-3 concurrent agents to 5-10, which complicates the interpretation of the data.

Anthropic tries to clarify what happened. Anthropic’s official response was published by Boris Cherny, who is responsible for Claude Code.
He confirmed two actual product changes. On February 9, Opus 4.6 switched to using so-called “adaptive reasoning” by default. On March 3, the default effort level moved from high to medium, sitting at level 85, which Anthropic describes as “the best balance of intelligence, latency, and cost for most users.”

Closed debate. Cherny also addressed the suspicion that Claude was now hiding “how it thought.” He explained that the change in the visible reasoning logs is not a real degradation: the header that had been detected was simply a user interface change that hid intermediate reasoning to reduce latency, without affecting model performance. Laurenzo herself had anticipated something like this and had tried to implement workarounds to avoid it, but her data confirmed the drop in performance. Cherny closed the debate as if the issue had been resolved, but it does not seem that it really is.

Computing capacity crisis. Thariq Shihipar, of the Claude Code team, revealed in March that Anthropic was adjusting its 5-hour session limits during peak hours. That is to say: if demand was high, your Claude tokens would probably run out faster. He noted that the measure would only really be noticed by 7% of users (the most intensive ones during those peak hours), and admitted: “I know this is frustrating. We will continue to invest in scaling efficiency.” This is at odds with a comment in the discussion on Laurenzo’s post, which explained that “we do not degrade our models to better serve demand, I have said this many times before.”

More degradations. Other discoveries and criticisms appeared, such as the finding that Claude Code’s prompt cache lifetime had also been drastically reduced (from one hour to five minutes), driving up quota consumption in long programming sessions.
Anthropic told VentureBeat that Team and Enterprise accounts are not affected by these session limits, but the pattern seems increasingly clear: computing is scarce and must be rationed… or at least that is what all these Anthropic measures seem to point to. What remains unclear is whether the quality of the model has actually been degraded, although there are Reddit “megathreads” that also point in that direction.

“Nerfing”? Nothing of the sort. When a company deliberately degrades its service, it is often called “nerfing” on social networks, and criticism along these lines was growing in Anthropic’s case. Numerous posts by users on X and in technology media have referred to Laurenzo’s study and accused Anthropic of deliberately degrading its models. Boris Cherny intervened in at least one case to say flatly “That’s false” and to explain that they had announced the changes and had in fact given users the option to disable them.

But rationing exists. The Wall Street Journal confirmed that this rationing of computing is indeed occurring among AI platforms because of high demand. David Hsu, founder and CEO of Retool, is a good example of the consequences. He told the newspaper that although he preferred Claude Opus 4.6 to power his AI agent, he recently had to switch to OpenAI’s model because “Anthropic keeps crashing all the time.”

Prices change (silently). The Information reported yesterday that Anthropic is changing the way it bills users of Enterprise plans. Instead of a $200-per-month subscription with a “flat rate” for using its AI models, it will charge a base rate of $20 per user per month and add each user’s consumption at the standard API price. Anthropic’s own updated documentation says so (“Use is not included in the per-seat rate”), and it is estimated that the change could double or even triple the cost of using Claude for heavy users.
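
To put the billing change in numbers, here is a minimal Python sketch of the arithmetic. It assumes the old $200 flat rate and the new $20 base rate both apply per user per month, and that usage is billed at list API prices with no discount. The function names are hypothetical and exist only for illustration; the only figures taken from the reporting are $200 and $20.

```python
# Illustrative comparison of the two billing schemes described in the article.
# Assumption: both the old flat rate and the new base rate are per user, per month.

FLAT_RATE_USD = 200.0  # old flat monthly subscription (figure from the article)
BASE_SEAT_USD = 20.0   # new base rate per user per month (figure from the article)


def new_monthly_cost(api_usage_usd: float) -> float:
    """Per-user cost under the new scheme: seat fee plus API usage at list price."""
    if api_usage_usd < 0:
        raise ValueError("api_usage_usd must be non-negative")
    return BASE_SEAT_USD + api_usage_usd


def break_even_usage() -> float:
    """Monthly API usage at which the new scheme costs the same as the old flat rate."""
    return FLAT_RATE_USD - BASE_SEAT_USD


def usage_for_multiple(multiple: float) -> float:
    """Monthly API usage that makes the new bill `multiple` times the old flat rate."""
    return multiple * FLAT_RATE_USD - BASE_SEAT_USD


if __name__ == "__main__":
    print("break-even usage:", break_even_usage())
    for m in (2, 3):
        print(f"usage for {m}x the old bill:", usage_for_multiple(m))
```

Under these assumptions, a user who consumes less than $180 of API usage per month pays less than before, while a bill that doubles or triples the old one corresponds to $380 or $580 of monthly usage respectively.
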
The discounts of 10 to 15% on the API that were previously included, and that let companies scale their token consumption more affordably, also disappear. Prices per million tokens have not changed, but the model shifts from a “flat rate” (with usage quotas) to pay-per-use, which is much more expensive for heavy users.

It’s not just Anthropic. …