This European alternative to Google Drive has up to 5TB of storage at a 90% discount

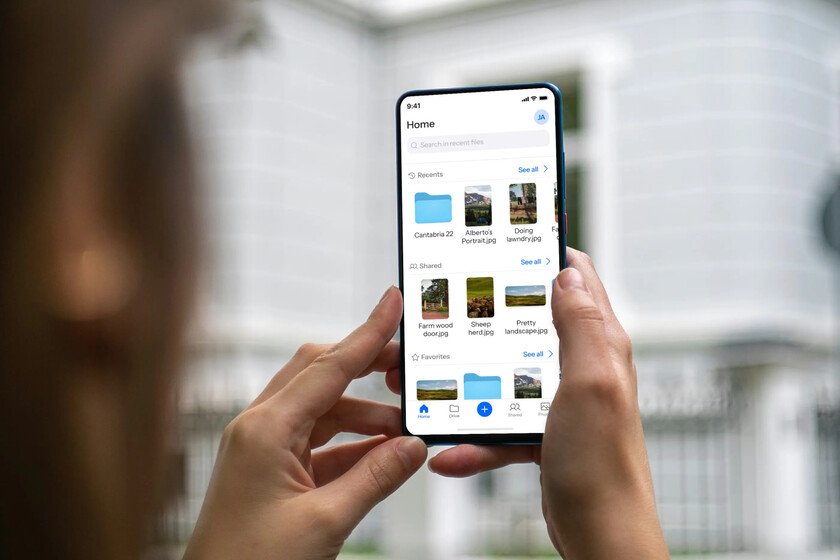

We live in a time when juggling several subscriptions at once has become completely normal. Streaming platforms, Spotify or even a VPN may be among the ones you already pay for. What's more, if you like football, you may well have subscribed to DAZN to watch the World Cup, which starts in a few days. That is why adding one more subscription to the pile is a hard sell.

If you are looking for cloud storage, there are options that you pay for once, with no monthly fees or price increases to worry about. One of the most interesting right now is Internxt, which is on sale: with the code 'XATAKA' you get a 90% discount. That means you can have 1 TB of storage for life for 190 euros.

Prices may vary. We earn a commission from these links.

Lifetime cloud storage that even includes a VPN

This company of Spanish origin is a European alternative to US clouds such as Google Drive or iCloud. The 1 TB plan, called Essential, is the most basic one Internxt offers (we cover the other two further down). You pay only once and get the service for life, with no monthly payments.

Beyond the price and its European origin, Internxt has several points in its favor. The first is security: it offers end-to-end encryption as well as zero-knowledge encryption. This means that neither the company nor any third party can access or intercept your data, so it provides a high level of privacy.

Internxt is also open source and transparent. This matters for a cloud storage service because it means anyone can audit and review the code, which helps guard against back doors through which our data could end up in the hands of third parties. In addition, Internxt's interface and apps are quite simple and intuitive to use.
It is useful not only for storing photos or videos to free up space on your phone, but also for backing up your devices directly. In addition, every Internxt plan comes with a VPN and antivirus, so if you already pay for either of these services separately, you can save money.

The Internxt Essential plan includes, as mentioned above, 1 TB of cloud storage. If you want or need more, one of the company's other plans may suit you better. We detail them below, and remember that the code 'XATAKA' also gets you a 90% discount on them:

- Premium Plan: 3 TB of storage, VPN, antivirus, cleaner and other additional features for 290 euros for life.
- Ultimate Plan: 5 TB of storage, VPN, antivirus, cleaner, Meet and other additional features for 390 euros for life.

Some of the links in this article are affiliate links and may provide a benefit to Xataka. Offers may vary if a product becomes unavailable.

Images | Internxt

In Xataka | Google Drive alternatives: the best cloud storage services for your files

In Xataka | 61 European alternatives to Google, X, Gmail, Chrome, Maps, DropBox, Google Drive, WhatsApp and other popular services