The CEO of logistics gives way to the CEO of engineering

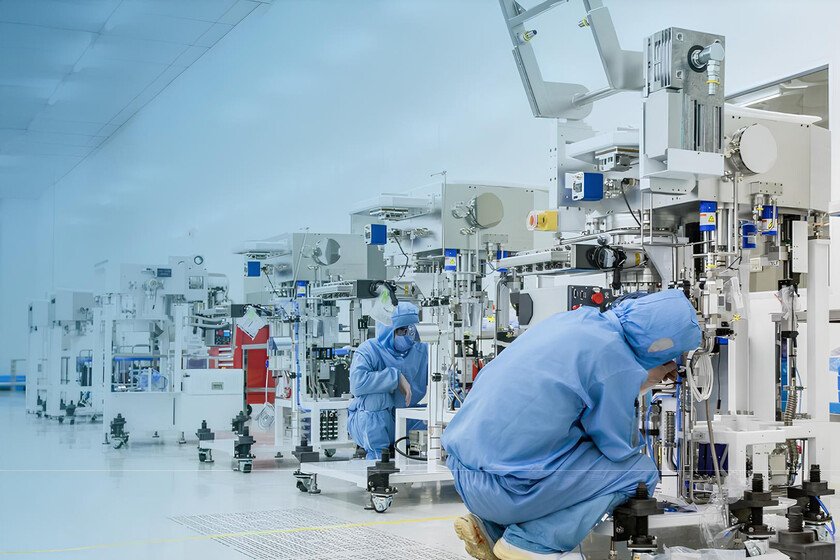

Tim Cook has announced that he will step down as Apple CEO on September 1. He will be replaced by John Ternus, the company's senior vice president of hardware engineering. This long-awaited generational handover represents an important change in the DNA of the leadership of one of the most valuable companies in the world.

Why it matters. Cook was a genius of logistics, supply chain and business diplomacy. Ternus is very different: he is a mechanical engineer who has spent 25 years (half of his life) designing, testing and manufacturing Apple products. Apple goes from a leader who optimized how products are made and sold to one who decides how they are conceived and built.

The sign that anticipated everything. In January 2026 we reported that Cook had put Ternus in charge of Apple's design teams. The move was not officially announced, but Mark Gurman made it public in Bloomberg. It was the definitive signal: Cook's succession had been on the agenda for some time, and Ternus was the clear favorite.

Until then, design at Apple had functioned as an independent fiefdom, a direct inheritance from the Jony Ive era. Placing it under hardware engineering means that in Ternus's Apple, technical execution takes precedence over aesthetics. It's not that design stops mattering. It is simply no longer king, as it once was.

What Ternus has achieved and what he hasn't. His mark is on practically all of Apple's current hardware catalog:

- Apple Silicon on the Mac. The transition from Intel to Apple's own chips has probably been the company's most important technical decision of the last decade. In chip architecture, the main credit goes to Johny Srouji, who takes over from Ternus in hardware. In product execution (a fanless MacBook Air, sustained performance, record battery life, coherent integration with the SoC…), the credit goes to Ternus. We are possibly in the best Mac cycle in history.
- iPhone. Not everything in the iPhone is his, but the build quality, thermal management, choice of materials and internal integration are.
- iPad, AirPods, Apple Watch. He has taken part in the launch of several new generations and product lines.

What is not his fault is the stagnation of the iPad as a platform. That is a software and strategy problem, not a hardware one (the hardware is excellent), so those questions are for Craig Federighi and Tim Cook.

Between the lines. The best comparison here is not so much between Cook and Ternus as between Cook and… Steve Ballmer. Ballmer was a sales and operations CEO who multiplied Microsoft's revenue but missed the mobile revolution. Cook has been an operations and services CEO who has multiplied Apple's revenue, but whose tenure has not produced a game-changing new product on the level of the iPhone or iPod.

The Apple Watch took several generations to find its place. AirPods are still a resounding success almost ten years on, but conceptually they are not a new category. The Vision Pro is in limbo, and it remains to be seen how it emerges. Ternus arrives with a profile closer to the product. And that, in a product company, matters.

In addition, Apple has appointed Johny Srouji as Chief Hardware Officer, a new position that unifies hardware engineering and hardware technologies under his command. It matters for two reasons:

- Srouji was about to leave. Months ago it emerged that he had told Cook he was seriously considering leaving the company. Apple has retained him with more power and responsibility.
- It confirms that Apple Silicon is the central strategic bet. Ternus's first big decision as incoming CEO has been to protect his most valuable piece.

Yes, but. Ternus inherits a company with pending tasks that cannot be resolved with good hardware alone:

- AI. Apple Intelligence has arrived notably late (in more ways than one) compared with Google, Microsoft and OpenAI. AI is fundamentally software, models and services. Ternus comes from hardware.
- Regulation. The App Store is under more scrutiny than ever, and not only in the EU. Commissions, alternative payments and third-party stores are going to define a good part of the coming years.
- Tariffs and supply chain. The manufacturing structure in China that Cook built and optimized over many years is now threatened by the Trump administration's trade policy.
- The need to surprise. It has been a while since Apple launched anything that evokes the "wow effect" so common in the Jobs era.

What's next. Cook, as has happened several times with the old guard, is not leaving completely. He will be executive chairman, focused on the relationship with governments and regulators: the same diplomacy he has managed with reasonable success for 15 years remains in his hands. Apple does not lose Cook. It moves him to where he can provide the most value now.

Ternus is 51 years old. Cook was 50 when he took office. If Apple maintains its pattern of long tenures, Ternus may be at the helm for a decade or more.

Apple's bet is that its edge over Google, Microsoft and OpenAI will not lie in the most powerful AI model, but in how it integrates AI into hardware that people touch, carry and use every day. That's where Ternus has an advantage that no one else has. If that bet is correct, Apple has chosen the perfect CEO. If the AI battle is won in the cloud and in models, it may have a problem.

Featured image | Xataka