AI chips have always aimed to become ever more powerful. TSMC has just pointed to the real limit: efficiency

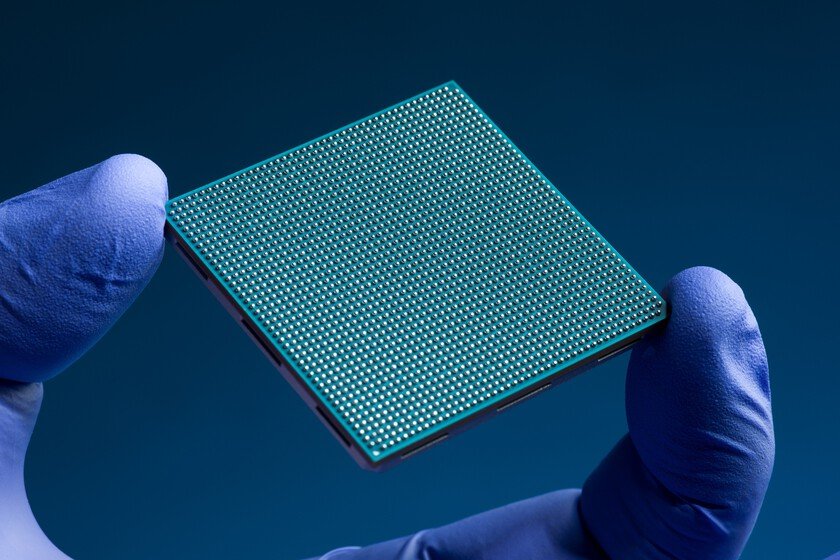

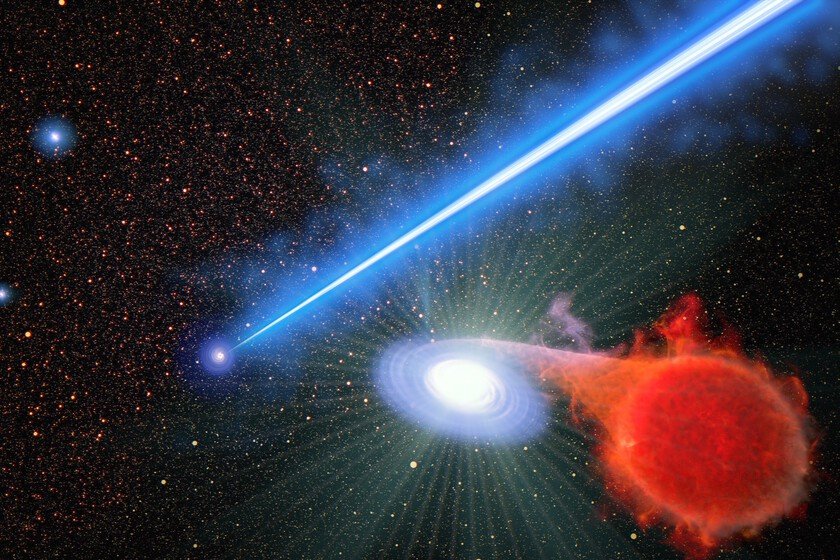

More performance? It is the first thing we ask of a new chip, almost without thinking. We have done it for years with the processors in our devices, and we do it now with the chips that underpin much of the AI rollout. More computing power, more speed, more room to do things that once seemed out of reach. But this logic is starting to run into a very specific limit: energy. The idea now gaining ground is less flashy but increasingly hard to ignore: progress will be measured not only by how much a chip computes, but also by how much energy it needs to do so.

The clearest clue comes from TSMC. This is the largest contract chip manufacturer in the world, a company that does not sell processors under its own brand but produces semiconductors designed by other players in the industry. According to Reuters, Kevin Zhang, senior vice president of business development, explained at a conference in Amsterdam that the company’s customers are paying more and more attention to performance improvements that do not increase power consumption. The pressure comes from very different profiles, from smartphone manufacturers to AI data center operators, all sharing a concern that has been growing recently: the cost of electricity and the availability of energy.

The key is in the manufacturing. TSMC has not simply described a change in priorities. It has also put that change on its technology roadmap with A14, a future manufacturing process planned for around 2028. The firm expects this process to offer more than a 20% improvement in performance and, at the same time, to cut power consumption by up to 30% compared with N2, the process the company uses as the baseline for that comparison. The important point is that we are not talking about a specific processor, but about the method by which later chips can be manufactured.

Not everything is about miniaturizing. For decades, shrinking transistors has been one of the main ways to gain performance and efficiency in chips. That logic is not going away: transistor density remains on TSMC’s roadmap. What Zhang points out is that, in the face of AI’s energy pressure, other solutions such as advanced packaging, chip stacking, and photonics are also gaining weight. In parallel, as we pointed out a few weeks ago, TSMC has decided not to use High-NA EUV, the lithography associated with ASML’s most advanced and ambitious equipment, in its A13 and A12 processes planned for 2029.

The battle is also in the data. Huawei enters this conversation with the Tau Scaling Law, a proposal that seeks to improve performance by speeding up the movement of data within chips. The idea shifts part of the focus from the transistor to architecture and integration, two areas that gain weight when manufacturing smaller components is no longer enough. Along the same lines comes LogicFolding, which Huawei presents as a possible step beyond traditional 3D stacking, but which will depend on new design tools for folded architectures and on better heat dissipation for devices ranging from smartphones to AI data centers.

Where are we going? TSMC does not speak for the entire industry, but its position gives the message weight. The firm suggests that, at least in its roadmap and in conversations with its clients, energy efficiency is gaining a prominence that used to be hidden behind performance. And it’s not a concern limited to AI data centers. Huawei, for its part, shows that the problem is also being tackled through architecture and integration, not just through the manufacturing process. The common thread is not a settled conclusion but an increasingly visible tension: chips will have to keep getting more capable, but each leap will be harder to justify if it increases power consumption, heat, or cost.
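To put the A14 figures quoted earlier in perspective, here is a minimal sketch of what they would mean for performance per watt relative to N2. It takes the 20% and 30% values at face value, although the article gives them as "more than" and "up to". Whether both gains can be obtained at the same time on a real chip is an assumption, so the sketch also shows the two more conservative readings in which only one of them applies. The function name and scenario labels are illustrative, not anything published by TSMC.

```python
def perf_per_watt_gain(perf_gain: float, power_reduction: float) -> float:
    """Return the performance-per-watt multiplier relative to the baseline.

    perf_gain:       fractional performance increase (0.20 means +20%)
    power_reduction: fractional power decrease (0.30 means -30%)
    """
    if not 0.0 <= power_reduction < 1.0:
        raise ValueError("power_reduction must be in [0, 1)")
    return (1.0 + perf_gain) / (1.0 - power_reduction)


# Figures quoted in the article for A14 versus N2, taken at face value.
PERF_GAIN = 0.20
POWER_REDUCTION = 0.30

# Assumption: we do not know whether both gains apply simultaneously,
# so each reading is computed separately.
scenarios = {
    "both gains at once": perf_per_watt_gain(PERF_GAIN, POWER_REDUCTION),
    "speed gain only, same power": perf_per_watt_gain(PERF_GAIN, 0.0),
    "power cut only, same speed": perf_per_watt_gain(0.0, POWER_REDUCTION),
}

for name, ratio in scenarios.items():
    print(f"{name}: {ratio:.2f}x performance per watt vs N2")
```

Under these assumptions, the efficiency gain ranges from roughly 1.2x to roughly 1.7x depending on the reading, which is why the power figure matters as much as the performance figure.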
Images | Xataka with Nano Banana