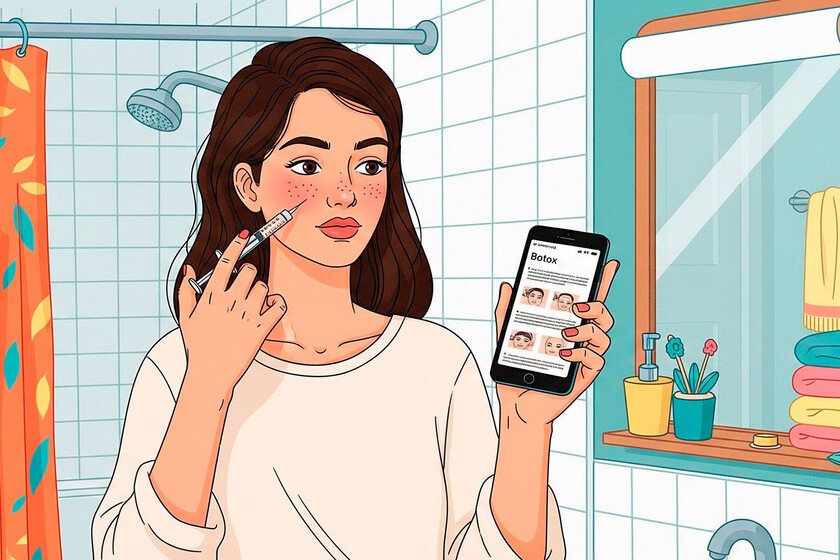

“Mirror, mirror, who is the most beautiful in the kingdom?” That famous question, once addressed to an enchanted mirror, is now put to ChatGPT. The most curious thing about this popular prompt is how willing many people are to follow the advice it offers.

Honesty. A 32-year-old Australian woman, Ania Rucinski, interviewed by The Washington Post, said she asked ChatGPT how she could be more “attractive” for her partner, because she felt the people around her were not being sincere. The answer was direct and blunt: curtain bangs. This is nothing new, though, and it is gaining popularity on social networks.

A silent trend. One of the videos gaining traction on TikTok was published by Marina (@marinagudov) and has reached more than half a million views. In it she explains how she used the chatbot to get a complete analysis of her style and aesthetics from a makeup-free selfie. The AI indicated her ideal color palette, evaluated her hair tone, advised makeup changes with specific brands and shades, and even designed an eyeshadow look adapted to the shape of her eyes. A journalist from Indy100 did the same, deciding to follow the trend after watching multiple videos on social networks, and got the same kind of result as the influencer. The most surprising part, according to the journalist's account, was that the bot also offered a generated image showing the result.

Behind the virality. Why prefer the opinion of a bot to that of a human being? According to some users, AI is more honest without being cruel. Kayla Drew, also interviewed by The Washington Post, said she turns to ChatGPT for everything, even beauty tips, because its direct way of speaking does not hurt as much as criticism from someone close. Speaking to the same outlet, beauty critic Jessica DeFino offered a deeper explanation: “Humans have emotional ties that affect our perceptions. A bot, on the other hand, is not influenced by love, charisma or personality.
It only analyzes data and gives its verdict. For those who seek clear answers about their appearance, that feels like an advantage.”

There is something else. AI can offer a more realistic preview of the future; it is like diving into a pool you already know is full. The Indy100 journalist found in ChatGPT a way of experimenting without real consequences. The ability to try, adjust and visualize before making a decision has become one of the main attractions of this trend.

What do experts think? Some users interviewed by The Washington Post trusted ChatGPT because it offers a “neutral” opinion, but specialists have warned that this is only an illusion. In the same outlet, Emily Pfeiffer, an analyst at Forrester, stressed that “AI simply reflects what it sees on the Internet, and much of that has been designed to make people feel bad about themselves and buy more products.” In other words, its answers may be shaped by a market logic that favors consumption, not necessarily the user's well-being. Alex Hanna (Distributed AI Research Institute) and Emily Bender (computational linguist) go further, warning that training these models on content such as forums that rate attractiveness (for example r/RateMe or Hot or Not) means that we are “automating the male gaze.” The chatbot could therefore perpetuate sexist beauty standards instead of offering a fair or empathetic evaluation. Along the same lines, Marzyeh Ghassemi, an MIT professor working on computational medicine, has described to the Argentine outlet Redacción her concern about how AI can offer harmful advice on sensitive issues. In one documented case, an AI recommended dangerous behaviors to people with eating disorders. This underscores that, without ethical oversight, these tools can cause harm even when they are not intended to.

The danger of digital culture. Beauty has always been changeable, cultural and deeply subjective.
However, artificial intelligence tends to reduce it to repeated and predictable patterns: flawless skin, thin bodies, Eurocentric features. These are dominant standards that are born not from the individual but from the market. As the analyst Emily Pfeiffer has pointed out, much of the content that trains these models was designed to make us feel bad about ourselves and push us toward consumption. The AI, then, does not only offer advice: it recommends products, suggests procedures and encourages spending. We turn the desire to feel better into a mathematical operation geared toward optimization. But optimization for what? To fit into an idealized image that others, or an algorithm, have built?

A study has shown that systems such as ChatGPT reproduce systemic gender and race biases even in technical tasks such as personnel selection. If that happens in “neutral” contexts, what will happen when AI evaluates something as culturally charged as physical attractiveness? Many of these models draw on online forums and communities where appearance is rated, or on the darker spaces of the PERICA and incel environments. These ecosystems not only normalize symbolic violence against bodies that do not fit their canon, but now also feed the datasets with which we train artificial intelligence. What looks like an “objective” tool is therefore, in fact, a distorting mirror: it reflects back not only idealized images but also the prejudices of an entire digital culture deeply marked by male desire, extreme individualism and the logic of competition.

Image | Ecole Polytechnique