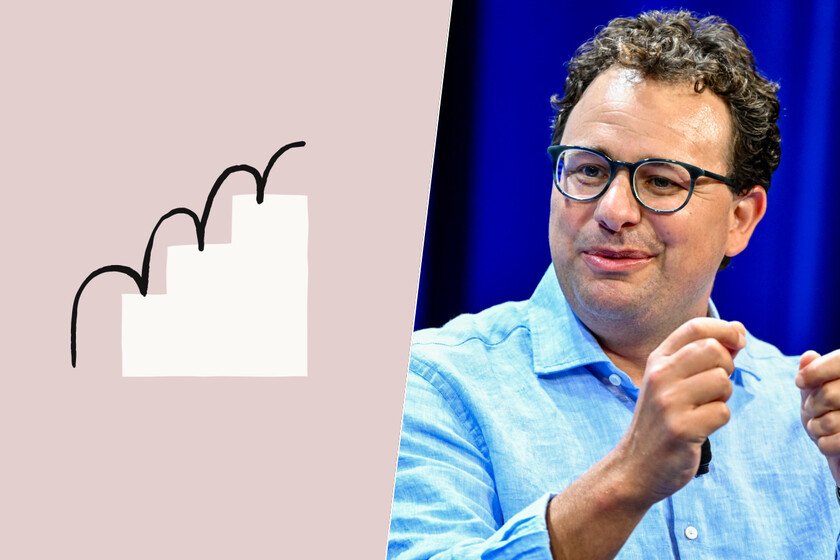

Anthropic has moved ahead of OpenAI in the race to go public. This is very bad news for Sam Altman

Anthropic confirmed on Monday that it has formally filed for its long-awaited IPO. The offering may become the largest of its kind in history, and it recalls another singular moment. In August 1995, Netscape went public and marked the beginning of the Internet era and dotcom fever. That turned out to be a bubble, but a “good” one. The question is whether what happened then will happen again.

The original Netscape moment. When Netscape went public, the company had been in business for only 16 months and had not made a profit in all that time. It didn’t matter. The shares began trading on August 9, 1995 at an initial price of $28. On the first day of trading the price skyrocketed, reaching a high of $75 before closing at $58.25. In December of that year it would reach its peak, $171 per share. The rest, as they say, is history.

Netscape’s IPO sent the Nasdaq technology index soaring… until the dot-com bubble burst in 2000. Source: Reuters.

Anthropic could break all records. Anthropic’s spectacular growth in recent months has made the company the darling of the AI sector. Its most recent investment round raised its valuation to $965 billion, an incredible figure considering that the company is barely five years old. It has also overtaken OpenAI, whose valuation is currently around $850 billion. Both were preparing to go public this year, but Anthropic has pulled ahead again, something that at first glance looks like another victory over its main rival.

What Netscape taught us. Netscape’s explosion in 1995 gave rise to fierce competition: companies promising the moon kept appearing, and the dotcom bubble grew. Too many companies managed to attract investment without a clear business plan, and the situation ended with the bubble bursting. A few companies survived and went on to become today’s technology giants.

Good bubbles. That bubble could be described as “good” because, although many companies failed, those that remained and those created later ended up leading the revolution called the internet. Many believe an AI bubble exists, and that it resembles the dotcom bubble in one respect: many companies could disappear if it bursts, but the final result, they say, will be positive for the evolution of our planet.

But Anthropic is very different from Netscape. Although the two IPOs have certain parallels, the situations of the two companies are very different. Netscape struggled to monetize its software and ended up in the hands of AOL in 1999, as its era was coming to a close. Anthropic has shown that its focus on business customers works, and this past quarter it surprised by posting a profit (with fine print) when everyone expected losses.

And still, total uncertainty. Anthropic’s projections, like those of OpenAI, are spectacular on paper, but these are companies that in recent years have not stopped burning money to build the most powerful models on the market. Every technology company has been swept up in the AI fever, but today the only ones making (a lot of) money are those that supply components for AI infrastructure.

Milestone. The bet is that this infrastructure will be necessary because we will all use AI models on a massive scale, but it is not at all clear that this expectation will be met. It may not be, but Anthropic’s IPO will certainly mark a milestone in the dizzying growth of this segment.

And victory for Amodei. This year we will likely see three historic IPOs. SpaceX looks set to be the first to break records, with both Anthropic and OpenAI following in its footsteps. That the company led by Dario Amodei has formally confirmed it is preparing to go public is a symbolic victory over its great rival, Sam Altman, who is also planning OpenAI’s IPO.
In recent months Anthropic has managed to turn the tables, going from pursuer to leader in a race that is certainly not over yet.

Image | Wikimedia