What Gemini Spark is, what you can do with it, how it works, and who can use it

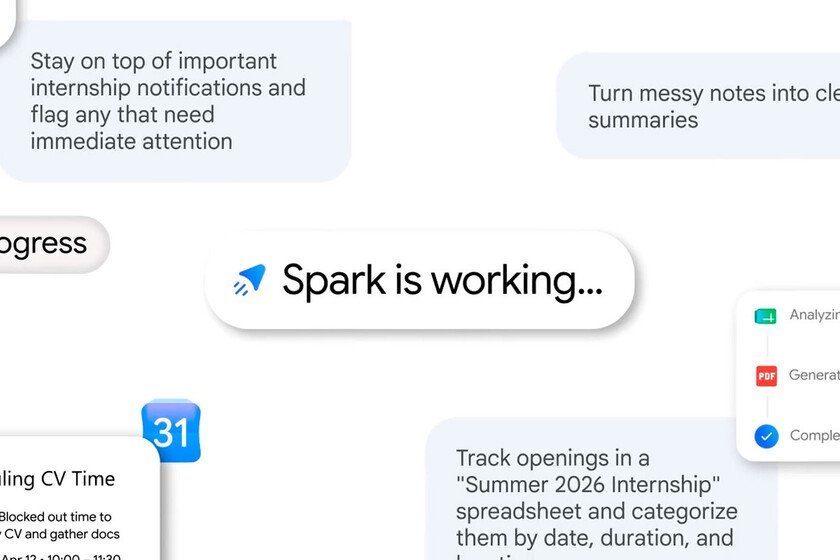

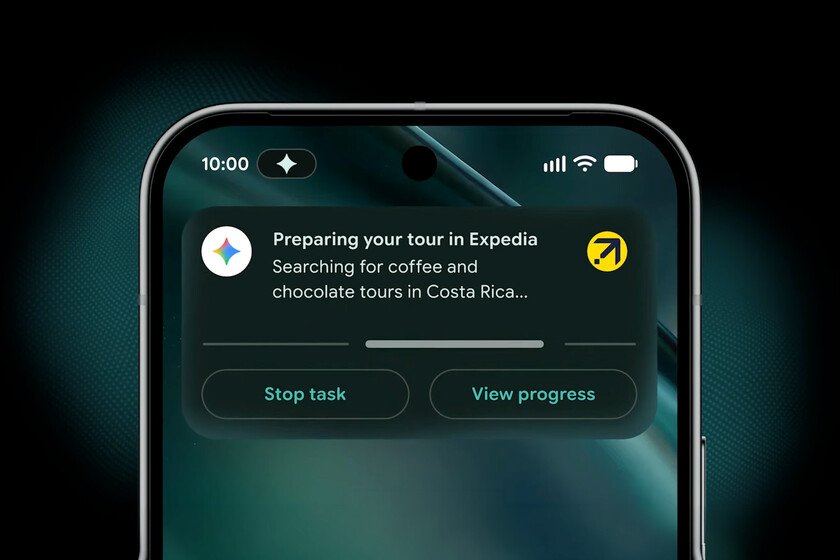

We are going to explain what Gemini Spark is and how it works. It is the new artificial intelligence feature integrated into the Gemini application. It is the company's response to OpenClaw and other AI agents, but aimed at helping us manage our digital lives. The idea is not so much to do things for us on the computer as to carry out online tasks autonomously, even when our phone or computer is turned off.

We will first explain the concept so that you understand it well, and then we will tell you how it works.

What is Gemini Spark

Gemini Spark is an artificial intelligence agent created by Google and integrated into the Gemini application. AI agents are artificial intelligence systems capable of carrying out actions autonomously. You ask them to do a task, and they carry out all the steps, interacting with third-party services without needing your supervision. In short, it is like a secretary who does tasks for you.

Spark can reason based on what you ask of it and on the information it obtains from your connected applications. It then takes actions on your behalf by interacting with those services.

With Gemini Spark you can assign a task and it will start working on it autonomously, even with your phone and computer turned off. Since security is always a concern with agents, Google says that Spark is designed to consult you before taking important actions. In other words, you will have the final say on these things.

Once you ask it to do something, Spark can work in the background 24 hours a day, seven days a week. It runs on the Gemini 3.5 model, uses the Antigravity harness, and integrates with Google Workspace tools such as Gmail, Slides, Docs, and the rest of the company's office suite.

The difference from regular Gemini is the following. AI chatbots require you to have them open in order to do anything.
You ask them to do something, they respond and do it, and that is it. The session ends there. Spark, by contrast, maintains a persistent presence in your apps and digital environment. When you give it an order, it can work through several steps and keep going in the background while you do other things, or while you sleep.

How it works and what you can do with Gemini Spark

Gemini Spark does not live on your device, but on dedicated Google virtual machines. This is what allows it to keep running in the background even if you close your laptop or lock your phone. It connects to Gmail, Docs, Slides, and other Workspace tools through structured API integrations, which makes it more predictable than agents that navigate the desktop pixel by pixel.

The agent can connect natively with Gmail, Calendar, Drive, Docs, Sheets, Slides, YouTube and Google Maps. It is important to know that these connections are disabled by default, and you are the one who decides which of them to activate for Spark.

Spark can use a remote browser to go beyond the Google ecosystem. This way, it can open a website and interact with it to perform tasks such as adding products to a shopping cart on your behalf.

This tool will be able to do complex jobs for you. You can set recurring tasks to run as often as you decide, teach it new skills, and let it execute multi-step jobs on your behalf.

It can also organize your Google Drive files, even listing them in a spreadsheet with tags and notes. It can automatically extract data from emails, create folders in your Drive, and so on.

You can also teach it personalized, repeatable behaviors called “Skills”. For example, it can read the last 50 emails you sent, generate a style guide based on the way you write, and apply it whenever you ask it to write an email.

Another thing you can do is set up schedules so that tasks run at set times or when certain conditions are met.
For example, you can ask it to scan your inbox every Monday at nine in the morning and summarize your emails, propose a list of priority tasks, or block out time slots in your work calendar. You will also be able to create written documents or presentations from a prompt in tools such as Docs or Slides.

It can review events, respond to invitations or schedule meetings in your calendar, convert email threads into a travel plan, record expenses in a spreadsheet, analyze past bills to anticipate future household payments, add recurring reminders, browse websites and compare options, help you complete reservations, and much more.

The most important thing is that it does all of this under your supervision. It is as if Spark were an employee who sends you updates on the steps it deems critical and requires your explicit approval to perform high-risk actions, such as sending emails.

Who can use Gemini Spark

Gemini Spark is part of the Google AI Ultra subscription. This means that if you want to use it you will have to pay for the most expensive subscription, which in Spain costs a minimum of 100 euros per month.

Additionally, Spark can only be used in the United States at the moment, because it is still in beta. There is no confirmation yet of when it will reach other languages and countries, although when it does arrive it will be available on the Gemini website and in the Android and iOS apps. Google also plans to integrate it directly into Chrome in the future.