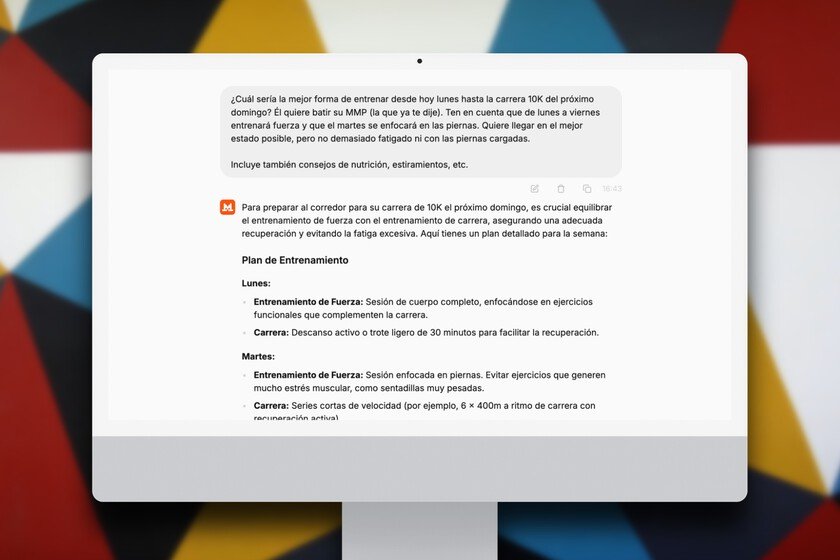

Mistral recently launched the mobile app and the new web version of Le Chat, its AI assistant, which offers a European take (with a French accent) on chatbots that are already part of everyday life. The launch also came with a major update to its web platform. As a regular user of ChatGPT and Claude, I wanted to test it in several scenarios and compare it with its rivals. The first impression is striking: it is devilishly fast, much faster than any model we have seen so far. Of course, there is more to it than speed.

Speed changes the rules

The most striking thing is still how fast it responds. It reaches 1,000 words per second thanks to its integration with Cerebras processors. In practice, this means that even long responses appear virtually instantly. Not that GPT-4o or Claude 3.5 Sonnet are too slow (it is a first-world problem), but I think we would all rather wait one second than fifteen.

In my tests comparing Le Chat with ChatGPT and Claude, I noticed that difference. For example, when I asked them to write a long, thorough analysis of United States tariff policy, Le Chat did it in a couple of seconds, with well-structured text and even citations. Its rivals offered responses of fairly similar quality, but took longer. And if we want to split hairs, Claude came out ahead of ChatGPT: its answers are usually better in pure writing (leaving code aside, for example), and its style is more refined, natural and professional all at once. In fact, that is where Le Chat loses to Claude: for the most convincing style, closest to that of a competent human, Anthropic's chatbot wins. ChatGPT keeps a good overall balance, but here it stands out in neither speed nor style. One more thing worth mentioning: Le Chat's answers are backed by queries to reputable media and agencies such as AFP, a fellow French company with which Mistral has a collaboration agreement.
In my translation and content-analysis tests, the model proved to be on par with ChatGPT and Claude in quality, albeit with a more concise style. A somewhat trivial example suggested to me that Le Chat is more assertive than ChatGPT or Claude. The former especially (and sometimes the latter as well) tends to be too easily swayed by the human it is talking to.

Here is the example: I asked it which Spanish soccer club Xataka would be if it were one. Its answer was Barcelona. It argued its case reasonably well, but I pushed back pointedly: "Why not Real Madrid? Xataka is by far the most-read technology site in Spain, well ahead of the runner-up, and Real Madrid is the Spanish club with the most titles."

This was its answer. Club loyalties aside, I liked that it stood its ground and did not simply give in to my follow-up:

The choice of FC Barcelona to compare with Xataka is based on several factors that go beyond the number of titles. Here are some reasons why I opted for Barcelona instead of Real Madrid:

- Style and philosophy: FC Barcelona is known for its distinctive style of play, often associated with "tiki-taka", which is innovative and different. Xataka, being a technology blog, also stands out for its innovative approach and its ability to adapt to new technological trends.
- Identity and community: FC Barcelona has a strong Catalan identity and a very close-knit community of fans. Xataka also has a community of highly committed readers who are passionate about technology, which reinforces that sense of community.
- Adaptability and cutting edge: Although Real Madrid is a huge club with many titles, FC Barcelona has historically been known for its ability to adapt and stay at the forefront in terms of playing style and talent development. This aligns with how Xataka stays at the forefront of technology.
- Global recognition: Both Xataka and FC Barcelona enjoy significant global recognition in their respective fields. Although Real Madrid is also globally recognized, the way FC Barcelona has built its unique brand and style resonates more with Xataka's identity.

These reasons do not imply that Real Madrid is less relevant; they simply highlight how certain aspects of FC Barcelona resonate more with Xataka's identity and approach as a technology blog.

By the way, when I asked which media outlet Real Madrid would be, it built an argument around prestige, global reach, awards and so on: El País. That sounded reasonable enough, but when, by now a little wary, I asked which one Valencia would be, it told me El Mundo. Frowning, I asked which one Atlético de Madrid was, then. Answer: Público. End of test.

Personalized agents and automation

One of Le Chat's most interesting features is its agent system, which lets you create specialized assistants and invoke them with "@". This feature, similar to OpenAI's GPTs or Claude's Projects but with a different approach, lets you automate specific tasks and build personalized workflows. There is a catch, though: as with GPTs and Projects, it requires a Pro subscription and is not available on free plans.

Image: Xataka.

Agents can be configured in two ways: through the visual interface of La Plateforme or through the API for developers. Interestingly, you can customize aspects such as:

- The base model (Mistral Large 2, Mistral Nemo or Codestral).
- The "temperature", or the tone of the answers (to make them more creative or more precise).
- Specific behavioral instructions.
- Usage examples to improve the agent's performance.

Unlike ChatGPT's GPTs, which are geared more toward the end user, Le Chat's agents seem to have been designed with business integrations and automated workflows in mind as well. While Claude does not yet offer equivalent functionality (although Claude Pro does offer extended context), Mistral's approach seems to sit halfway between ChatGPT's ease of use and the flexibility developers are looking for.
In my experience, creating agents is more technical than with GPTs, but also more powerful in terms of customization and …