I have a ChatGPT at home

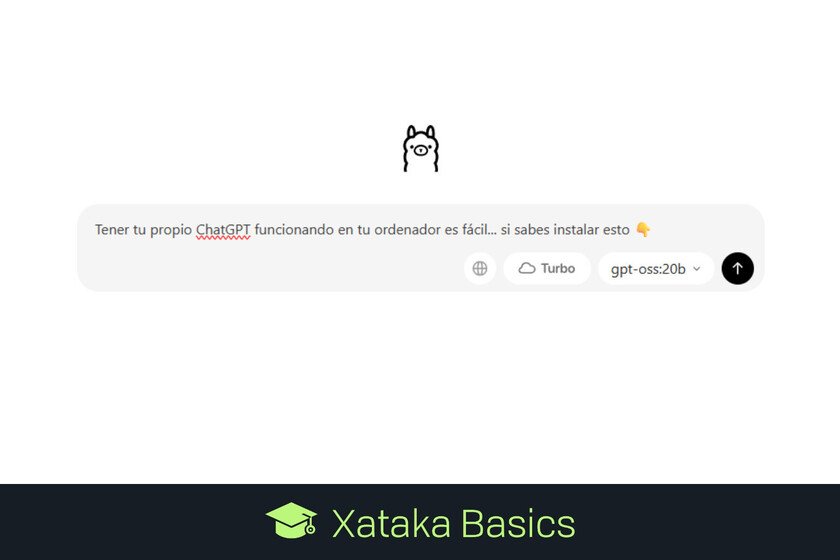

Using ChatGPT in the cloud is fantastic. It is always there, available, remembering our previous chats and responding quickly and efficiently. But depending on that service also has drawbacks (cost, privacy), and that is where a great possibility comes in: running AI models at home. For example, setting up a local ChatGPT.

This is what we have been able to verify at Xataka by trying OpenAI's new open models. In our case we wanted to try the gpt-oss-20b model, which in theory can be used without too many problems with 16 GB of memory.

That is at least what Sam Altman boasted yesterday: after the launch he stated that the larger model (120b) can run on a high-end laptop, while the smaller one can run on a phone.

Our experience, which has been a bumpy one, confirms those words.

First tests: failure

After trying the model for a couple of hours, that statement seemed exaggerated to me. My tests were simple: I have a Mac mini M4 with 16 GB of unified memory, and for months I have been testing AI models through Ollama, an application that makes it especially easy to download and run them at home.

In this case, the process for testing OpenAI's new "small" model was simple:

1. Install Ollama on my Mac (I already had it installed)
2. Open a terminal in macOS
3. Download and run the OpenAI model with a simple command: `ollama run gpt-oss:20b`

On doing so, the tool starts downloading the model, which weighs about 13 GB, and then runs it. Launching it takes a while: those 13 GB have to be moved from disk into the Mac's unified memory. After one or two minutes, the prompt appears indicating that you can start typing and chatting with gpt-oss-20b.

That's when I started asking it a few things, such as the now-traditional test of counting Rs.
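As a point of comparison, the R-counting test has an exact answer that conventional code can compute directly. Here is a minimal Python sketch, assuming the phrase exactly as it is worded in this article and treating upper and lower case alike; the function name `count_letter_per_word` is purely illustrative.

```python
def count_letter_per_word(phrase: str, letter: str = "r") -> list[tuple[str, int]]:
    """Return (word, count) pairs for each word containing the letter, ignoring case."""
    target = letter.lower()
    result = []
    for word in phrase.split():
        n = word.lower().count(target)
        if n:
            result.append((word, n))
    return result


# The test phrase as it appears in this article.
PHRASE = "San Roque's dog has no tail because Ramón Ramírez has cut it"

per_word = count_letter_per_word(PHRASE)
total = sum(n for _, n in per_word)

print(per_word)  # words that contain at least one R, with their counts
print(total)     # → 4
```

For this wording of the phrase the total is 4; a different wording or language would naturally give a different count.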
So I started by asking the model the question "How many 'R's are there in the phrase 'San Roque's dog has no tail because Ramón Ramírez has cut it'?"

At that point gpt-oss-20b began to "think" and showed its chain of thought in a dimmer gray. Reading it reveals that the model did indeed answer the question perfectly: it split the phrase into words and then broke down each word to find out how many Rs it contained. It added them up at the end and got the correct result.

The problem? It was slow. Very slow. Not only that: during the first run of this model I had two Firefox instances open with about 15 tabs each, plus a Slack session on macOS.

That was a problem, because gpt-oss-20b needs at least 13 GB of RAM, and Firefox, Slack and the system's own background services already consume a lot.

As a result, when I tried to use it, the system collapsed. Suddenly my Mac mini M4 with 16 GB of unified memory was completely frozen, not responding to any keystroke or mouse movement. It was dead, so I had to force a hard restart. After restarting I simply opened the terminal to run Ollama, and this time I could use gpt-oss-20b, although, as I say, limited by the slowness of its answers.

That meant I could not run many more tests either. I tried to start a trivial conversation, but there I made a mistake: this is a reasoning model, so it always tries to answer better than a model that does not reason, and that means it takes even longer to respond and consumes more resources. On a machine like this, which is already short on memory from the start, that is a problem.

In the end, total success

After I shared the experience on X, some messages there encouraged me to try again, but this time with LM Studio, which offers a graphical interface much closer to what ChatGPT offers in the browser.
Of the 16 GB of unified memory in my Mac mini M4, LM Studio indicates that 10.67 GB are dedicated to graphics memory right now. That figure was key to using OpenAI's open model without problems.

After installing LM Studio and downloading the model again I got ready to try it, but when I did, it gave me an error saying that I did not have enough resources to start the model.

The problem: the allocated graphics memory, which was insufficient. Browsing the application's settings I found that the unified memory had been split in a particular way, with 10.67 GB assigned to graphics memory in that session.

The key is to "lighten" the execution of the model. To do so, you can lower the "GPU offload" level, that is, how many layers of the model are loaded onto the GPU. The more layers we load, the faster the model runs, but the more graphics memory it consumes. Setting that limit to 10, for example, was a good option.

There are other options, such as disabling "Offload KV Cache to GPU Memory" (which caches intermediate results) or reducing the "Evaluation Batch Size", that is, how many tokens are processed in parallel, which can be lowered from 512 to 256 or even 128.

With these parameters set, I finally got the model loaded into memory (it takes a few seconds) and could use it. And there things changed, because I found myself with a more than decent ChatGPT that answered questions quite quickly and was, in essence, very usable.

So I asked it about the Rs problem (it answered perfectly) and then also asked it to make a table of the five countries that have won the most football World Cups and finished as runners-up the most times. This test is relatively simple (the correct data is on Wikipedia), but AIs are …
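The GPU offload trade-off described above can be put into rough numbers. The sketch below is only a back-of-the-envelope estimate, not how LM Studio actually accounts for memory: the 13 GB and 10.67 GB figures come from this article, while the layer count of 24 is an assumption to check against the model card, as is the idea that every layer takes an equal share of the model. It also ignores the KV cache and other runtime buffers.

```python
MODEL_SIZE_GB = 13.0   # from the article: approximate size of gpt-oss-20b
GPU_BUDGET_GB = 10.67  # from the article: graphics memory reported by LM Studio
TOTAL_LAYERS = 24      # ASSUMPTION: layer count of the model, verify on the model card


def gpu_share_gb(layers_on_gpu: int) -> float:
    """Rough GPU memory for the offloaded layers, ASSUMING equally sized layers."""
    if not 0 <= layers_on_gpu <= TOTAL_LAYERS:
        raise ValueError("layers_on_gpu must be between 0 and TOTAL_LAYERS")
    return MODEL_SIZE_GB * layers_on_gpu / TOTAL_LAYERS


def fits(layers_on_gpu: int) -> bool:
    """True if the estimated share stays within the graphics memory budget."""
    return gpu_share_gb(layers_on_gpu) <= GPU_BUDGET_GB


# Optimistic upper bound on layers, since KV cache and buffers are ignored.
max_layers = int(GPU_BUDGET_GB / MODEL_SIZE_GB * TOTAL_LAYERS)

for layers in (TOTAL_LAYERS, 10):
    print(f"{layers} layers: ~{gpu_share_gb(layers):.1f} GB, fits: {fits(layers)}")
print(f"optimistic upper bound: {max_layers} layers")
```

Under these assumptions, offloading every layer would need about 13 GB and exceed the 10.67 GB budget, which is consistent with the insufficient-resources error, while 10 layers would need roughly 5.4 GB and leave room for the KV cache and other buffers.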