Nvidia has just presented the definitive chip against Intel and AMD. There is a problem: Windows

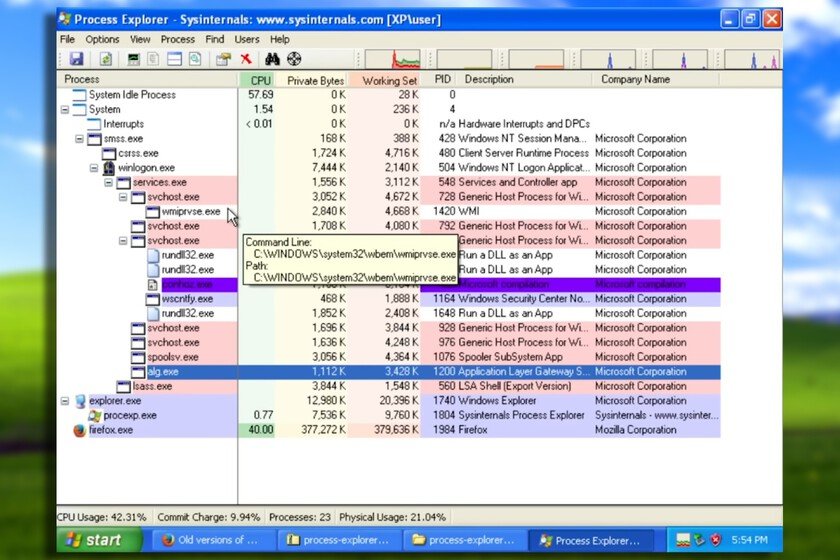

The Nvidia processor for PCs is the "boy who cried wolf" of consumer technology. The company has been the benchmark in gaming GPUs for years and has flirted with SoCs through its Tegra chips (which power both the Nintendo Switch and the Nintendo Switch 2), but it had never managed to get computers built with 100% Nvidia internals. That just changed with the presentation of the RTX Spark chips. This is an SoC that directly attacks the equation "Windows PC = Intel or AMD CPU", one positioned as the alternative to those traditional options and specifically designed to compete to be the heart of the consumer PC. Specifically, of laptops.

Now, although Microsoft and Nvidia have been building excitement for a few days and proclaiming a new era for the PC, there is a problem: Windows.

The brake is no longer silicon, it could be Windows

On paper, the proposal is very interesting. RTX Spark combines a Grace CPU with up to 20 cores, developed together with MediaTek (a curious detail), with an RTX Blackwell GPU with 6,144 cores. TSMC (who else) manufactures the chip on a 3-nanometer process. Beyond raw power, it has up to 128 GB of unified memory (the same design we see in Apple Silicon) and an NVLink interface that makes communication between RAM, CPU and GPU very fast.

Nvidia talks about rendering heavy 3D scenes on laptops, running models with 120 billion parameters, and running games at 1440p above 100 FPS with DLSS and ray tracing. The best part? Jensen Huang stressed at Computex that all of this comes in very thin and light laptops. It is the same strategy that Qualcomm follows. Microsoft itself has already presented a Surface with RTX Spark, and the architecture makes a lot of sense in today's world of light but powerful laptops, and also in desktop computers in the style of a Mac mini or a Mac Studio.
Compared with the more traditional PC industry, the GPU is estimated to be in the range of a laptop RTX 5070. Until it can be tested, it undeniably looks good and, although some figures are less favorable (such as bandwidth compared with Apple's most powerful chips), it is a welcome addition to a segment in which, outside the Intel/AMD duo, the only one trying was Qualcomm with chips like the Snapdragon X Elite.

And there is the key: RTX Spark, like Qualcomm's chips, is meant to be the heart of a Windows that is not at its brightest. RTX Spark is a chip with ARM architecture and, although Windows on ARM handles office tasks well, it starts to fall short under more demanding workloads. Microsoft's system, which the company itself knows is not at the height of its popularity because of the whole issue of AI features, has many shortcomings in its ARM version when it comes to gaming, precisely what Nvidia is promoting. It is also not the best-optimized system on portable machines, something we are seeing with Steam Deck-style handhelds.

We have seen it in recent years with the PC handheld consoles from ASUS, MSI or Lenovo: the hardware is good, but Windows drags the experience down significantly. The paradox is that the Steam Deck, despite being the least capable on paper, is usually the most recommended precisely because it avoids Windows and relies on a system far better tuned for that format.

With RTX Spark, the two companies say they have been working for a long time to solve those problems so that, this time, Windows on an ARM chip feels different, with support for games that use anti-cheat and native support for personal agents. We will see what actually arrives in practice, but two things are clear. The first is that Microsoft gains aggressive hardware to compete head to head with Apple in the field of very powerful laptops with long battery life.
The second is that Qualcomm is no longer alone in that arena, and it will be very interesting to see what hardware it responds with. Nvidia already has the chip, the CUDA ecosystem and agreements with all the manufacturers, as well as the backing of the giant TSMC. The "weak" link, therefore, is not the silicon. It is a Windows on ARM that has improved a lot in recent years but remains the element with the most to prove.