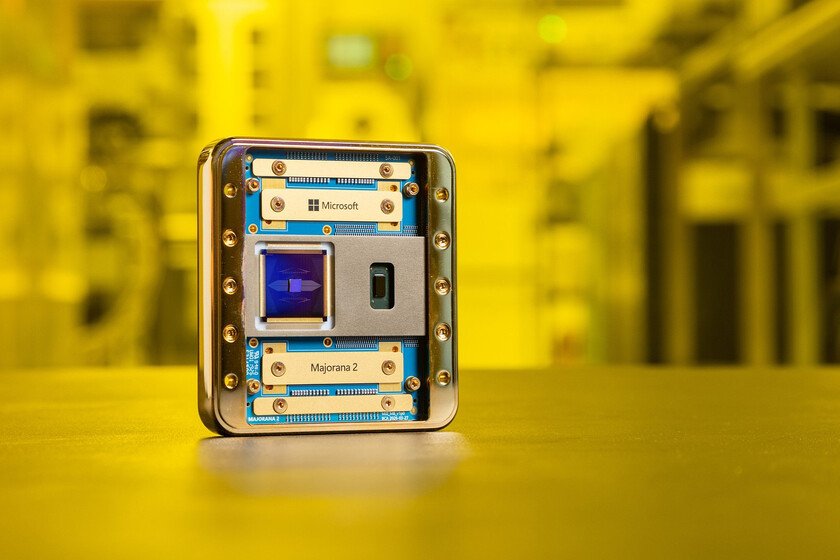

Microsoft believed it would take decades to have a useful quantum computer. Majorana 2 has just brought that deadline forward to 2029

Finding the Majorana particle would be the best thing that could happen to quantum computers. The Italian physicist Ettore Majorana described its existence mathematically in 1937, and since then many researchers have become obsessed with it because of the characteristic that makes it unique: it is both a particle and its own antiparticle. What makes it so attractive for quantum computing is that it appears in pairs, and its topological nature gives it a resistance to external noise that conventional qubits lack. Because the information is distributed across two separate points, local errors triggered by vibrations, temperature or radiation cannot easily erase it.

The combination of this pairing and its stability suggests that these particles could be used to build qubits that are more stable and less prone to external perturbations than those used in current quantum computers. That, at least, is what Microsoft is pursuing, with one important caveat: it sounds very good, but after the cold shower of 2021 physicists are extraordinarily cautious about them.

Microsoft promises to have a functional quantum computer in 2029

Microsoft does not work with Majorana fermions in the strict sense of the elementary particle predicted by Ettore Majorana. What it is looking for are Majorana modes, or Majorana quasiparticles: collective excitations that emerge in certain topological superconducting materials and behave as if they were Majorana fermions. They are not fundamental particles; they are emergent phenomena in the field of condensed matter.

This strategy allowed Microsoft to officially present Majorana 1, the first topological quantum processor, in February 2025. However, the scientific community received it with skepticism, because the Redmond company claimed to have created in silicon a state of matter that until then had existed only in theory.
Its proposal was to use Majorana modes as the basis for more stable quantum computing.

Majorana 2 has been developed with the help of the Discovery artificial intelligence

The problem is that Microsoft had tried to demonstrate something similar before, in 2018, and the scientific article that supported it ended up being retracted by Nature three years later. Majorana 1 was, in that sense, both a technical advance and an attempt to regain credibility.

And now Majorana 2 arrives. Microsoft has confirmed that this new quantum processor has been developed with the help of its artificial intelligence (AI) Discovery, and has also explained that it incorporates new materials intended to accelerate the arrival of an error-resistant, and therefore fully functional, quantum computer.

Chetan Nayak, CTO and Corporate Vice President of Quantum Hardware, has explained that the Microsoft Quantum team has improved the materials stack used in Majorana 1 in order to create a more stable topological phase. Majorana 2 replaces aluminum with lead, and upgrades the semiconducting active region to a combination of indium arsenide and indium arsenide-antimonide. According to Microsoft, this change in materials has brought significant performance improvements. It also helps protect the fragile qubits from cosmic disturbances that can destabilize them.

Be that as it may, this statement from Nayak summarizes the impact that Microsoft believes Majorana 2 will have on its roadmap: “Based on this rapid progress, we are accelerating our plan toward a scalable and practical quantum computer: we have cut our schedule in half and now aim to reach this goal in 2029.”

It is an ambitious promise. And given Microsoft’s track record in quantum computing, the scientific community has reason to remain demanding when evaluating it.

Image | Microsoft

More information | Microsoft