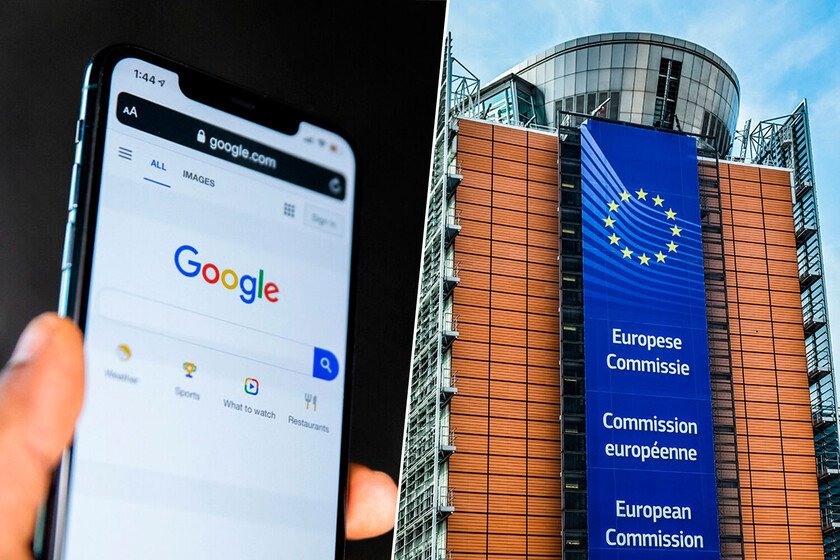

Google replaced news links with AI-generated summaries. Now the European Commission has something to say to it

In March of this year an earthquake shook European publishers. The cause was Google's rollout of AI Overviews in its search engine. Where links to news outlets previously appeared, an AI-generated summary now appears instead, to the detriment of the media, which in some cases have lost up to 50% of their traffic. Now the European Commission has taken action on the matter.

What has happened. The European Commission has formally opened a new antitrust investigation against Google. The reason this time is the use of content from media outlets and YouTube creators to feed its AI summaries, all without compensating the creators. The investigation will try to determine whether Google is distorting competition by imposing unfair terms on the media, while its access to content (especially in the case of YouTube) sidelines rival AI companies.

In the words of Teresa Ribera, Executive Vice-President for a Clean, Just and Competitive Transition at the European Commission: “AI is bringing remarkable innovation and many benefits to people and businesses across Europe, but this progress cannot come at the expense of the fundamental principles of our societies. That is why we are investigating whether Google has imposed unfair conditions on publishers and content creators, while putting developers of rival AI models at a disadvantage, in breach of EU competition rules.”

Why it matters. The investigation questions the model that Google has built around its generative AI, but it also calls into question the broader issue of how these tools use third-party content. It opens the door to reshaping the AI market by imposing limits and requiring compensation for original content creators.

The impact. As we said, the arrival of AI summaries has had a huge impact on media traffic. If readers get their answer without making a single click, that traffic is lost, and not only that: it is unrecoverable. Worst of all, to give that answer Google draws on the information published by those same media outlets. In the case of YouTube, creators are required to accept a clause allowing their content to be used for various purposes, including training Google's AI.

Consequences. The investigation has just begun and there is no set date for its conclusion, which could take years. It will examine whether Google has violated Article 102 of the Treaty on the Functioning of the EU and Article 54 of the Agreement on the European Economic Area, which prohibit the abuse of a dominant position. If Google is eventually found to have breached these rules, the Commission could force it to take measures to comply with the law, such as compensating creators, allowing them to opt out of having their content appear in summaries, or even removing the summaries across the EU, in addition to a possible fine.

And counting. This is not the first time that Google has faced monopoly accusations in the EU. In fact, it is the technology company that has accumulated the largest fines. The largest was 4.3 billion euros for abuse of a dominant position with Android, followed by 2.95 billion euros for abuse in the advertising market. It also had to pay 2.42 billion euros over Google Shopping and 1.49 billion euros over AdSense.

Images | Unsplash, European Commission

In Xataka | The EU has spent years fiercely fighting monopolies. Teresa Ribera has other plans for telecoms