TSMC and SK Hynix are suffocating Samsung. To defend itself, Samsung is already preparing a brutal weapon: 1nm chips

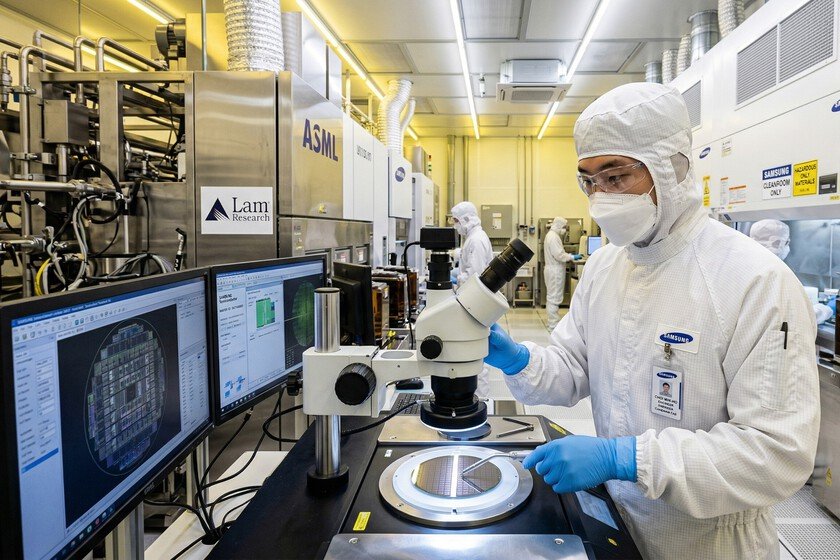

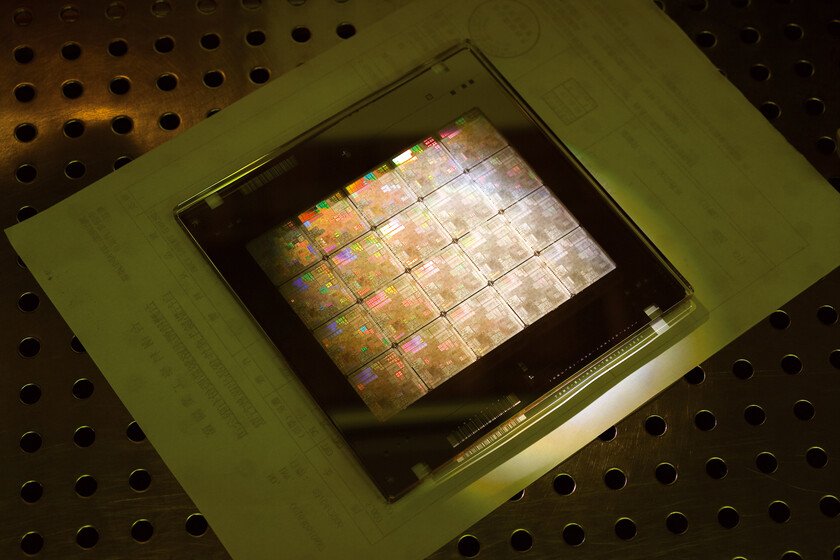

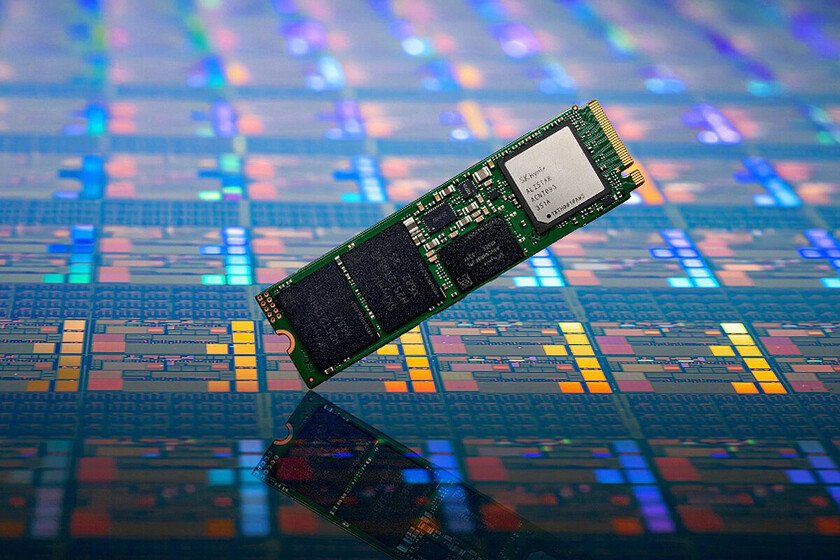

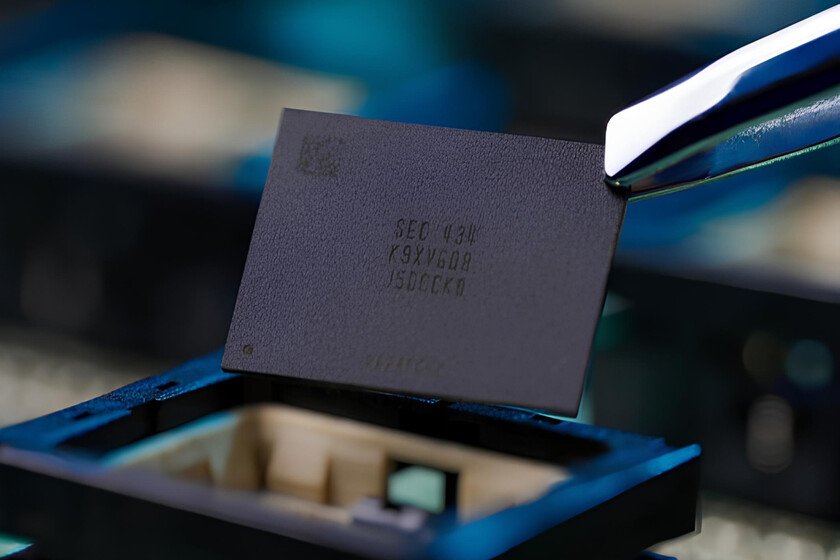

Samsung Electronics has two major competitors in the semiconductor industry: TSMC and SK Hynix. The Taiwanese company TSMC leads the market for manufacturing integrated circuits for third parties (the foundry business) with a share close to 70%, according to the consulting firm TrendForce. Samsung is the second-largest contract chipmaker, but with a market share of 7.2% it sits very far behind the industry leader. And the Chinese company SMIC (Semiconductor Manufacturing International Corp) is hot on its heels in third place with a share of 5.32%.

Samsung's other big business is memory chips. In this market it competes with the American company Micron Technology, but its biggest rival is fellow South Korean company SK Hynix. In recent years Samsung has led the DRAM memory chip market with a share of approximately 40%, while SK Hynix held a very respectable 29%. Behind both was Micron Technology, with approximately 26%.

However, during the first quarter of 2025 Samsung suffered a very important setback. SK Hynix controls no less than 70% of the market for HBM (High Bandwidth Memory) chips, so its leadership in this segment is overwhelming. In DRAM chips as a whole the figures are much more even, although SK Hynix leads there too. TSMC and SK Hynix. SK Hynix and TSMC. These two competitors are two big headaches for Samsung, but the Korean company seems unwilling to throw in the towel.

Samsung plans to have its 1nm photolithography ready in 2030

In February 2025 the Taiwan Economic Daily published a report stating that TSMC plans to build a cutting-edge semiconductor plant designed specifically to produce 1nm chips. It will be located in the Taiwanese city of Tainan and will be called ‘Fab 25’. It will work with 12-inch wafers, have six production lines and begin large-scale manufacturing in 2030. That may seem a long way off, but it is not.
In fact, according to the newspaper Korea Economic Daily, Samsung is working to catch up with TSMC and, along the way, to overtake SK Hynix.

Samsung's future 1nm production lines will benefit from the refinements the company is going to introduce in its 2nm nodes

Samsung engineers have already been working on their 1nm photolithography for many months, with the aim of completing the research and development phase in 2030 so that mass manufacturing can start in 2031. There is a lot at stake, but developing this technology is by no means a piece of cake. In fact, the company is currently trying to improve the performance of its 2nm nodes, because its Exynos 2600 processor for the Galaxy S26 and S26+ smartphones falls short in performance and energy efficiency when compared with comparable chips manufactured by TSMC on its 3nm nodes.

Be that as it may, Samsung's future 1nm production lines will benefit from the refinements the company is going to introduce in its 2nm nodes. Above all, they will take advantage of Fork Sheet technology, with which its engineers aim to overcome the limitations of Gate-All-Around (GAA) technology. Roughly speaking, Fork Sheet will allow them to make much better use of the space on 1nm chips by inserting a non-conductive (dielectric) wall between transistors with one purpose: to eliminate empty space and pack a higher density of transistors into the same area. It sounds really promising. We will tell you more as soon as we have detailed information about this innovation.

Image | Generated by Xataka with Gemini

More information | Korea Economic Daily