If you downloaded the wrong game on Steam a year ago, now the FBI is looking for you. And yes, it’s the real FBI

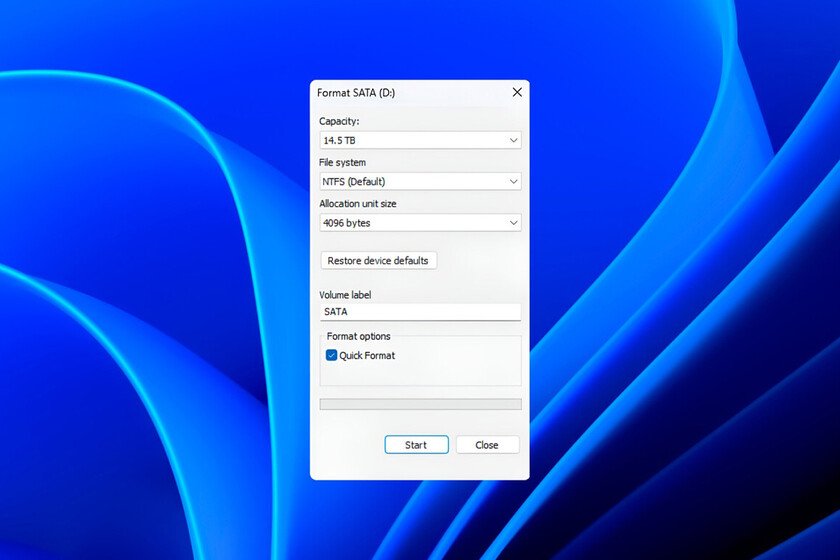

By now, we have all learned to be a little distrustful of what we see on the internet: alarmist messages, supposedly official warnings, stories that sound too serious to be true. So if someone tells us that the FBI could be looking for people who downloaded a game on Steam, the natural reaction is to assume it is another hoax and move on. In this case, however, it is worth pausing for a second, because what we are looking at does not fit that usual pattern.

The announcement. As Mein-MMO explains, what we know comes from a clear warning issued by the FBI itself, which has launched an investigation to identify users who may have been affected after installing certain games on Steam. Specifically, the Seattle division notes that these titles included malware, something that would have gone unnoticed by those who downloaded them. The time frame is broad, from May 2024 to January 2026, and that is when the agency believes the activity was concentrated.

When Valve has to confirm it. The curious thing about this case is that the communication with users has itself had to overcome an obvious barrier: mistrust. Several users on Reddit point out that Valve sent messages to those who may have been affected to inform them of the investigation, but added a clarification that is unusual in this type of notice. The message said: “We can confirm that the message and the linked website are, in fact, from the FBI.” It is not a minor detail, because it shows how suspicious a notice like this can look even when it is legitimate.

Which games are affected? The FBI has narrowed the case down to a specific set of games. Bitdefender, for its part, describes them as indie titles with little visibility on the platform, something that could have made it easier for them to go unnoticed for longer. The games mentioned so far are the following:

- BlockBlasters
- Chemia
- Dashverse/DashFPS
- Lampy
- Lunara
- PirateFi
- Tokenova

What were they really looking for?
At this point, it is important to understand what type of threat is described by the cybersecurity sources that have analyzed the case. According to the aforementioned cybersecurity firm, we are dealing with what is known as an “information stealer”, a type of program designed to collect sensitive data from a device without the user realizing it. The information it could extract includes credentials stored in the browser, authentication cookies that keep sessions open on different services and even data linked to cryptocurrency wallets.

The steps to follow. The agency is asking those who believe they may have been affected to fill out a specific form to provide information to the investigation. As the agency itself details, responses are voluntary, but they can help identify victims of a federal crime and, in some cases, provide access to services, restitution and rights provided for by law. The FBI adds that the identity of victims will be kept confidential.

Images | FBI | Compagnons