Google is serious about putting data centers in space. Elon Musk and Jeff Bezos are rubbing their hands

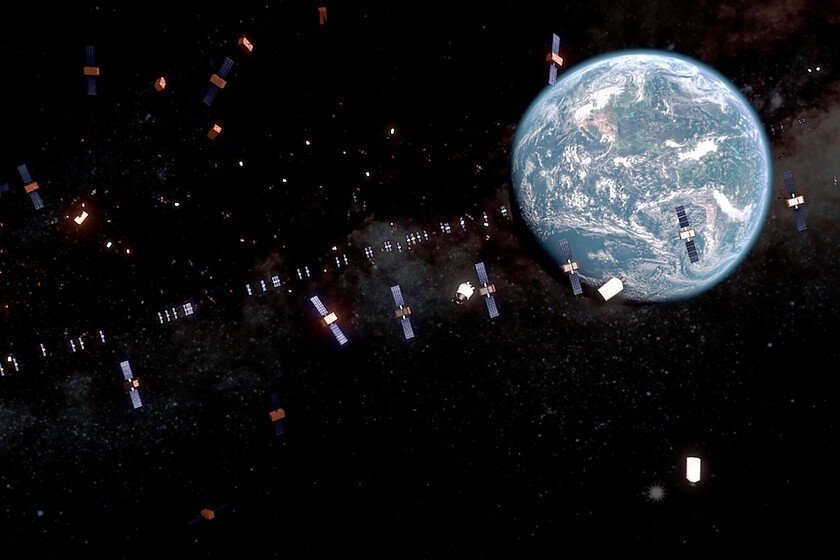

While some municipalities are still debating whether to let big technology companies build data centers within their borders, Google wants to go a step further: taking data centers to space. The company revealed its intentions a few weeks ago, and its Suncatcher project aims to launch two prototype satellites before 2027. Curiously, Elon Musk and Jeff Bezos are more than delighted with their rival's idea.

Suncatcher Project. Pushing the capabilities of artificial intelligence requires training it, and that requires huge data centers with spectacular computing power. The problem is that the energy needs of these facilities are astronomical: they have become resource sinks, leading oil companies to set aside their renewable energy plans and even prompting proposals to open "private" nuclear power plants.

Suncatcher couldn't have a more appropriate name. In space, without the influence of the atmosphere, solar panels capture the light spectrum differently, enough to feed those seemingly insatiable data centers. What Google proposes is to build constellations of dozens or hundreds of satellites orbiting in formation at an altitude of about 650 kilometers. Each would be equipped with Trillium TPUs (processors designed specifically for AI calculations), and they would be connected to each other via laser optical links.

Pichai brings up the topic everywhere. Although 2027 is the key date, Google is clearly keen to air its plans: doing so is both a show of technological power and an invitation for interested parties to invest in the process, as well as a way to keep inflating everything around AI. And the person repeating this message the most is the company's CEO himself, Sundar Pichai. Since Google's plans became known, Pichai has brought up the topic in every interview he has given.
He has said nothing new beyond the hope of having TPUs in space in 2027 and the ambition that, within a decade, extraterrestrial data centers will be the norm.

Musk and Bezos: competitors, but allies. If Google is interested in selling its narrative, so are two of its most direct competitors: Elon Musk and Jeff Bezos. Both Musk, with several of his companies, and Bezos, with Amazon Web Services, are in the race for data centers and artificial intelligence. They have some of the largest on the planet, but they also have something the rest of the competition lacks: the ability to launch things into space.

Musk with SpaceX and Bezos with Blue Origin have the tools to put satellites into orbit, charging for each kilo they launch. And that is the point: the more credible it seems that the future of computing lies in low Earth orbit, the more economic and political sense both SpaceX and Blue Origin will make. Both are Google's competition, but also Google's means of achieving its objective. Ultimately, we keep seeing rival companies renting each other's services.

Data center fever in space. At first, building these extraterrestrial data centers sounds like a crazy plan, but from the most pragmatic point of view (leaving aside logistics and the money that both development and each launch will cost), it is a plan that makes sense. In space, a panel can be up to eight times more productive than on the Earth's surface, and it generates electricity continuously because it does not depend on day/night cycles. That would eliminate the need for huge batteries, but also for complex water-based cooling systems. And, as we said, Google is not alone in this. There is currently a fever for space data centers, with the big technology companies in the spotlight.

Considerable challenges. That said, Google itself acknowledges that carrying out this strategy will not be easy. On the one hand, there are the costs.
The company claims that launch prices could fall from several thousand dollars per kilo to just $200/kg by the mid-2030s if the industry consolidates. In that case, it notes, the cost of launching and operating a space data center could be comparable to the energy costs of an equivalent terrestrial data center.

Another difficulty will be keeping the satellites in close formation. They would have to stay within 100-200 meters of each other for the optical links to be viable. And most importantly: the radiation tolerance of the TPUs. Google has been experimenting with this for years, but it still has to test the effects of radiation on sensitive components such as HBM memory.

Surely astronomers will be delighted with this strategy, just as they were with Starlink.

Image | ESA
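
For a sense of scale, the figures quoted in the article can be turned into a quick back-of-the-envelope calculation. The sketch below is only illustrative: the 1,000 kg satellite mass and the $3,000/kg "today" price are hypothetical placeholders (the article only says "several thousand dollars"), the orbit is assumed to be circular, and the Earth constants are standard textbook values rather than anything from Google.

```python
import math

# Standard physical constants (textbook values, not from the article).
EARTH_MU = 3.986004418e14    # Earth's gravitational parameter, m^3/s^2
EARTH_RADIUS_M = 6_371_000   # mean Earth radius, m


def orbital_period_minutes(altitude_km: float) -> float:
    """Period of a circular orbit at the given altitude (Kepler's third law)."""
    semi_major_axis_m = EARTH_RADIUS_M + altitude_km * 1_000
    period_s = 2 * math.pi * math.sqrt(semi_major_axis_m ** 3 / EARTH_MU)
    return period_s / 60


def launch_cost_usd(mass_kg: float, price_per_kg: float) -> float:
    """Launch cost as payload mass times price per kilogram."""
    return mass_kg * price_per_kg


if __name__ == "__main__":
    # Hypothetical values chosen only for illustration.
    satellite_mass_kg = 1_000
    price_today = 3_000      # stand-in for "several thousand dollars per kilo"
    price_projected = 200    # the $200/kg projection quoted in the article

    print(f"Orbital period at 650 km: {orbital_period_minutes(650):.1f} min")
    print(f"Launch cost today:     ${launch_cost_usd(satellite_mass_kg, price_today):,.0f}")
    print(f"Launch cost projected: ${launch_cost_usd(satellite_mass_kg, price_projected):,.0f}")
```

Under these assumptions, a satellite at 650 kilometers circles the Earth roughly every 97 to 98 minutes, and the projected price would cut the launch bill of the hypothetical satellite by a factor of fifteen.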