We thought the iPhone 17 and the Air stood out for their cameras and design. We have just discovered that they hide an exclusive security feature

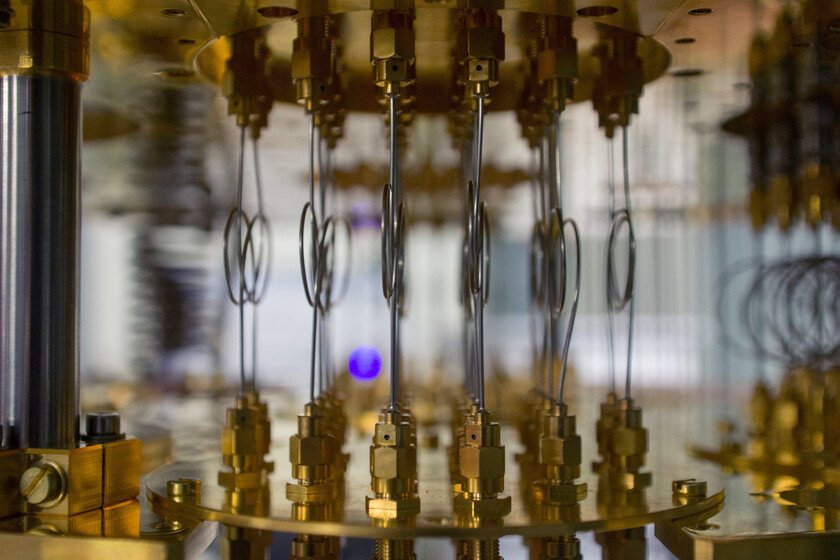

For a long time we have lived with the illusion that impenetrable computer systems exist. The reality is less clear-cut: in security, everything comes down to how much effort, time and resources it takes to force a lock. Just as opening a front door is not the same as opening a bank vault, the digital world has barriers of varying strength, along with unexpected shortcuts that avoid brute force altogether. The goal of defense is not perfection but raising the toll until breaking in becomes impractical. From that point on, risk never disappears; it is managed.

With that practical outlook, Apple has been adding layers to make every step more expensive for the attacker and to shrink their room for maneuver. According to the Cupertino company, the most sophisticated exploit chains it has observed against iOS come from mercenary spyware and rely on memory vulnerabilities. Although Apple does not name it explicitly, it is surely referring to threats such as Pegasus, from NSO Group. The answer it has come up with is a new piece in that wall: a reinforcement that integrates hardware and operating system to monitor memory integrity and cut off overflows or improper accesses before they succeed.

Memory Integrity Enforcement on iPhone 17 and iPhone Air

Apple has presented Memory Integrity Enforcement (MIE) as part of the new iPhone 17, iPhone 17 Pro and Pro Max and iPhone Air: a memory defense built directly into the hardware and the operating system. It is the result of five years of joint work between its chip and software engineering teams, with the aim of drastically raising the cost and complexity of attacks based on memory corruption. MIE promises to work continuously and transparently, covering critical areas such as the kernel and more than 70 user-space processes, all without compromising power consumption or device performance. At its core, MIE combines several layers that work in coordination to reinforce security.
Typed memory allocators organize data by type, as if each class of object had its own drawer. This arrangement makes it harder for a bug in a program to let one piece of data overwrite another. If a fault does occur, the system can detect it before it turns into an attack. On top of this foundation sits the Enhanced Memory Tagging Extension (EMTE), a hardware technology that adds an extra layer of memory control. EMTE works by assigning a “secret tag” to each block of memory. Every time an app or the system wants to access that block, it must present the correct tag; if the tag does not match, the hardware blocks the attempt and the system can terminate the process.

This always-on, synchronous check makes it possible to detect and stop classic attacks such as buffer overflows and use-after-free, which are common techniques for taking control of a device. The allocators protect memory use at a coarse scale, while EMTE brings precision to the smallest blocks, where software alone is less effective.

The bet responds to a threat landscape in which the highest-level attacks against iOS are expensive, complex and targeted, and historically associated with state actors. These chains tend to share a common denominator: they exploit interchangeable memory safety vulnerabilities that exist throughout the industry. MIE aims to cut off an attack's progress in its early stages, when the attacker still has little room to maneuver and depends on chaining together multiple fragile steps to gain control.

Figure: Apple chart showing real exploit chains and the points where MIE blocks them

The scope of protection includes the kernel and extends to key system processes that are common entry targets.
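The tag check described above can be sketched as a toy model in Python. This is not Apple's implementation: the `TaggedHeap` class, its method names and the bump-allocation strategy are hypothetical, and the 16-byte granule and 4-bit tag width are borrowed from Arm's published Memory Tagging Extension as an assumption, since the article does not describe EMTE's internals. The sketch only illustrates why a mismatched tag stops both an overflow into a neighbouring block and a use-after-free.

```python
import secrets

GRANULE = 16   # bytes covered by one tag (Arm MTE's granule size; assumed here)
TAG_BITS = 4   # Arm MTE uses 4-bit tags; EMTE internals are not in the article


class TagCheckFault(Exception):
    """The pointer's tag does not match the tag stored for the memory."""


class TaggedHeap:
    """Toy bump allocator with per-granule tags. Hypothetical, for illustration."""

    def __init__(self, size):
        self.data = bytearray(size)
        self.tags = [0] * (size // GRANULE)   # tag 0 marks unallocated memory
        self.next_granule = 0

    def _new_tag(self, *exclude):
        # Random non-zero tag that differs from every tag in `exclude`.
        while True:
            tag = secrets.randbelow(2 ** TAG_BITS - 1) + 1
            if tag not in exclude:
                return tag

    def _retag(self, first, count, tag):
        for g in range(first, first + count):
            self.tags[g] = tag

    def malloc(self, nbytes):
        count = -(-nbytes // GRANULE)          # round up to whole granules
        first = self.next_granule
        if first + count > len(self.tags):
            raise MemoryError("toy heap exhausted")
        left = self.tags[first - 1] if first else 0
        tag = self._new_tag(left)              # differs from the block just before
        self._retag(first, count, tag)
        self.next_granule += count
        return (tag, first * GRANULE)          # a "pointer" is (tag, address)

    def free(self, ptr, nbytes):
        tag, addr = ptr
        self._check(ptr, 0)                    # reject stale or forged pointers
        count = -(-nbytes // GRANULE)
        # Retag on free so any stale copy of the old pointer stops matching.
        self._retag(addr // GRANULE, count, self._new_tag(tag))

    def _check(self, ptr, offset):
        tag, addr = ptr
        target = addr + offset
        if not 0 <= target < len(self.data) or self.tags[target // GRANULE] != tag:
            raise TagCheckFault(f"tag mismatch at address {target}")
        return target

    def load(self, ptr, offset):
        return self.data[self._check(ptr, offset)]

    def store(self, ptr, offset, value):
        self.data[self._check(ptr, offset)] = value


def faults(action):
    """Return True if the action triggers a tag check fault."""
    try:
        action()
    except TagCheckFault:
        return True
    return False


heap = TaggedHeap(64)
a = heap.malloc(16)
b = heap.malloc(16)
heap.store(a, 0, 0x41)                                     # in bounds: allowed
overflow_caught = faults(lambda: heap.store(a, 16, 0x42))  # spills into b
heap.free(a, 16)
uaf_caught = faults(lambda: heap.load(a, 0))               # stale pointer
print(overflow_caught, uaf_caught)                         # prints "True True"
```

In this model the check runs before every load and store, which mirrors the synchronous behaviour the article describes: the faulting write never reaches memory, so `b` is left untouched.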
In addition, Apple gives developers the ability to test and integrate these defenses through the Enhanced Security option in Xcode, including EMTE capabilities on compatible hardware. That is especially relevant for applications in which a user can be directly targeted, such as messaging apps or social networks, which often appear at the start of exploit chains.

To sustain synchronous tagging and checking without a perceptible impact, Apple redesigned the A19 and A19 Pro, dedicating CPU area, CPU speed and memory to tag storage. The company precisely modeled where and how to deploy EMTE so that the hardware can keep up with the demand for checks. The software, for its part, relies on typed allocations to raise the bar against memory corruption, while the hardware takes on fine-grained verification. As noted above, this should preserve the expected experience in performance and battery life.

Apple evaluated the project with its offensive research team from 2020 to 2025: first with conceptual exercises, then with practical attacks in simulated environments and, finally, on hardware prototypes. This prolonged collaboration made it possible to identify and shut down entire exploitation strategies before launch. According to Apple, even when trying to rebuild known real-world chains, the team was unable to restore them reliably against MIE, because too many steps were neutralized at the base.

Even so, Apple points out that perfect security does not exist. Very rare cases could survive, such as certain overflows within the same allocation. For previous generations without EMTE support, the company promises to keep expanding software-based improvements and secure memory allocators, with the aim of bringing part of these benefits to older devices without affecting their stability. Ultimately, MIE does not eliminate risk, but it does rewrite the rules of the game by raising the cost and difficulty of memory corruption techniques.
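The residual case mentioned above, an overflow that stays inside a single allocation, can be illustrated with a small Python sketch. It assumes, as in Arm's Memory Tagging Extension, that one tag covers a 16-byte granule and that every granule of an allocation shares the same tag. The record layout, tag values and function names are hypothetical and only serve the illustration; this is not how Apple's hardware or allocators are implemented.

```python
GRANULE = 16                      # bytes per tag, as in Arm MTE (assumed here)


class TagCheckFault(Exception):
    """The pointer's tag does not match the tag of the memory it touches."""


memory = bytearray(48)
# Granules 0-1 belong to one 32-byte record; granule 2 is a neighbouring block.
granule_tags = [0x3, 0x3, 0x9]

RECORD_TAG = 0x3
NAME_OFFSET, NAME_SIZE = 0, 16    # hypothetical layout: 16-byte name buffer...
FLAG_OFFSET = 16                  # ...followed by a flag in the same allocation


def store(tag, address, value):
    # Tag check performed before every write.
    if granule_tags[address // GRANULE] != tag:
        raise TagCheckFault(address)
    memory[address] = value


def copy_name(data):
    # Buggy on purpose: the length is never compared against NAME_SIZE.
    for i, byte in enumerate(data):
        store(RECORD_TAG, NAME_OFFSET + i, byte)


copy_name(b"A" * 17)              # one byte too long, still inside the record
flag_corrupted = memory[FLAG_OFFSET] == ord("A")   # same tag, so no fault

try:
    copy_name(b"B" * 33)          # now the write reaches the neighbour's granule
    neighbour_fault = False
except TagCheckFault:
    neighbour_fault = True

print(flag_corrupted, neighbour_fault)   # prints "True True"
```

The first overflow goes unnoticed because the name buffer and the flag carry the same tag; only when the write crosses into a differently tagged block does the check stop it.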
For anyone buying an iPhone 17 or an iPhone Air, this translates into always-on protection that, according to Apple, is invisible to the user.

Images | Xataka with Gemini 2.5