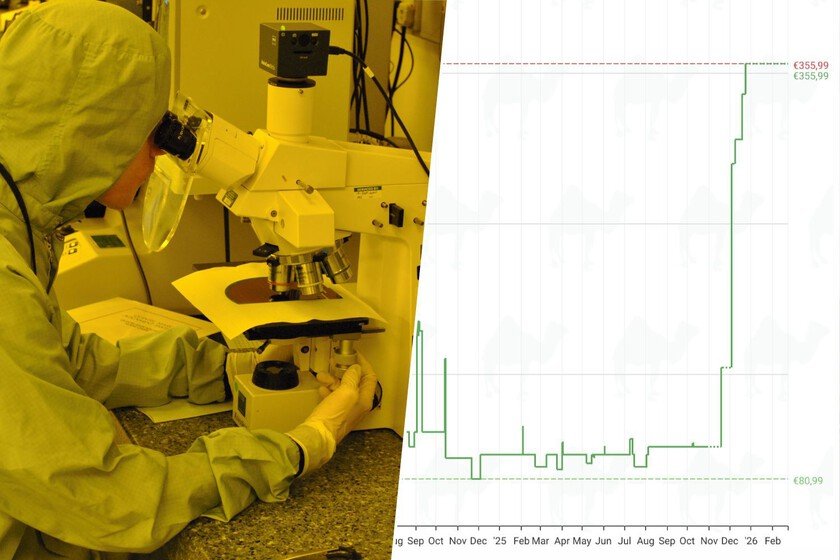

There is no RAM for so much AI.

At this point, no one can ignore that we are fully immersed in a new component crisis. Unlike the perfect storm that shook the technology industry in 2020, this crisis has a very specific cause: the voracity of data centers and artificial intelligence. In recent weeks we have seen gloomy news everywhere, but now one of the main players behind the RAM shortage has said that things are not going to stay the same. They are going to get worse.

30% short of the goal.

Chey Tae-won is not just anyone. He is the chairman of SK Group, one of the largest conglomerates in the world, a South Korean giant with businesses ranging from energy and chemicals to telecommunications. It also owns SK Hynix, one of the world's largest memory manufacturers. If anyone has an authoritative voice in this crisis, it is him. And what did he say? That the RAM storm still has a long way to go. In a recent interview, he stated that memory supply will fall more than 30% short of AI demand this year. In other words, even after turning all their production to high-performance memory for AI and completely abandoning the consumer sector, manufacturers will be far from able to satisfy what companies like NVIDIA are demanding.

Structural problem.

We have been covering the state of the industry for weeks, but now we understand the extent to which the consumer sector has taken a back seat for memory manufacturers. The line "we have given everything and we are going to fall 30% short of the goal" is tremendously revealing, and it explains why everything with a memory chip is rising in price. Micron, SK Hynix, and Samsung are the three companies that lead memory production.
They make both consumer memory (for phones, PCs, routers, TVs, or cars) and professional memory (high-bandwidth HBM). However, their production is not unlimited: if they want to increase output of one type of memory, they must reduce output of the other. That is exactly what is happening. The AI business is memory-hungry, and for every unit of high-bandwidth memory produced, several units of standard memory for other devices must be sacrificed. According to a Micron vice president, this creates a bottleneck and an "unprecedented" shortage: the AI industry is consuming all memory production capacity, leaving the conventional segment severely short.

All sold.

For consumers, buying an SSD, a RAM module, or a large-capacity HDD is a luxury right now. For those who control chip production, things are going well: they are selling their entire output before they even start "printing" chips. Chey Tae-won himself has said that the profit margins on SK Hynix's HBM4 chips are stratospheric, around 60%. Micron has stated that all of its HBM production capacity for 2026 is already sold, echoing similar statements Western Digital made a few days ago. This means they have already sold components that do not yet exist, for graphics cards that do not yet exist, which will power data centers that do not yet exist.

Abandoning ship.

Samsung, SK, and Micron are expanding their production lines and opening factories, but getting clean rooms up and running to make chips is a slow process. Micron's new plants, for example, are not expected to start making RAM until 2028. When they do, they will likely make memory for data centers rather than bring consumer prices down. In the end, there are only a few suppliers for many manufacturers, and that has another consequence: some brands will have to drop out.
The head of SK Group has said that "there will probably be PC and smartphone manufacturers that will end up abandoning their businesses," and he has not been the only one. A few days ago, the head of Phison, a company that makes memory controllers, said much the same. It is easy to understand why. If memory costs a low-volume manufacturer much more, it has two options: sell a PC or phone with less RAM, or sell the same product at a much higher price. Neither is a good idea.

Image: the price of 32 GB of Crucial DDR5 RAM. Micron's Crucial no longer exists.

Not very hopeful forecasts.

The big question is when this situation will end. SMIC, the large Chinese foundry, estimates that the storm will last a while because everyone wants to build their infrastructure for the next decade within the next two years. Some analysts estimate that manufacturers, such as those in the automotive sector, are stockpiling memory out of "panic" that it will run out. HBM4 memory is being produced now, but in a few years there will be a superior technology that makes AI faster and more capable... and the industry will turn to it again, if the bubble doesn't burst first.

Domino.

Meanwhile, companies like Tesla, Intel, or the Japanese giant SoftBank want to move fully into the DRAM market. Chinese companies like CXMT also have an opportunity to cover the memory demand that AI leaves unmet in devices such as laptops. Although we can already see the impact on the price of individual components, we will have to wait to see what happens with fully assembled devices. Lenovo has indicated that laptop prices are going to rise. There are also warnings of significant price increases for mobile phones, especially low- and mid-range devices, where RAM accounts for a large part of the product cost.
As I have said before, we had better cross our fingers that our phone or PC does not break, because when it is time to replace it, the price will not be pleasant.

Images | Xataka, Bananovaya