Sierra was the world's second most powerful supercomputer. When its time came, it ended up in the shredder, literally

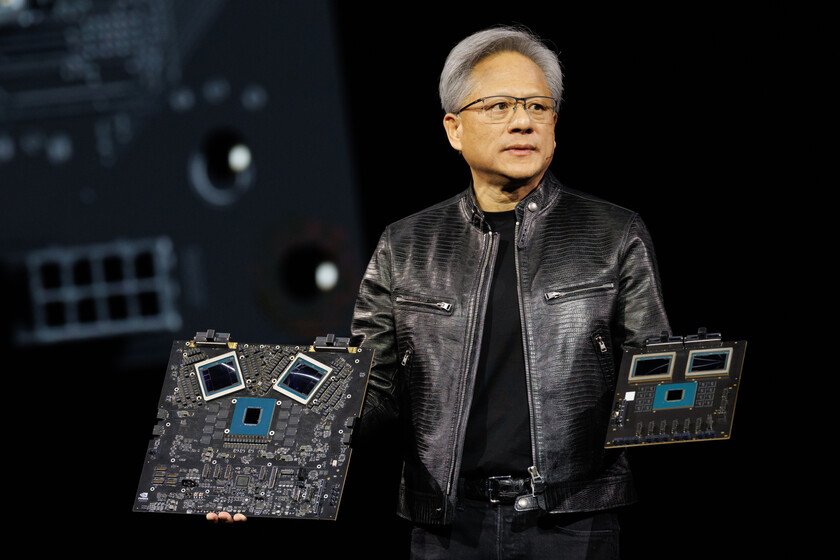

Supercomputers represent the extreme of modern computing: machines capable of performing enormous numbers of calculations every second and supporting scientific or strategic projects of great complexity. Sierra was one of those giants. For years it operated at the Lawrence Livermore National Laboratory, where it ran highly sensitive simulations for the United States Government. At its peak it held second place in the TOP500 ranking, which lists the world's fastest supercomputers. But in high-performance computing, even the most advanced systems have a limited lifespan. After seven years of service, Sierra has been retired.

**A giant for simulations.** When Sierra began operating in 2018 at the Livermore facility, it joined the center's high-performance computing infrastructure to support the nuclear stockpile maintenance program managed by the National Nuclear Security Administration. Instead of resorting to real nuclear tests, scientists use computer simulations capable of reproducing the behavior of the weapons and of the materials involved in their design. This work requires extraordinary computing power and also has implications in areas such as nonproliferation and counterterrorism.

**Almost at the top of the ranking.** For several years, Sierra was among the fastest machines on the planet. According to the TOP500 ranking, it recorded 94.64 petaflops, that is, roughly 94.64 quadrillion floating-point operations per second. To achieve this, it used an architecture that was unusual at the time, based on IBM Power9 processors combined with NVIDIA Volta V100 graphics accelerators. This design allowed work to be distributed among thousands of computing nodes and offered a notable leap over previous generations of supercomputers.

**When the hardware starts to fail.** Supercomputers do not escape a reality common to any technological infrastructure: over the years, the hardware begins to deteriorate.
In systems of this type, the typical useful life is around five to seven years, a period after which the failure rate begins to grow and maintaining the system becomes more complex. As these machines accumulate hours of operation, the likelihood increases that certain components will fail or need to be replaced. In Sierra's case, part of the problem was also very specific: some of its components were no longer being manufactured, and the version of the operating system it ran had lost support.

**The successor.** Sierra's retirement is also tied to the arrival of a new generation of supercomputing at the center. In 2025, El Capitan began operating: the system destined to take Sierra's place within the laboratory's computing infrastructure. Although at first glance the two installations may look similar, the difference is on the inside. El Capitan uses an architecture based on AMD Instinct MI300A APUs with memory shared between CPU and GPU, which allows it to achieve much higher performance. According to data released by the lab, this machine can reach 1.809 exaflops, making it about 19 times faster than Sierra at its peak according to TOP500.

**Disassembling a supercomputer piece by piece.** The end of Sierra was not simply a matter of shutting down the system and leaving it out of commission. The process was carried out in several phases that began with the progressive removal of computing nodes and internal components. Technicians dismantled entire racks, removed batteries and separated different elements for recycling or controlled destruction. Some parts, such as system boards or metal structures, were sent to specialized facilities for shredding. Since Sierra had worked on simulations linked to the US nuclear arsenal, the laboratory had to rule out any possibility of partial data recovery or reconstruction of sensitive information, so the storage devices received even stricter treatment.
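
As a quick sanity check on the "about 19 times faster" comparison between El Capitan and Sierra, the two figures quoted in the article can be put in the same unit and divided. This is only an illustrative sketch: the constant and function names are made up for the example, and the inputs are simply the numbers cited above (94.64 petaflops and 1.809 exaflops).

```python
# Unit prefixes expressed in floating-point operations per second (FLOPS).
PETA = 10**15
EXA = 10**18

# Figures as quoted in the article (names here are illustrative only).
SIERRA_FLOPS = 94.64 * PETA        # Sierra's TOP500 result
EL_CAPITAN_FLOPS = 1.809 * EXA     # El Capitan, per the lab's data


def speedup(new_flops: float, old_flops: float) -> float:
    """Return how many times faster the new system is than the old one."""
    return new_flops / old_flops


ratio = speedup(EL_CAPITAN_FLOPS, SIERRA_FLOPS)
print(f"El Capitan / Sierra: {ratio:.1f}x")
```

The ratio comes out at a little over 19, which is consistent with the rounded figure given in the text.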
Images | United States Department of Energy