Something strange happened inside the Earth in 2011 and 27 years of data have not solved the mystery

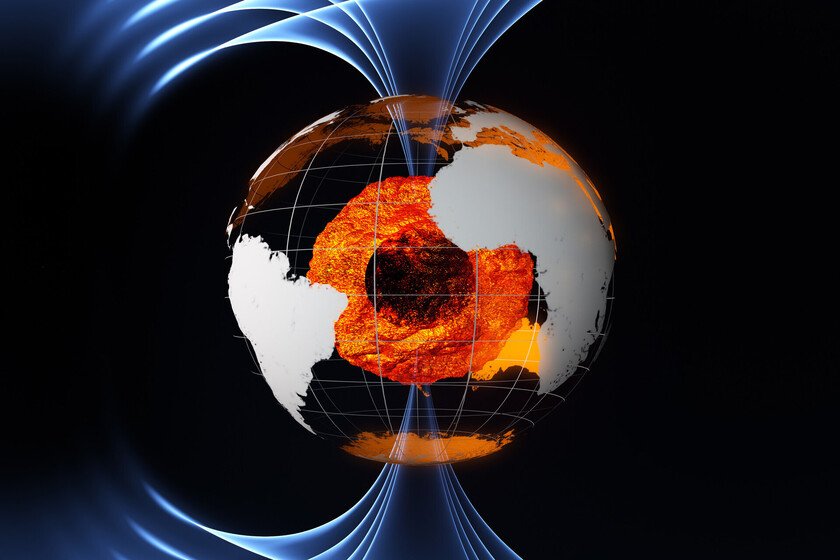

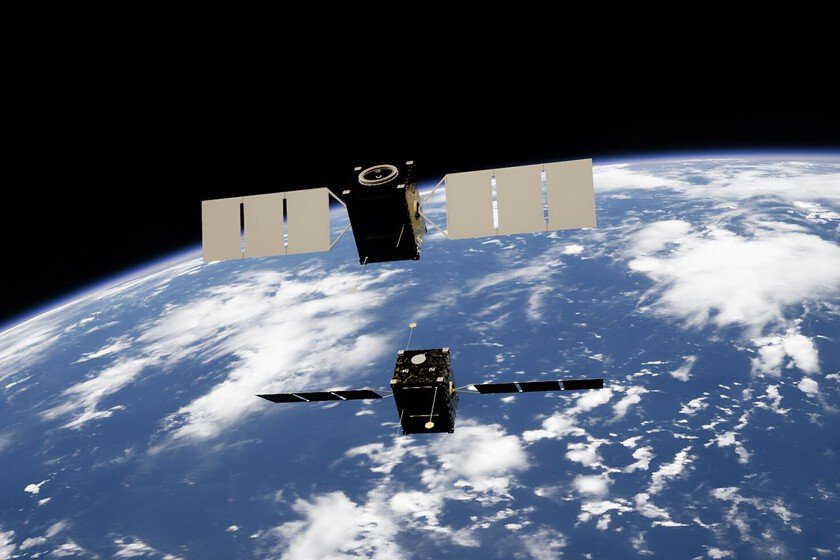

In 2011, scientists observed an unexpected change in the flow of the molten iron and nickel that make up the Earth's outer core. Although the flow at its surface normally moves westward, it was detected moving in the opposite direction, eastward. This was completely unusual and puzzling. The observation prompted a study, whose results have recently been published. Its goal was to find the cause, but for now there are only a few certainties and many open questions.

27 years of observations

The study retrospectively analyzed 27 years of the Earth's core behavior, between 1997 and 2025. The core cannot be observed directly. However, its behavior directly influences the Earth's magnetic field, so fluctuations in the core can be detected in the field through satellite observations. The analysis showed that while the outer core normally flows westward, one portion of it went from a weak westward flow in 2010 to a much stronger eastward flow in 2012. It stayed that way until 2020 and now appears to be starting to weaken again.

Three options

When the change was detected in 2011, three possible explanations were considered. First, it could be a one-off fluctuation. Second, it could be part of a periodic oscillation. Finally, it could be a way for the core's circulation to re-establish balance. For now, the satellite observations only show that the change was gradual: it began in 2010 and was already very clear by 2012, so when it was observed in 2011 it was in full transition.

Other simultaneous observations

Reanalysis of the data from that period also revealed seismic signals whose timing coincides with the change of direction. Geomagnetic jerks consistent with turbulent activity in the Earth's core have also been detected.

It's not a whirlpool
This change of direction did not occur throughout the core. To begin with, the Earth's core has two parts: the inner core and the outer core. The inner core is under such immense pressure that its metals remain solid despite the high temperatures. The outer core, by contrast, is liquid and therefore in motion. Even so, not all of the outer core changed direction. The change was confined to a specific region beneath the Pacific Ocean. It might look like a whirlpool, but the researchers concluded that it is not one, since the motion is part of a larger, wave-like structure. It is as if an entire section of this part of the core suddenly began moving contrary to expectations.

Why is it important

The motion of the molten metal in the core generates electric currents, which in turn produce a geomagnetic field that extends into space. Thanks to this motion, the Earth is surrounded by a magnetic shield that protects our atmosphere from erosion by solar wind particles. A change in the core's motion is not dangerous in itself: we are not going to lose our atmosphere, because the core is still there. However, understanding the core's fluctuations can also help us understand those of the magnetic field. The field does more than protect the atmosphere from erosion; it also deflects a large share of the particles that could affect our telecommunications systems. Understanding how this shield works can therefore help us anticipate the more extreme events that do cause technological disruption. That is why, although this study has produced a lot of interesting data, it is still not enough. We must keep monitoring the Earth's core to find out what caused the 2011 anomaly.

Image | ESA