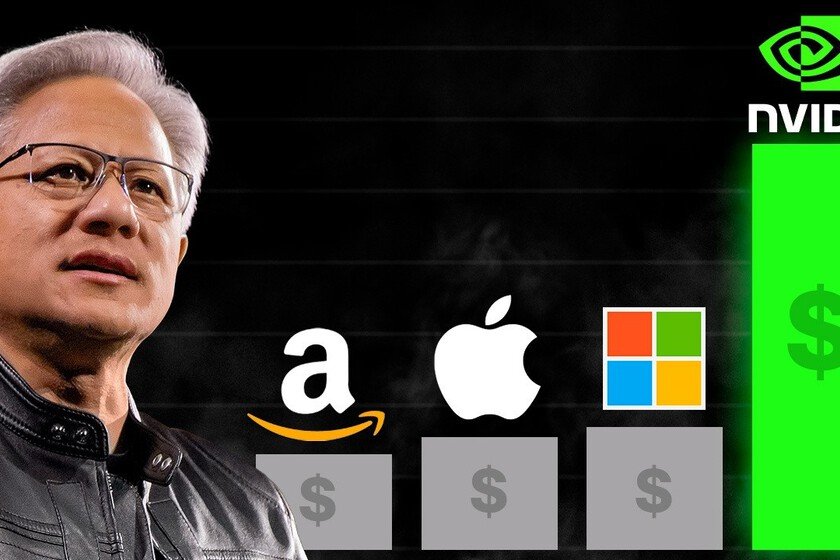

Michael Burry just bet against NVIDIA. Nothing unusual, except that he is the one who predicted the 2008 real estate bubble

Michael Burry, the well-known investor and fund manager who predicted the 2008 financial crisis, has revealed bearish positions against NVIDIA and Palantir just after posting a warning on social media about excessive optimism in the market. Bloomberg described the warning as ‘cryptic’, for several reasons. The moves, disclosed in regulatory filings submitted on Monday, have reopened the debate over whether artificial intelligence is generating a speculative bubble.

What exactly has Burry done? His investment fund, Scion Asset Management, bought put options (puts) worth $186.5 million against NVIDIA and $912.1 million against Palantir, according to mandatory filings with the SEC. These options gain value if the stock price falls. Burry also took bullish positions (calls) in Pfizer and Halliburton, two stocks that have underperformed the market this year.

Why does it matter? Burry is not just any investor. He is known for betting against the US housing market two years before the 2008 crash, enduring criticism from his investors until Lehman Brothers went bankrupt and his fund multiplied its profits. His story inspired the film ‘The Big Short’. Given that reputation, markets pay attention when Burry bets against something, although he is not infallible: he has been wrong about other bubbles in the past.

The context of his moves. Days before these positions became known, Burry broke two years of silence on social media with an unsettling message: “Sometimes we see bubbles. Sometimes you can do something about it. Sometimes the only winning move is not to play,” accompanied by an image of his character in the film. On Monday night he posted again, this time sharing a Bloomberg chart illustrating concerns about circular financing among OpenAI, NVIDIA and other AI companies.

Market reactions.
Palantir shares fell more than 10% following the news, even though the company had just raised its annual revenue guidance, and NVIDIA fell as much as 2.9%. Palantir CEO Alex Karp responded in an interview with CNBC, calling the idea of shorting companies like Palantir and NVIDIA, which he says are doing “noble tasks,” “crazy.”

The bubble debate. For months, many investors have questioned whether the AI boom is being artificially sustained. Ray Dalio, founder of Bridgewater Associates, recently told CNBC that “there are many things that look like bubbles,” although he clarified that bubbles do not usually burst until the Federal Reserve tightens monetary policy. According to his “bubble indicator”, approximately 80% of market gains are concentrated in large AI-related technology companies.

An important nuance. It is not entirely clear whether Burry is betting directly on a decline or whether these options are part of a more complex strategy to protect other investments. As Bloomberg points out, regulatory filings only reflect long positions, so if he were using these puts to hedge other investments, we would not know. Curiously, Scion’s first-quarter filing did include a note explaining that the puts “could be used to hedge long positions”, but the third-quarter filing says nothing about it.

Scion’s recent history. This is not the first time Burry has bet against NVIDIA. In the first quarter he had already liquidated almost his entire portfolio of listed shares and bought put options against the chipmaker. He has also had successes: in the third quarter, Scion closed positions in Alibaba (with a 36.5% profit), Estée Lauder (27%), ASML Holdings (45.7%) and Regeneron Pharmaceuticals (10.8%).

Canary in the coal mine or false alarm? The question on Wall Street is whether Burry is once again spotting a bubble before anyone else or whether he is wrong this time.
NVIDIA is up 54% this year, reaching a market capitalization of $5 trillion, while Palantir has soared 173% thanks to its expansion into AI-related businesses. Valuations are high, but both companies continue to grow their businesses. Be that as it may, if there is a bubble, we will find out the worst possible way: when it bursts.

Cover image | Solen Feyissa and ‘The Big Short’

In Xataka | The geopolitical irony that we are experiencing in the chip war has an unexpected beneficiary: Russia