Satellite images have revealed what happened to one of Russia’s biggest arsenals. Now we understand Moscow’s silence

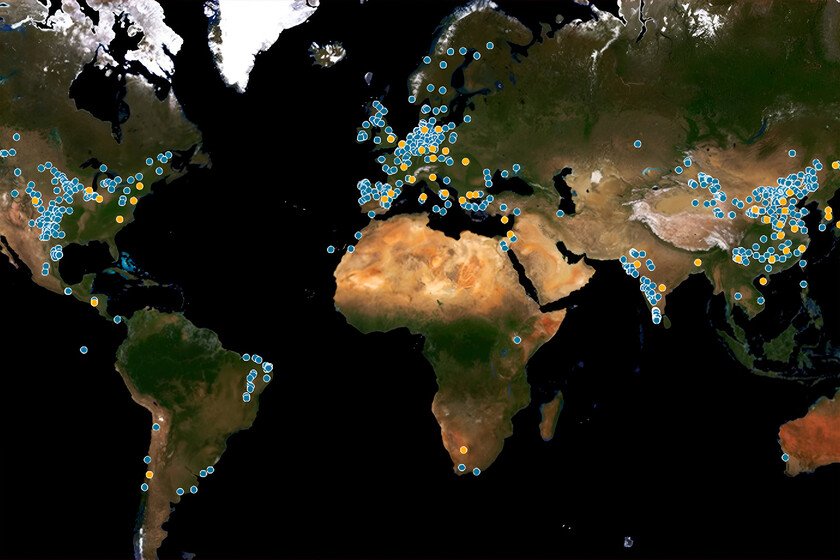

On April 22, satellites began to pick out a point on the planet. A change perceptible only in images from space offered the first clue to what was happening about 60 kilometers from Moscow. Despite that day's weather and the low resolution of the optical data captured by the European Space Agency's Sentinel-2 satellite, the damage was clearly visible. An explosion had “blown apart” the 51st Arsenal of the Main Missile and Artillery Directorate (GRAU) of the Russian Ministry of Defense.

Total devastation of the arsenal. The visual confirmation was reinforced by synthetic aperture radar (SAR) images, which can penetrate clouds and smoke and which showed significant structural changes in the core of the complex. A comparison of images taken on April 14 and April 23 indicated that at least 30 ammunition storage buildings had been completely destroyed.

Explosions, evacuations and blackouts. The day after the explosion, secondary detonations were still continuing, underlining the scale of the stored material. The force of the blast forced the evacuation of eight nearby towns, while 37 settlements were left without gas supply. The most distant evacuated town was 4.5 kilometers from the arsenal. Data from NASA's fire monitoring system also confirmed multiple fire hotspots within the perimeter, matching the analysis by open-source intelligence (OSINT) analyst MT Anderson, who used additional filters to detect heat sources and confirm the massive destruction of infrastructure.

A strategic arsenal. Then the scale of what had happened began to emerge. The 51st GRAU Arsenal was not simply an ammunition depot. As one of the eight main arsenals still operating in the European part of Russia, it played a key role in both the distribution and the logistical maintenance of Moscow's weapons.
Three of those eight arsenals had already been destroyed by 2024, which made this loss a considerable strategic blow to the Kremlin's military supply chain. The arsenal was designed to hold up to 264,000 tons of explosive material. Among the remains found after the explosion were 107 mm rockets for the Chinese-made Type 63 multiple rocket launcher, many of which local residents filmed scattered around the area, suggesting that part of the material was stored in the open and had been delivered recently. The catastrophe, or the attack, not only compromised Russia's logistical capability in the Ukrainian conflict but also raised (once again) serious doubts about the security of its own arsenals in wartime.

Images from the British report showing the site before and after the explosions

A self-inflicted blow. Now, after a study of all the images and of confidential intelligence from the United Kingdom's Ministry of Defence, it has been confirmed that the cause of the incident was not “external” but a combination of poor weapons-handling practices and negligent storage management by Russia. The British investigation is in fact backed by the statement of the Russian Defense Ministry itself, which has kept silent since the incident without offering further details, having attributed the disaster to a “violation of security requirements” in the handling of explosive materials. For the United Kingdom, the event is not an isolated case but the reflection of a long and documented trend of “Russian ineptitude in the treatment of its own ammunition”, although in this case it represents the largest self-inflicted loss of an arsenal since the start of the full-scale war in Ukraine.

Strategic installation. We have said it before.
The affected depot was a key installation for supplying the Kremlin's war effort on the Ukrainian front and, according to figures from the Ukrainian authorities cited by the United Kingdom, held hundreds of thousands of tons of ammunition, including ballistic missiles, air-launched munitions and anti-aircraft systems. Satellite images verified by the outlet Insider also revealed that more than a square kilometer of the complex was affected by the detonations, which points to massive and prolonged destruction, with multiple fires and a chain of secondary explosions that, according to videos shared on social networks, even reached nearby civilian areas.

Error pattern. Moreover, this is not the first time the 51st GRAU Arsenal has suffered incidents of this kind. Insider recalled that in June 2022, Russian state media reported a spontaneous explosion during loading and unloading operations that cost four people their lives. The pattern is consistent with the British accusation: a continuous chain of operational errors and insufficient security measures that turn critical facilities into vulnerable points within the Russian military apparatus. The lack of technical discipline and of effective prevention protocols has not only caused large material losses but has also compromised the safety of populated areas in wartime.

Consequences. The incident also, if you like, lends wings to Western rhetoric. The impact of this catastrophe goes beyond the material. The destruction of one of Russia's main ammunition depots not only weakens Moscow's immediate logistical capabilities in its offensive against Ukraine, but also reinforces an idea increasingly held among the “allies”: that of a military power corroded by structural failures, operational improvisation and a dangerous disregard for the most basic security standards.
Seen this way, in the middle of a prolonged war and with its supply lines under pressure, losing tens of thousands of tons of armament to internal negligence is a defeat that can be read in several ways.

Image | Maxar