Freepik has just launched an open-source model with a groundbreaking feature: licensed images

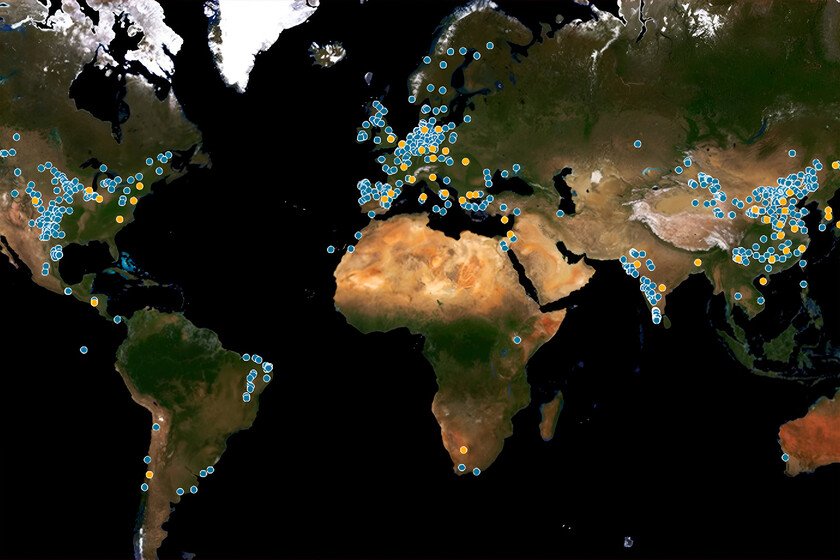

The Spanish startup Freepik is already one of the leading names in artificial intelligence, but its latest launch is especially significant. It is a new generative model of its own called F Lite, and it is a bold statement for several reasons, one of which stands out in particular.

Licensed images. Freepik, in collaboration with Fal.AI, has presented F Lite, a text-to-image generative model that stands out for being trained “exclusively with high quality images, with legal support and copyright protected” thanks to one important detail: they come from the Freepik library.

Inspired (partly) by DeepSeek. At Xataka we talked to Omar Pera (@ompemi), head of product at Freepik. He explained to us how the Chinese model DeepSeek served as inspiration by demonstrating that it was possible to create a small but very capable model with far fewer resources and much less data. F Lite is trained with “only 80 million images, compared to the more than one billion images usual” in competing image generation models.

Open source. As explained in the technical report that accompanies the launch, F Lite is also an open-source model with 10 billion parameters (tiny compared with the estimated 1.76 trillion of GPT-4) that was trained for two months on 64 NVIDIA H100 GPUs.

A sample of the result obtained with F Lite via Fal.AI. The car does not resemble a Renault 5, true, but at least in terms of photorealism the result is really decent.

Small but capable. As explained by Iván de Prado (@ivanprado), one of the people chiefly responsible for its development, F Lite is a decent model for generating certain types of images despite its small size. It also has its limitations: it can show anatomical defects, or give poor results in complex cases or when rendering text. The model is available in two versions on Hugging Face (regular, Texture) and on Fal (regular, Texture).
It is also possible to download it from GitHub and run it at home, for example via ComfyUI.

It is just the beginning. The launch of this model marks the start of an especially striking project that could gradually turn it into an alternative that rivals the most ambitious models, always backed by the argument of being trained with licensed images and being open source.

More options for users. For now, though, the model is not among those offered on the Freepik web platform, and it does not compete directly with models such as Flux, Mystic, or Imagen 3. “The bet and strategy does not change,” Pera pointed out; it consists of “optimizing the user offer and offering the best technology to solve the problem” and the need of each user.

Lawsuits everywhere. We have been seeing how artificial intelligence companies make indiscriminate use of copyrighted content to train their models. They usually do so without a license and without permission to use those works, and that has led to numerous copyright infringement lawsuits, especially against companies like OpenAI.

Freepik Enterprise. This announcement comes almost at the same time as the company's new business offering, called Freepik Enterprise. Omar Pera confirmed that the initial reception of F Lite among users of this new service has been remarkable. This is precisely the area where using a model like this is especially interesting, because “companies are covered” when using a model trained with licensed images.

Going after Adobe and other rivals. Pera also pointed out to us that Freepik does not compete on images: “we are going for professional use cases of marketing or design.” The company does not compare itself to a Getty or a Shutterstock, but rather “with the creative tools of Adobe, with Leonardo (part of Canva for the past year) or with the professional services of Midjourney.”

Image | Freepik

In Xataka | All the big AI companies have ignored copyright law. The amazing thing is that there are still no consequences