The industry became obsessed with training AI models, while Google prepared its masterstroke: inference chips

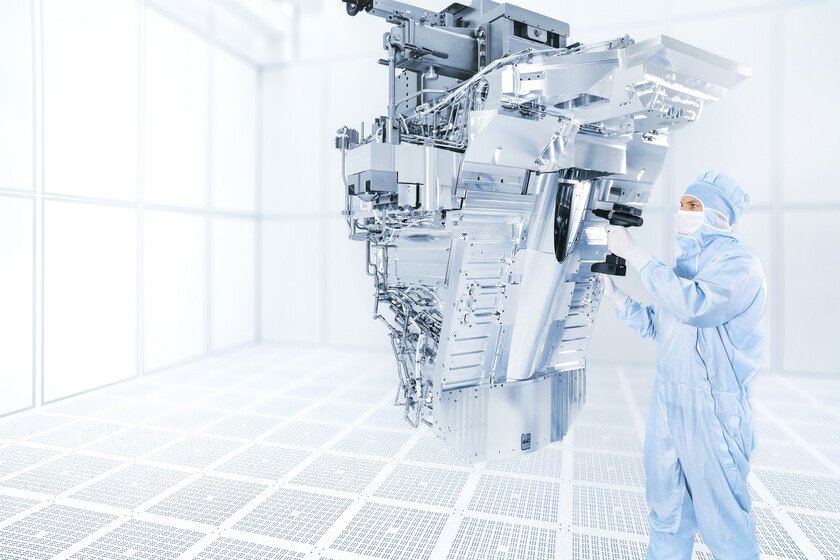

In recent years, what really mattered was training AI models to make them better. Now that those models have matured and training no longer scales as noticeably, what matters most is inference: making AI chatbots respond quickly and efficiently when we use them. Google has recognized this shift in focus and has chips built precisely for it.

Ironwood. That is the name of the new chips in Google's well-known family of Tensor Processing Units (TPUs). The company, which began developing them in 2015 and launched the first ones in 2018, is now reaping especially interesting rewards from all that effort: some very promising chips designed not so much for training AI models as for letting us use those models faster and more efficiently than ever.

Inference, inference, inference. These "TPUv7" chips will be available in the coming weeks. They can be used to train AI models, but they are aimed above all at "serving" those models to users. That is the other big pillar of AI chips, and the one people actually see: training a model is one thing, and "running" it so that it answers user requests is quite another.

Efficiency and power as its banner. The performance leap in these AI chips is enormous, at least according to Google. The company claims that Ironwood offers four times the performance of the previous generation in both training and inference, and that it is "the most powerful and energy-efficient custom silicon to date." Google has already reached an agreement with Anthropic that gives the latter access to up to one million TPUs to run Claude and serve it to its users.

Google's AI supercomputers. These chips are the key components of the so-called AI Hypercomputer, an integrated supercomputing system that, according to Google, lets customers cut IT costs by 28% and achieve a 353% return on investment over three years. In other words, Google promises that customers who use these chips will get back more than four times what they invested over that period: a 353% ROI means net gains of 3.53 times the investment, or about 4.5 times once the original outlay is counted.
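The ROI claim is easier to check with the arithmetic written out. This is a minimal sketch that assumes ROI is defined the conventional way, as net gain divided by the amount invested; the study behind Google's figure may define it differently. The function names and the 1,000,000 baseline are hypothetical, chosen only for illustration.

```python
def roi_to_return_multiple(roi_percent: float) -> float:
    """Convert an ROI percentage into total value returned per unit invested.

    Assumes the conventional definition:
        ROI = (value_returned - investment) / investment
    so value_returned / investment = 1 + ROI.
    """
    return 1.0 + roi_percent / 100.0


def cost_after_reduction(baseline_cost: float, reduction_percent: float) -> float:
    """Apply a percentage reduction to a baseline cost."""
    return baseline_cost * (1.0 - reduction_percent / 100.0)


if __name__ == "__main__":
    # Figures quoted in the article: 353% three-year ROI, 28% lower IT costs.
    multiple = roi_to_return_multiple(353)
    print(f"Value returned per unit invested: {multiple:.2f}x")

    # Hypothetical baseline IT spend, for illustration only.
    baseline = 1_000_000
    print(f"IT cost after a 28% cut: {cost_after_reduction(baseline, 28):,.0f}")
```

Under that definition, a 353% ROI works out to about 4.53 units of value for every unit invested, which matches the "more than four times" reading.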

The new Ironwood chips are also built to join forces on a large scale: up to 9,216 of them, nearly 10,000, can be combined in a single pod, which in theory removes the bottlenecks of even the most demanding models. Clusters of this kind are enormous. They provide up to 1.77 petabytes of shared HBM memory, and the chips communicate with one another at 9.6 Tbps thanks to the so-called Inter-Chip Interconnect (ICI).

More FLOPS than anyone. The company also claims that an "Ironwood pod" (a cluster of those 9,216 Ironwood TPUs) offers 118 times more FP8 ExaFLOPS than its closest competitor. FLOPS measure how many floating-point operations a chip can perform per second, so a higher figure means more raw capacity to run virtually any AI workload faster.

NVIDIA has more and more competition (and that's a good thing). Google's chips show how determined companies are to avoid depending too heavily on third parties. Google has all the ingredients to do so, and its TPUv7 is proof of it. It is not the only one: many other AI companies have long sought to build their own chips. NVIDIA's dominance remains clear, but the company has a small problem.

In inference, CUDA is no longer so vital. Once an AI model has been trained, inference plays by different rules than training. CUDA support is still a relevant factor, but it matters much less for inference, where the goal is to get answers as fast as possible. Here the models are "compiled" so they can run optimally on the target hardware. That could cause NVIDIA to lose ground to alternatives like Google's.

In Xataka | When you're OpenAI and you can't buy enough GPUs, the solution is obvious: make your own
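The Ironwood pod figures are easier to grasp when broken down per chip. This is a minimal sketch that assumes the 1.77 PB of shared HBM is split evenly across all 9,216 chips and that the units are decimal (1 PB = 10^15 bytes, 1 GB = 10^9 bytes); the helper names are hypothetical.

```python
PETABYTE = 10**15  # assumption: decimal (SI) petabyte
GIGABYTE = 10**9   # assumption: decimal (SI) gigabyte
BITS_PER_BYTE = 8


def per_chip_memory_gb(total_pb: float, chips: int) -> float:
    """Divide a pod's total HBM capacity evenly across its chips, in GB."""
    return total_pb * PETABYTE / chips / GIGABYTE


def terabits_to_terabytes(terabits_per_second: float) -> float:
    """Convert a link speed from Tb/s to TB/s."""
    return terabits_per_second / BITS_PER_BYTE


if __name__ == "__main__":
    # Figures quoted in the article: 1.77 PB of HBM, 9,216 chips, 9.6 Tbps ICI.
    print(f"HBM per chip: {per_chip_memory_gb(1.77, 9216):.1f} GB")
    print(f"ICI bandwidth: {terabits_to_terabytes(9.6):.1f} TB/s")
```

Under those assumptions, each chip contributes roughly 192 GB of HBM to the pod, and 9.6 Tbps corresponds to 1.2 terabytes per second.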