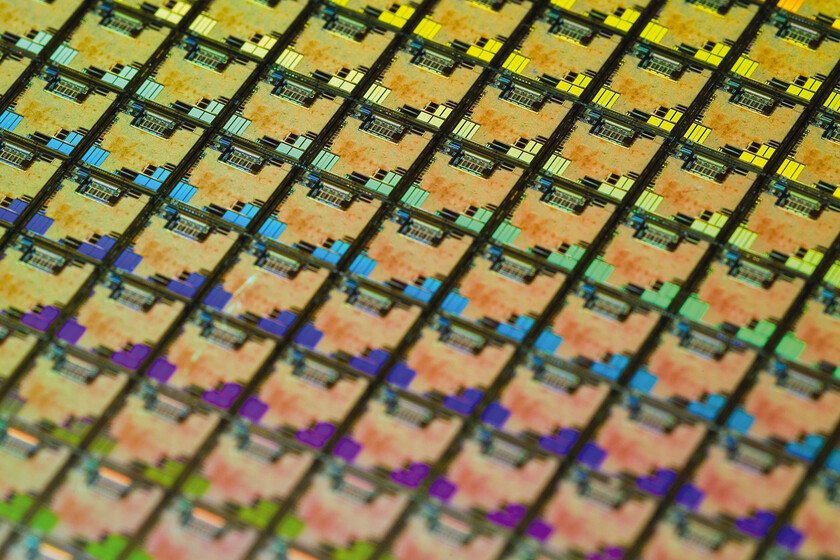

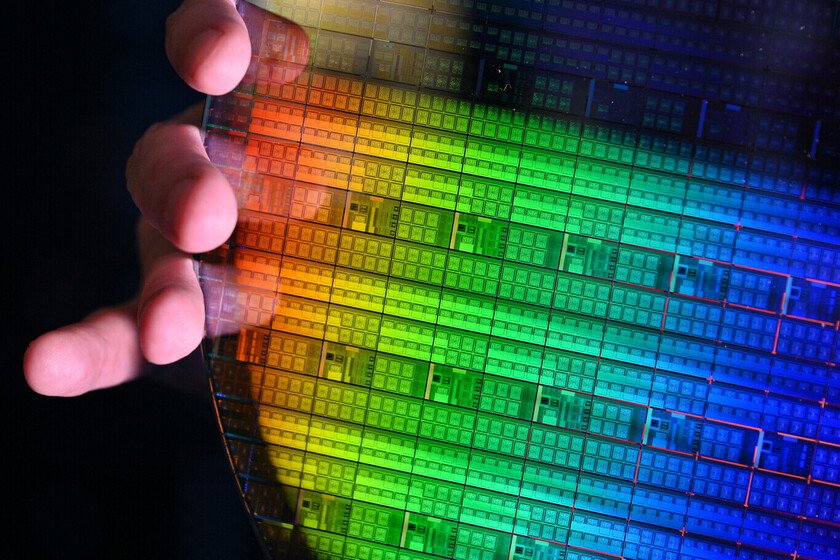

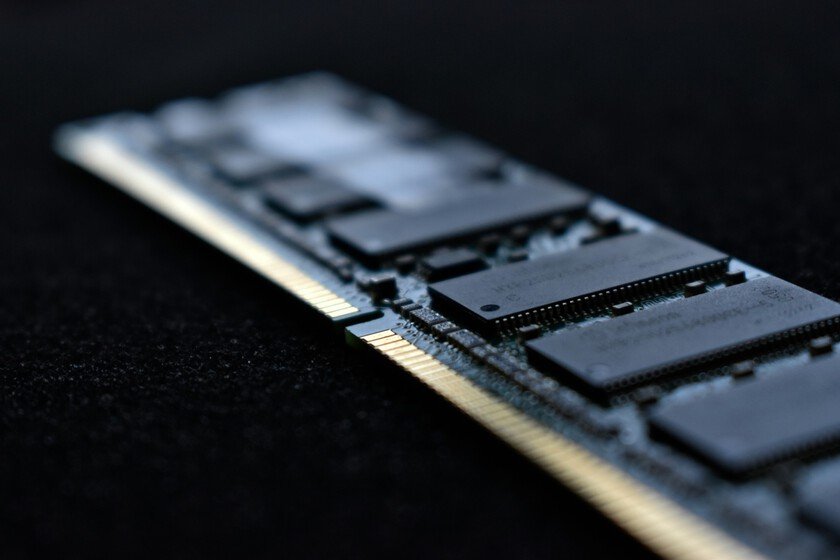

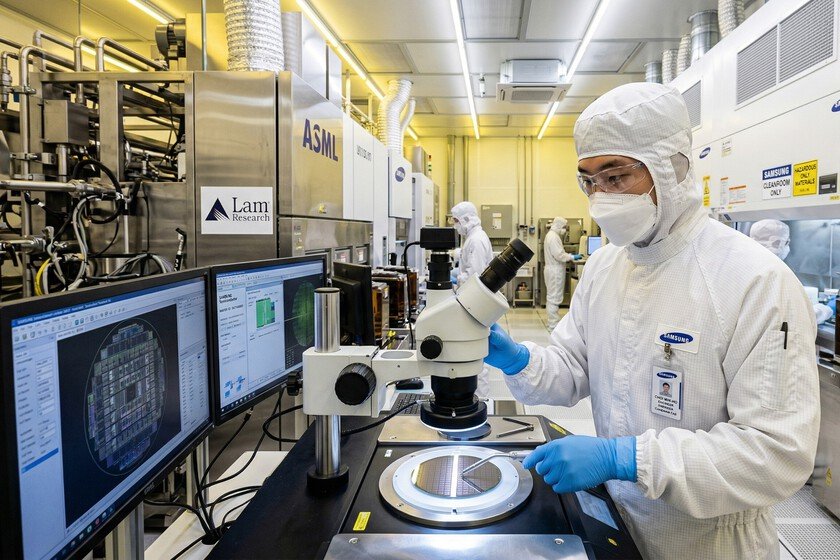

Companies that make “boring” chips are rolling in money

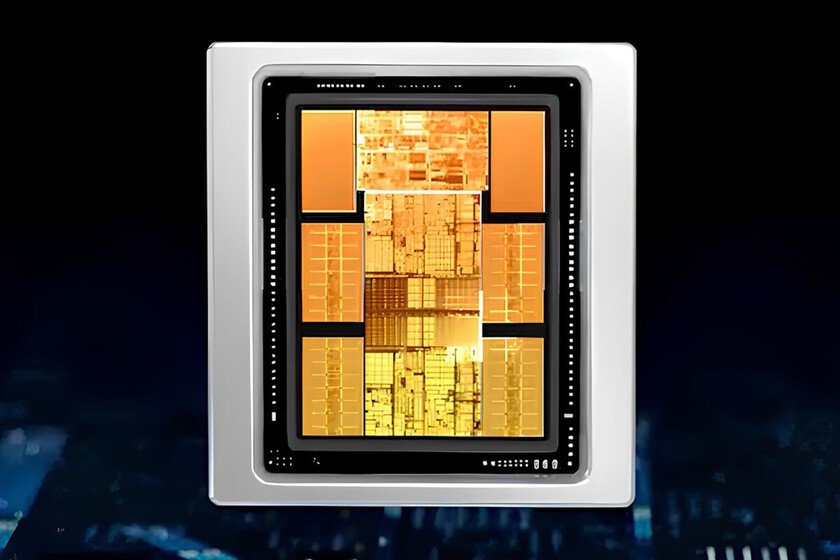

In any sports team there are starters and substitutes. The headlines usually go to the big stars, who capture all the attention. The substitutes are the ones who step in and do the job when needed, without making much noise. That same universe of ‘Zidanes and Pavones’ exists in the world of computer components: if the chips of Intel, Nvidia, AMD or TSMC are the Zidanes, the Pavones are, indisputably, the chips of Texas Instruments. And the numbers add up.

Texas Instruments. It is one of the clearest examples of how profitable it can be to live outside the hype. Texas Instruments is the ‘Paco Bearings’ of technology, a company that has spent decades manufacturing chips that sit inside a multitude of devices but never make noise with their specifications. They are very specific chips that carry out very specific tasks. In February we reported that the company was opening its wallet to acquire firms like Silicon Labs (an American company that also makes ‘boring chips’); now it is time to look at the results. First-quarter revenue reached 4.8 billion dollars, 19% more year-on-year and above expectations. And what grew the most year-on-year was, precisely, chips for data centers.

Boring chips in AI. Think of the chips in your washing machine, your refrigerator, a smart speaker or even wireless headphones. Texas Instruments also makes other types of chips: those that control power, isolate signals and manage faults. And those are the ones striking gold in the age of AI. GPUs and CPUs are the star chips of a data center, but others are needed to do the most basic work: power, control, interfaces and protection. Texas Instruments manufactures and sells these chips, and they are what allow a GPU or CPU to run stably in a rack. Put simply, if Nvidia or AMD supply the ‘brains’ of the data center, Texas Instruments provides the nervous system.
And this is tremendously profitable: in the breakdown by segment, Texas Instruments’ industrial chip business grew 30% year-on-year, but its data center business grew 90% and now accounts for approximately 11% of the company’s revenue.

The Arm case. Another interesting case is what is happening with processors. AI needs are shifting from GPU power for training to CPU efficiency for inference. In the era of agentic AI, it is estimated that more CPUs will be needed in data centers, in what has already been dubbed the ‘CPU renaissance’. Intel is adapting to it, and the market is rewarding a processor veteran: Arm Holdings. On March 24 it presented AGI CPU, Arm’s first proprietary processor for data centers. It is optimized precisely to run large inference workloads, such as the aforementioned agentic AI. Manufactured on a 3-nanometer TSMC process, it has 136 cores per chip and promises double the performance of conventional x86 processors. It was also co-developed with Meta, one of the companies most interested in ending its dependence on Nvidia. Market confidence is at its peak and the share price has shot up to all-time highs. In fact, the share-price charts of Arm and Texas Instruments look extremely similar over the last five years.

The memory makers, doing their own thing. In parallel, there are other companies that do not build the processors that ‘run’ AI, but rather the memory for the most powerful GPUs on the market. These are invisible chips too, but unlike those of Texas Instruments, their presence in data centers is conspicuous for a very simple reason: these are the same memory companies that have stopped making memory for consumers and now focus almost all of their production on data centers. SK Hynix recorded 405% growth in operating profit in the first quarter of the year, driven by HBM memory and DRAM for AI. Samsung tells a similar story, earning more in three months than in all of last year.
The question is the same as in recent months: how long this growth will last, whether investment in data center equipment has a ceiling, and what will happen when that ceiling is reached.

Images | Victorgrigas, Raimond Spekking