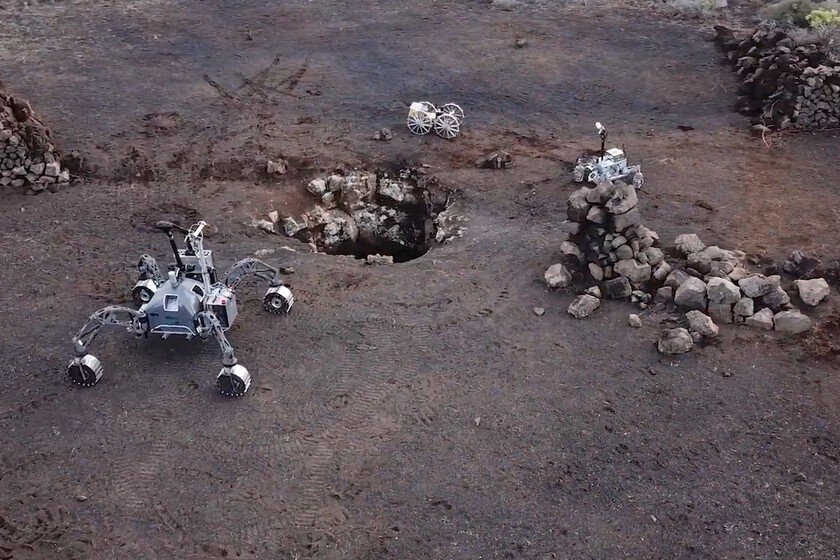

Mova Rover X10, the “anything you can do, I can do better” of pool robots

Mova has just landed in Spain with a whole range of devices. One of them is the Mova Rover X10, a pool cleaning robot that costs more than 2,000 euros but is much more than a ‘Roomba’ for the pool: it is a cleaning submarine. Last year we reviewed the Dreame Z1 Pro (Dreame is Mova’s sister company), and although it made pool maintenance much easier for me, some things could have been better, such as the control system and charging. Now Mova arrives with the “anything you can do, I can do better” of pool cleaning robots. Let’s go through its main features in detail.

Mova Rover X10 technical sheet

| | Mova Rover X10 |
|---|---|
| Dimensions and weight | 540 x 460 x 320 mm, 15.8 kg |
| Mapping and navigation | 29 sensors; surface and wall mapping; real-time control; dynamic obstacle avoidance |
| Suction power | Up to 38,000 L/h |
| Cleaning surface | Up to 500 m² |
| Brushes | Two central rollers, two side rollers |
| Filter | 3-micron particles, 5-liter capacity |
| Control | Mobile app |
| Battery | 6 hours of floor cleaning, 12 hours of surface cleaning, 6.5 hours to charge, wireless charging |
| Price | 2,099 euros |

Design with multiple brushes

There is not much room for innovation in pool cleaning robots. It is like robot vacuum cleaners: the design is almost standardized because it is ideal for fitting the brushes, mops, navigation system and tank. The same happens with pool robots, although with some extra elements such as the propulsion system. Mova, however, has gone all out: it decided that two central brushes for the pool floor were not enough and added two smaller ones on the sides. These make a first pass by scrubbing along the wall, but they also have another function that we will get to later.

A key part of any cleaning robot is its navigation system: the more complete it is, the fewer passes the robot makes over the surface and the more efficiently it cleans.
Mova calls its system ‘360-degree AquaScan’, but it basically consists of a front sensor, a top sensor and a side sensor that tell the robot at all times both its distance from the walls and whether there are any obstacles. The upper compartment houses the reusable filter, and Mova claims that a single charge of its 15,000 mAh battery provides six hours of floor cleaning. The robot is compatible with fiberglass, tile, concrete, marble, stainless steel, ceramic and PVC pools.

Submarine

So far, a “conventional” cleaning robot. However, it has three truly wild features that we can’t wait to try. The first is the propulsion system. Pool cleaning robots use jet propulsion, which lets them both move forward and stay pressed against the floor and walls. The Mova, however, adds four more propellers at its base. What for? To ‘jump’ up to a different level of the pool. Instead of climbing up along the wall, it simply pushes itself off and rises directly.

In short, it is an underwater ‘rover’, and it also solves a problem that some of its competitors have: controlling the robot while it is underwater. Other robots have an app that offers very intuitive control and mapping, but once they are submerged, the connection is lost. Some include a remote control for basic commands, but depending on the water conditions, it may work… or not. The Rover X10’s answer is a beacon that connects over Wi-Fi and keeps us in constant communication with the robot. If we want to pause a job or change the plan, we simply do it from the app, without having to walk to the edge of the pool and try to get the remote control to respond. Naturally, it also lets us follow the work in real time.

Surface vacuum cleaner

Those two side brushes we mentioned earlier are this model’s final trick, because it is not just a pool floor cleaner but also a surface cleaner. It has a front nozzle that works like a home robot vacuum cleaner: it sucks up debris both as it moves forward and from what the side brushes sweep toward it. According to the manufacturer, it offers 12 hours of battery life in this mode.
For charging, instead of a cable, it uses an IPX8-certified wireless charging base.

Mova Rover X10, price and availability

Given the features of the Mova Rover X10, it’s time to talk about price. As you would expect, packing in all this technology does not come cheap: the device is now available for 2,099 euros on Mova’s website. In the end, as with similar products such as robot lawnmowers, it all comes down to how much you want to automate unpleasant chores.