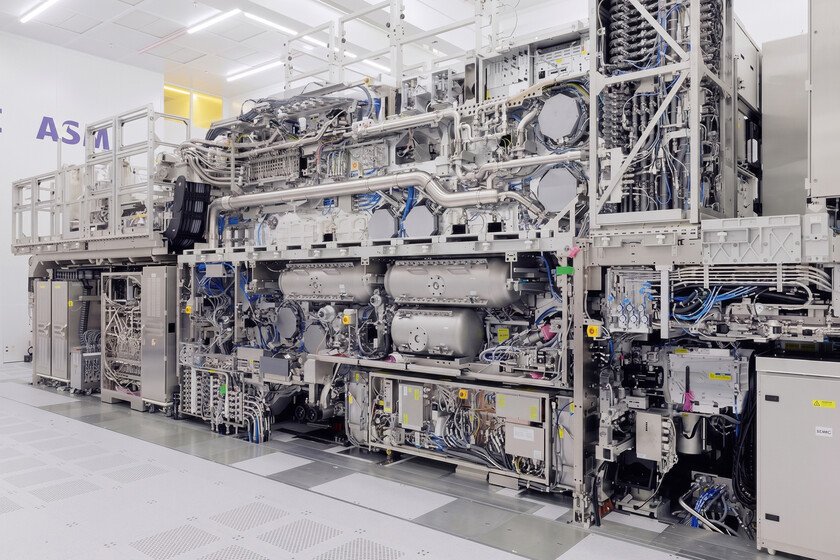

TSMC will not use ASML’s High-NA machines for now, and it has a compelling reason not to

On April 23, TSMC made a very important strategic decision official: it has postponed the adoption of ASML’s high-numerical-aperture (High-NA) extreme ultraviolet (EUV) lithography machines until 2029. These are the most advanced chip-manufacturing machines that the Dutch company currently has in its portfolio, and TSMC’s announcement immediately sent ASML’s shares down 3.3%. That is no surprise: the Taiwanese chipmaker is ASML’s largest customer. In 2025, 23.9% of the Dutch company’s total sales came from TSMC.

The main reason TSMC has decided not to use ASML’s High-NA EUV machines in the short term is strictly economic. Each one costs around 350 million euros, and a single cutting-edge semiconductor plant requires several dozen of them. TSMC considers them currently too expensive for the manufacture of advanced chips to be profitable. Interestingly, Intel, Samsung and SK Hynix are already adopting High-NA technology.

This decision by TSMC brings great technical challenges

As one might expect, TSMC’s move was not improvised. In fact, over the past two years several of the company’s executives have publicly expressed doubts about adopting ASML’s High-NA equipment in the short term. In January 2024 CC Wei, the current president and CEO of TSMC, surprised us with this statement during a meeting: “We are studying it carefully, evaluating the maturity of the tool and examining its costs. We always make the right decision at the right time in order to offer the best service to our clients.” A few weeks earlier Szeho Ng, an analyst at China Renaissance, had predicted that TSMC would not use ASML’s High-NA EUV equipment until it introduced its 1 nm integration technology.
Last week it was Kevin Zhang, TSMC’s deputy chief operating officer, who clarified something very important: “I am amazed by our R&D team. They continue to find ways to drive technological development without using ASML’s High-NA EUV equipment. Someday we may have to use them, but right now we can continue to reap the benefits of current EUV technology without moving to High-NA which, as we all know, is extremely expensive.”

In 2029, TSMC intends to have its A12 and A13 integration technologies ready for large-scale production. Both are derivatives of its A14 process. From a commercial point of view, they will be the company’s first 1.2 nm and 1.3 nm technologies. They will use GAA (Gate-All-Around) transistors and NanoFlex Pro technology. The latter will allow chip designers to use fast cells for the critical parts of a GPU that need speed, and dense or efficient cells for the rest, optimizing the chip area down to the last millimeter.

What we still do not know is which technical solutions TSMC’s engineers will implement to make it possible to manufacture 1.2 nm and 1.3 nm integrated circuits using ASML’s current EUV equipment. It is only a guess, but it seems unlikely that they will resort to multiple patterning, because this procedure compromises both the yield per wafer and the cost of the chips. TSMC would lose competitiveness. One last note: multiple patterning, broadly speaking, consists of transferring the pattern to the wafer in several passes in order to increase the resolution of the lithographic process.

Image | ASML

More information | Innovation Origins