NVIDIA has lost hope in China, which is why it has started production of its next-generation GPUs for AI

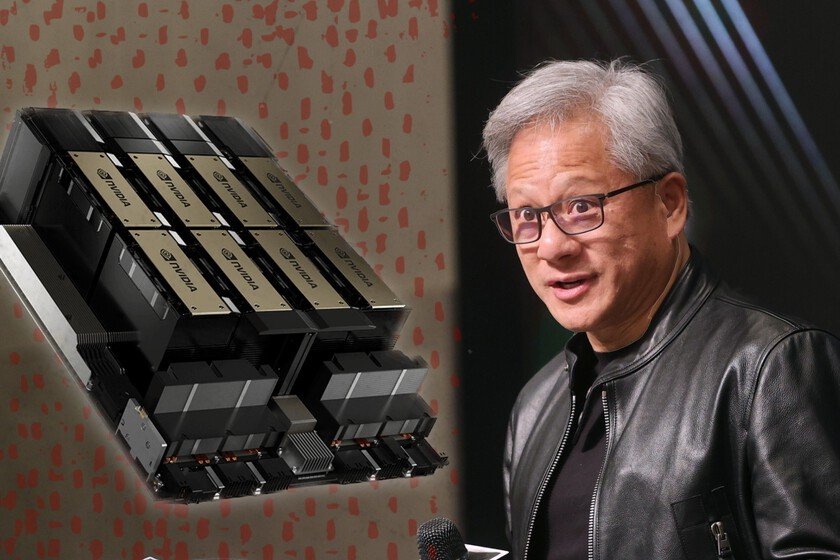

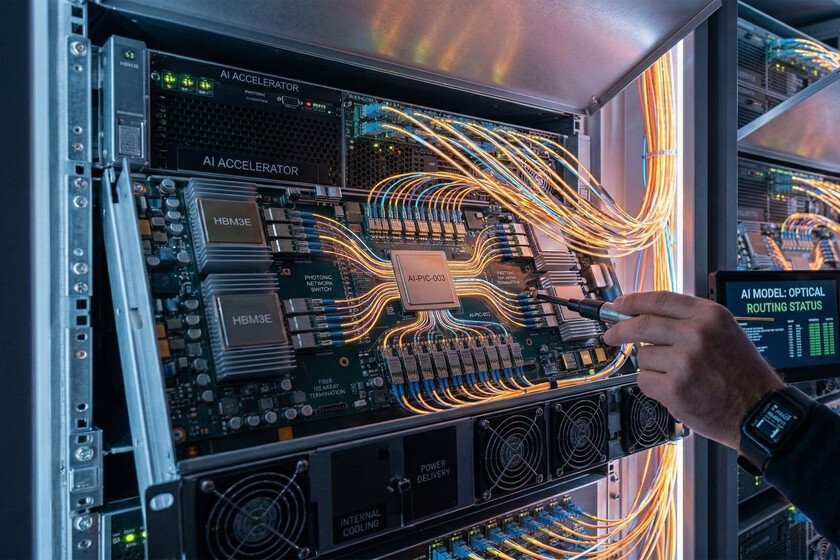

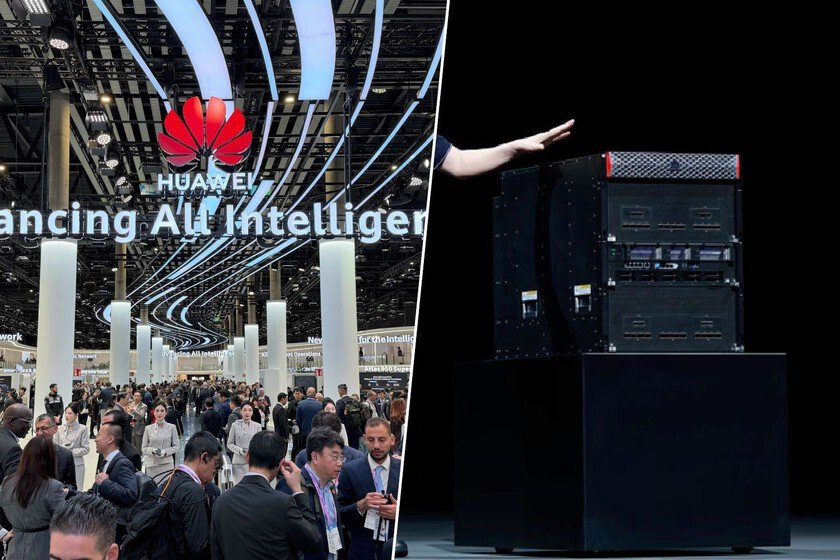

NVIDIA faces a crucial year in 2026. It has become one of the largest strategic investors in the AI ecosystem, with dozens of billion-dollar investments in other companies, models, infrastructure and robotics. But, in the end, it is a company that supplies chips and, so far, the H200 has set the tone. According to a report by the Financial Times, that's over. NVIDIA has just ordered TSMC to start mass manufacturing Vera Rubin, its next-generation hardware for AI. The reason? It has lost all faith in China.

In short. With the entire AI industry looking to the future, and NVIDIA having Vera Rubin on the starting grid, it was strange that the company kept investing so much in keeping TSMC working on a chip as old as the H200. Although it has been around for a while, it has established itself as unbeatable in the industry thanks to its price-to-performance ratio, so these are the chips on which the AI empire has been built. However, time passes and NVIDIA needs to move. Data centers need more power, new models are more demanding, and the spearheads of the software sector, such as OpenAI or Google, have demanded new solutions. According to two sources close to NVIDIA's plans who were consulted by the financial newspaper, the company has grown tired of “waiting in limbo” and has begun to accelerate the delivery and deployment of Vera Rubin.

Incomparable. Unsurprisingly, TSMC is going to be in charge. The Taiwanese foundry has reportedly already been asked to begin diversifying its production line to start manufacturing the new chips. And if you're wondering why it's not enough for Google or OpenAI to simply buy more H200s, the answer is that the two products have nothing in common. The H200 is a more classic data center GPU. It is the configuration that AI and server computing companies have been working with for years.
Vera Rubin, however, is a paradigm shift made up of new CPUs and new GPUs, designed so that everything works as a single rack-scale accelerator. It offers not only more power, but also NVIDIA's latest software and hardware additions and something very important: incredible bandwidth. The higher the bandwidth of such a system, the more data it can handle simultaneously. This implies greater efficiency when training, but also a lower cost in inference. It is not an update; it is a platform change designed for models with trillions of parameters.

Little faith in China. To put it more simply, if the H200 is like a “super powerful graphics card”, Vera Rubin is like a mini data center in itself. And if you're wondering why production didn't start sooner, the reason is… China. Jensen Huang, CEO of NVIDIA, has been ‘fighting’ with Washington for months to get it to loosen its grip in the trade and technology war between the US and China. Trump ended up agreeing, and Huang commented earlier this year that the company had “turned on” all its production lines again to supply the very high Chinese demand.

The problem is that this demand did not arrive. At least, it was not as high as Huang expected. In the earnings presentation a few days ago, NVIDIA's chief financial officer commented that “although small quantities of H200 for Chinese customers were approved by the US government, we have not yet generated any income. And we do not know if imports to China will be allowed.”

We have already covered the problem: the US was allowing NVIDIA to sell its GPUs, but the Chinese government did not seem so convinced. China's main Big Tech companies were demanding NVIDIA solutions, arguing that they need them to keep up with what their American rivals are doing, but the ball was in the court of the government and customs.
China is promoting an AI that is different from that of the US, more focused on low costs and rapid adoption by customers, and at the same time it wants to build its own hardware network with companies like SMIC or Huawei, which already has its own supercomputer for AI.

A complicated swerve. The Financial Times points out that the president of China, Xi Jinping, and the president of the United States will meet at the end of March to discuss export controls. The problem is that, according to its sources, even if the barrier is lifted completely, and not just for certain companies, and China can buy H200s en masse, turning TSMC's ship around so that it starts producing H200s again would be complicated. It is not as simple as pressing a button and switching from producing one thing to another. If this situation occurs, “NVIDIA would take up to three months to reallocate or add capacity to the supply chain to produce H200.”

(Image: one of Vera Rubin's PCBs.)

Knock-on winner. What is clear here is that NVIDIA is not going to lose out from the operation. Huang already argued that the United States could not miss the opportunity to take a slice of a multi-billion dollar market (because the US let the cards be sold… with a 25% tariff), but whether it is the Chinese or the Western industry, it is NVIDIA that they will keep buying the H200 from and, ‘shortly’, Vera Rubin. And the knock-on winner in this operation is Samsung. Of the three companies that manufacture memory (and that have fueled the RAM and SSD crisis we are in), Samsung is the one that has completed its new-generation HBM4 memory. It is the one that has met NVIDIA's high standards and the one that is already being mass manufactured for integration into Vera Rubin systems.

All eyes on NVIDIA. As we said, NVIDIA has the entire industry at its feet.
Google, xAI and Meta are working on their own chips, but together with Microsoft, Amazon Web Services, OpenAI, Mistral and Anthropic they are some of the companies that …