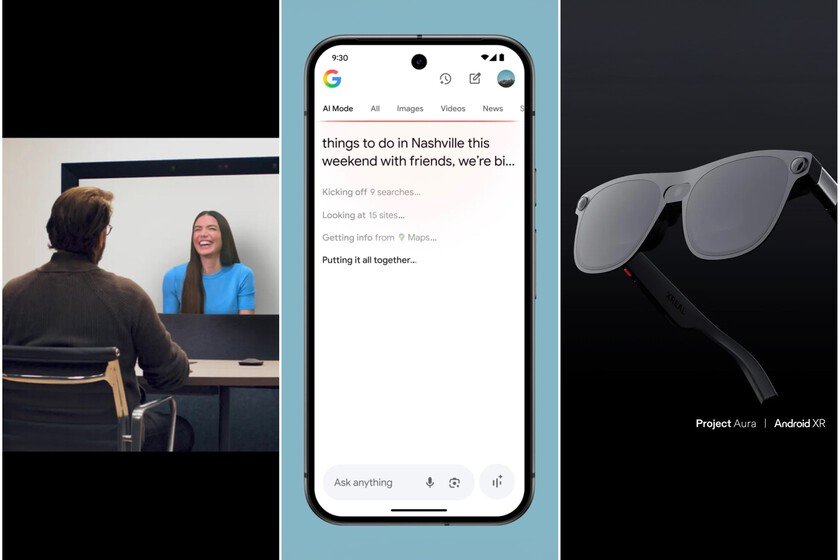

AI Mode, Project Beam, Veo 3, the Project Aura glasses, Jules and everything presented at a Google I/O 2025 loaded with ambition

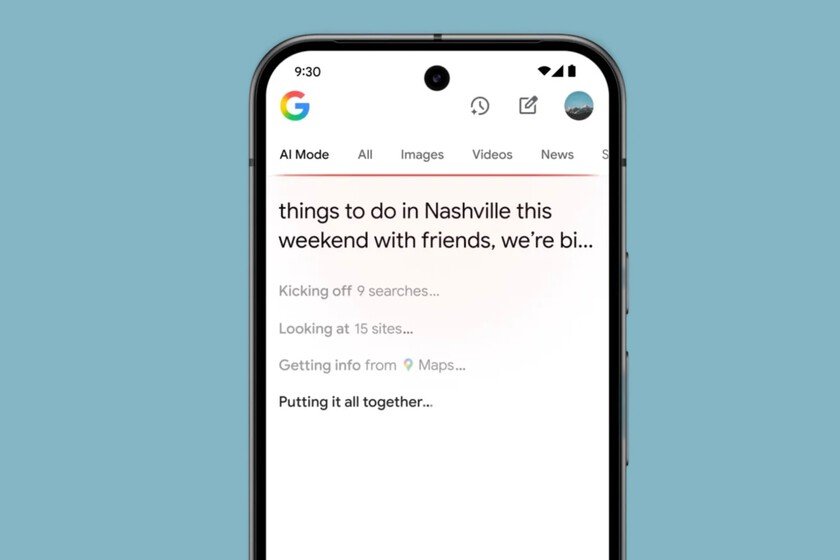

Google has brought out the heavy artillery in the war to lead the development, and the business, of artificial intelligence. The American giant did so at its annual developer conference, which was less a conference than a show of strength: a showcase for some of its most innovative advances. Below, we review all the products Google presented this Tuesday, May 20. If you want to dig deeper into any of them, you will find a link with all the information next to each name.

Gemini Ultra, Veo 3 and Imagen 4: a subscription for those who want everything

Gemini Ultra is Google's new, most complete artificial intelligence subscription. It costs $249.99 per month and, for now, is only available in the United States. It includes access to tools such as the video generator Veo 3, the Flow editing app and the Deep Think mode of Gemini 2.5 Pro, which has not yet been officially released. Subscribers also get improvements in NotebookLM and Whisk, up to 30 TB of cloud storage, YouTube Premium and access to the Gemini chatbot directly from Chrome. Some of the most advanced features are powered by the technology of Project Mariner, which provides "agentic" capabilities.

Deep Think: an AI that takes its time to respond better

Deep Think is a new reasoning mode for the Gemini 2.5 Pro model that lets the AI consider several possible answers before settling on one. It aims to improve accuracy on complex tasks and advanced benchmarks. It is currently available only to a small test group through the Gemini API. Google says it is carrying out safety evaluations before launching it publicly.

AI Mode and Search Live: this is how Google wants to redesign search

AI Mode is a new experimental feature for Google Search that lets you ask complex, multi-part questions through an AI-based interface.
It launches this week in the United States and can handle sports and financial data and offer options such as a virtual "try-on". Search Live will arrive over the summer, a feature that will let you ask questions based on what the phone's camera detects in real time.

Gemini in Chrome and detection of synthetic content

Gemini is integrated into Chrome as a browsing assistant that helps you understand the content of web pages and carry out tasks. Gmail adds personalized smart replies and a new feature for cleaning up the inbox. Google has also launched SynthID Detector, a verification system that uses invisible watermarks to identify AI-generated content.

Beam: 3D video calls with simultaneous translation

Beam, formerly known as Project Starline, turns video calls into almost face-to-face conversations thanks to a six-camera array and a light field display. It offers millimeter-precise head tracking and video at 60 frames per second. It includes real-time translation in Google Meet, preserving the voice, tone and expressions of the original speaker.

Jules: Google's agent for programming without touching the keyboard

Jules is Google's new assisted programming agent, designed to compete with platforms such as Cursor, Windsurf or Codex. It can generate tests, update dependencies, write audio changelogs and fix bugs while the user keeps working on other things. It works without plugins or additional installations and is available in public beta for US users.

Android was also present at the event

Android debuts new tools for finding lost phones and objects, as well as a new design language called Material 3 Expressive. Google showed its new glasses live, developed with Xreal and based on Android XR. The demo included voice interaction with Gemini, simultaneous translation and real-time information overlays. The project is called Project Aura and seeks to bring Android to the XR world with a practical, no-frills approach.
Images | Google

In Xataka | Smart glasses do not have to be a bulky contraption. Google sees that more clearly than ever