Making cell towers mini data centers for AI

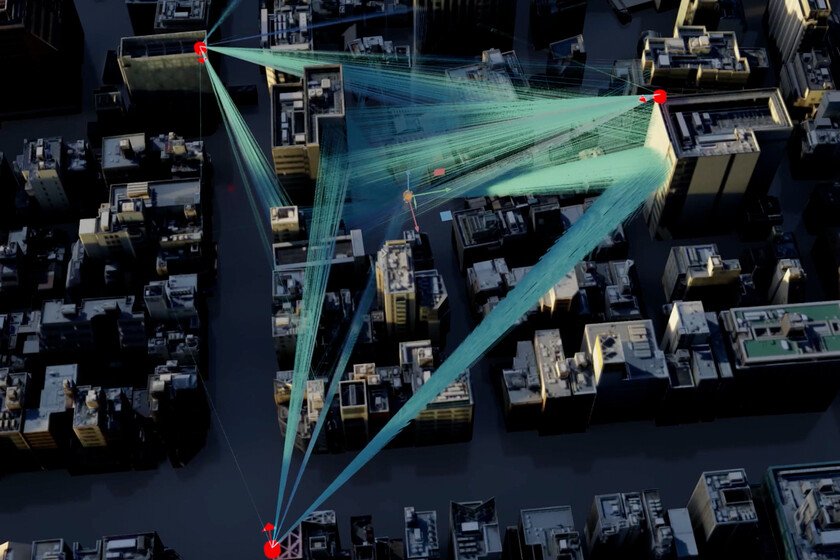

A few days ago we learned that NVIDIA had invested $1 billion in Nokia, taking a 2.9% stake in the Finnish company. The check itself is striking, since for many people Nokia had been off the map for years, but the move makes perfect sense: it is the Western response to the Chinese technology companies that have been investing in 6G deployment for years. And with NVIDIA behind them, mobile base stations can do much more than provide coverage to millions of devices: they can become small distributed data centers for AI.

The plan behind the investment. NVIDIA and Nokia are not just designing equipment for mobile networks. They are redefining what a cell tower is. The idea is that each base station (the towers and small installations we see on buildings and streets) becomes a computing node able to run AI workloads in real time. “An AI data center in everyone’s pocket”, according to Justin Hotard, CEO of Nokia. The key is to bring processing closer to the user in order to cut latency, one of the most frequent problems in AI applications that require real-time processing, such as instant translation, augmented reality or autonomous vehicles.

Without latency, everything changes. When we ask an AI to translate a conversation or analyze live images, every millisecond counts. Sending that data to a distant server, processing it, and returning the result introduces a significant delay that mars the final experience. The most logical solution is to decentralize: to have the AI live close to the user, in the telecommunications infrastructure itself. To that end, NVIDIA will contribute chips and specialized software, while Nokia will adapt its 5G and 6G equipment to integrate that computing capacity. As announced, the first commercial tests will begin in 2027 with T-Mobile in the United States.

The Nokia effect on the stock market.
Nokia shares shot up 21% after the news broke, reaching highs not seen since 2016. NVIDIA and OpenAI have become the King Midas of technology: everything they touch goes up. The investment is also a boost to the strategy of Hotard, who since his arrival in April has accelerated Nokia’s shift towards data centers and AI. The company, which already acquired Infinera for $2.3 billion to strengthen its position in data center networks, is now positioned as the only Western supplier capable of competing with Huawei across the complete range of telecommunications infrastructure.

Another space race. While Europe and the United States accelerate their 6G plans, China has been investing aggressively in this technology for years. This alliance between NVIDIA and Nokia is a somewhat late response, but a necessary one. Jensen Huang, CEO of NVIDIA, explained in his speech in Washington that the goal is “to help the United States bring telecommunications technology back to America.” It is not just about infrastructure, but about strategic control. Whoever dominates this network of brains distributed throughout cities and roads will control the AI applications of the future.

And now what? The consulting firm McKinsey estimates that investment in data center infrastructure will exceed $1.7 trillion by 2030, driven by the expansion of AI. Nokia and NVIDIA want their piece of the pie, but they are also betting on a structural change: that mobile networks stop being mere data pipes and become intelligent computing platforms. It remains to be seen whether this model works commercially and whether operators are willing to upgrade their infrastructure.

Cover image | NVIDIA