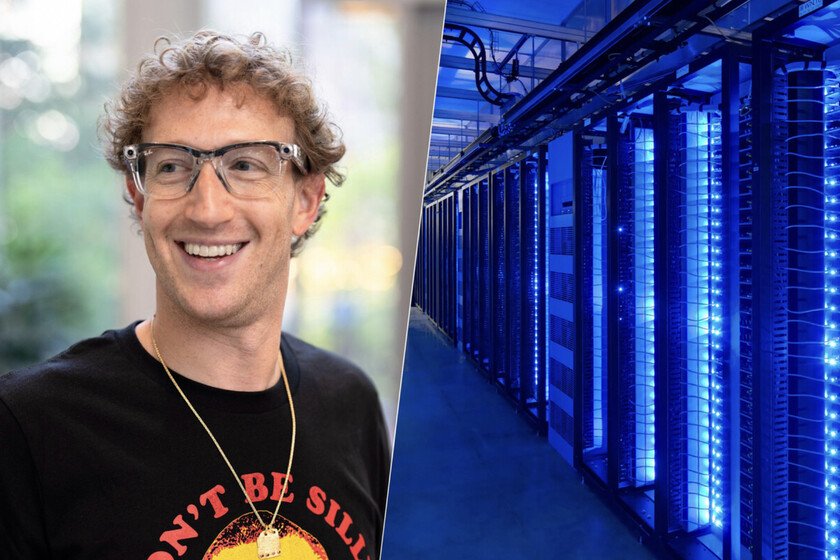

Data centers are so important that Meta has spent millions on advertising to change our perception of them

Meta spent $6.4 million on an advertising campaign between November and December of last year to convince the American public of the benefits of its data centers, according to the New York Times. The ads, aired in eight state capitals and Washington, DC, featured idealized images of American towns revitalized by these facilities.

There is growing public opposition to the installation of data centers dedicated to AI, largely because of their heavy consumption of basic resources such as electricity and water. And of course, the public first has to be convinced that these facilities are essential so that Meta and the other big technology companies can continue their operations.

Meta's campaign. According to the newspaper, the ads featured emotional stories about Altoona (Iowa) and Los Lunas (New Mexico), two locations where Meta operates data centers. With guitar music and shots of farms and football fields, the videos promised jobs and prosperity. “We are bringing jobs here, for ourselves and for our next generation,” the voiceover said. According to Michael Beach, CEO of Cross Screen Media, Meta “could have purchased these ads with the goal of influencing political decisions and reaching legislators.” Ryan Daniels, a Meta spokesperson, told the NYT only that the company pays the full cost of the energy used by its data centers, and did not comment on the advertising campaign.

Meta is not alone. As the NYT reports, Amazon is funding a similar campaign in Virginia through Virginia Connects, a nonprofit created by the Data Center Coalition. The Financial Times adds that other operators such as Digital Realty, QTS and NTT Data are also stepping up their efforts to defend the construction of new facilities.

Resistance. In the United States, public opposition has led to the cancellation of multimillion-dollar projects in Oregon, Arizona, Missouri, Indiana and Virginia.
Democratic Senator Chris Van Hollen told the NYT that the issue became “a priority on Capitol Hill” when his constituents began complaining en masse about their electricity bills. According to the newspaper, Van Hollen introduced a bill this month to regulate the energy consumption of data centers. Even President Donald Trump weighed in on the matter: “The big tech companies that build them must pay their own way,” he wrote a few weeks ago on Truth Social.

The electricity bill. Data centers have become critical infrastructure for the development of artificial intelligence, but social tension over their installation is growing. In October, Bloomberg reported that over the last five years the wholesale price of electricity in areas near large concentrations of data centers in the United States had risen by up to 267%. In Baltimore, residents paid $17 per megawatt-hour in 2020; in 2025 that figure stands at $38. The outlet's analysis also found that 70% of the locations where electricity price increases were recorded were less than 80 kilometers from data centers with significant activity. Bloomberg estimates that the energy demand of these facilities in the United States will double by 2035, the largest increase since the 1960s.

The situation in Spain. Our country is also experiencing a boom in data center construction. The Community of Madrid, paradoxically the region with the largest energy deficit in Spain, hosts a good part of these projects and is expected to reach a capacity of 1.7 gigawatts in 2030.
The consulting firm CBRE pointed out in a report that “there is no investor, operator or large technology company that does not have in its strategic plans to establish its data center project in the Iberian market.” Madrid, together with Barcelona, already competes with cities such as Milan, Zurich or Berlin, although it is still far from the leading European group in power capacity, made up of Frankfurt, London, Amsterdam, Paris and Dublin.

What awaits us. According to Bloomberg, forecasts indicate that data centers will consume more than 4% of the world’s electricity in 2035. If these facilities were a country, they would rank fourth in energy consumption, behind only China, the United States and India. Meanwhile, big technology companies are already exploring solutions such as small modular nuclear reactors (SMRs) to power their facilities, or sending data centers into space.

Cover image | Mark Zuckerberg, Meta