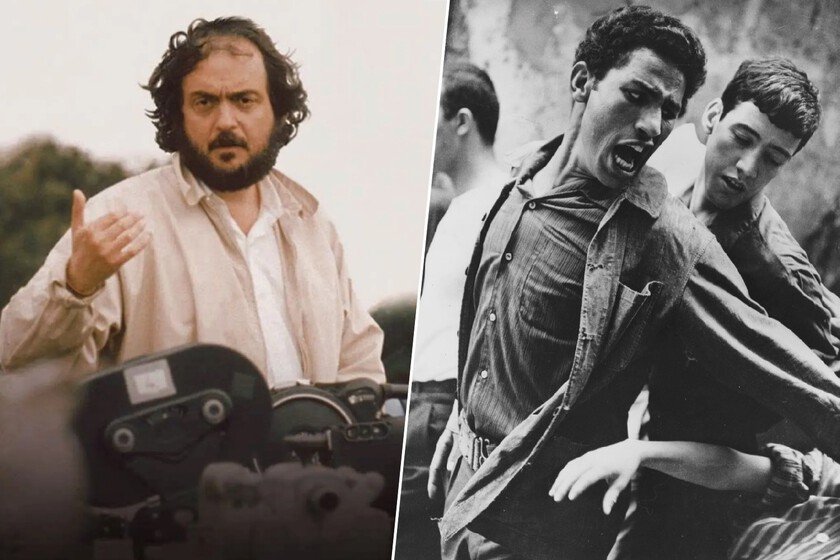

Kubrick was obsessed with this masterpiece of war cinema

Stanley Kubrick, one of the most demanding directors in the history of cinema, was unable throughout his life to stop talking about one film, to the point that he “spoke enthusiastically about it until shortly before his death.” It was not one of his own, but the work of an Italian filmmaker practically unknown to the general public. It was shot in 1966 with non-professional actors on the streets of Algiers: ‘The Battle of Algiers’.

Says someone who would know. Anthony Frewin worked as Kubrick’s personal assistant between 1965 and 1999, except for short interruptions in the 1970s. No one knew the director’s cinematic tastes better than he did, as shown in an interview in which he states that Kubrick “was generally very disappointed with Hollywood cinema.” What interested him was something else: international directors who questioned the conventions of the medium and sought new forms of expression.

His favorite. Among all those films, one occupied a place apart. According to Frewin, Kubrick was “excited” for decades by Gillo Pontecorvo’s ‘The Battle of Algiers’. When Frewin first started working for him, Kubrick was already telling him that it was impossible to understand what cinema could really do without having seen that film. And he kept saying it until shortly before he died, in 1999.

What is ‘The Battle of Algiers’? Winner of the Golden Lion at the 1966 Venice Film Festival, it received three Oscar nominations. Gillo Pontecorvo filmed it in black and white, on the real streets of the Casbah of Algiers, with thousands of local extras and a handful of non-professional actors. The result was so convincing that the film’s advertisements warned that the images did not come from documentary archives. The film reconstructs the most intense years of the Algerian struggle against French colonial rule, between 1954 and 1957. Pontecorvo based it on the memoirs of FLN commander Saadi Yacef, who also appeared in the film, playing a character inspired by himself.
The director spent an entire month running tests before shooting a single scene, used multiple cameras to make the crowds appear larger, and even repeated some takes more than twenty times to wear the actors out. The music, by Ennio Morricone, draws on both traditional North African percussion and military marches. And finally, the film stands out for its refusal to offer a clear moral perspective: both FLN guerrillas and French paratroopers commit atrocities, and no one plays the role of unequivocal hero.

What did Kubrick see in it? In an interview included in the aforementioned article, Kubrick commented that “all films are, in a sense, mockumentaries. You try to get as close to reality as you can, but it is not reality. There are people who do very intelligent things that have fascinated and completely deceived me. For example, ‘The Battle of Algiers’. It is very impressive.” Frewin added a detail: the director went so far as to say that ‘The Battle of Algiers’ and Andrzej Wajda’s ‘Danton’ were the only two films he would have liked to have directed.

Parallels with his cinema. Above all from a thematic point of view, the influence of ‘The Battle of Algiers’ on Kubrick’s cinema is indisputable: ‘Paths of Glory’ examines the mechanics of military hierarchy and the corruption it generates, and ‘Full Metal Jacket’ divides its story into two almost incompatible points of view to show that war does not have only one face. In neither of these films is there a protagonist who triumphs morally, and in that sense Pontecorvo and Kubrick shared the conviction that war films should generate not catharsis but discomfort.

At the Pentagon. The influence of ‘The Battle of Algiers’ extends beyond cinema. In August 2003, the Pentagon’s Directorate of Special Operations organized a screening of the film for senior military and civilian officials. The invitation brochure said: “How to win a battle against terrorism and lose the war of ideas. (…) The French have a plan. It works tactically, but it fails strategically.” The background was the occupation of Iraq: the US army was looking for clues to understand why military victories did not translate into political stability. It was not the only organization to turn to the film: the Black Panthers used it as training material in the 1960s. The IRA also studied it. Argentine intelligence used it in the seventies, for radically different purposes. And today it is screened regularly at West Point, at the Naval War College and at the Academy’s Combating Terrorism Center. In the world of cinema, Christopher Nolan cited it as an influence when he released ‘Dunkirk’ (2017) and ‘The Dark Knight Rises’.