Anthropic is winning the enterprise AI race, so OpenAI has a new plan: become Anthropic

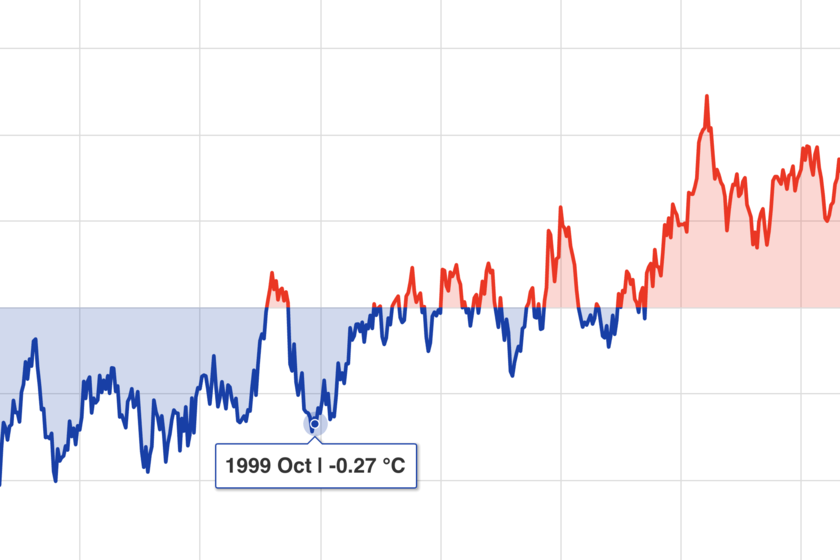

OpenAI has been shooting at everything that moves in AI. It has launched a bit of everything: a video generator, an AI-powered web browser, an image generator known for its Studio Ghibli-style images, e-commerce tools, and more. The logic was simple: whoever tries everything has more chances of getting something right. The result, however, has been the opposite. While OpenAI seemed to be everywhere, Anthropic focused on a single front and has managed to take the ground that mattered most.

Enough of trying everything.

Fidji Simo, the executive Altman hired last summer, recently gathered employees to deliver a message rarely heard at a company growing as fast as OpenAI: its main rival was teaching it a lesson. What Anthropic is doing, Simo explained, should be a wake-up call for OpenAI, which has lost its lead among software developers and enterprise customers. “We cannot waste this moment because we are distracted by parallel projects,” she stressed.

The hidden cost of doing a little of everything.

The problem with shooting at everything that moves is not only a lack of focus but also the resources it consumes. For companies that develop foundation models, the key resource is computing capacity, and at OpenAI that capacity shifted from one team to another depending on the priorities of the day. The Sora team, for example, was folded into the research division even though Sora is one of the company’s most visible products. OpenAI was growing fast in too many directions, which also created internal tensions over which projects should take priority.

Anthropic focused on one thing.

While OpenAI diversified, its main rival adopted the opposite strategy: few products, a lot of depth. Claude does not generate images or video, does not have its own browser, and Anthropic is not trying to build its own chips (for now). The company is dedicated to building foundation models and offering them both as a web service and, above all, through APIs for companies and developers.

Claude Code, its flagship programming product, became a viral phenomenon among software engineers last fall. It has since established itself as the go-to tool for amateur developers (vibe coding is still going strong) and, of course, for technical teams at all kinds of companies.

OpenAI strikes back.

The response was not long in coming: last month OpenAI launched a new version of Codex, its programming tool, alongside the new GPT-5.4, a model much more oriented toward professional environments. According to Simo herself, Codex already has more than two million weekly active users, nearly four times as many as at the start of the year. To accelerate adoption, OpenAI is also deploying engineers to consulting firms and business partners.

IPO on the horizon.

Both OpenAI and Anthropic are taking clear steps toward an IPO, which could in fact happen this year. That makes gaining share in the enterprise market (the one that really pays, signs contracts, and justifies valuations) absolutely essential for these IPOs to succeed. The initial share price and real valuation of these companies will depend on how well positioned they are, and OpenAI wants to recover the ground it has lost in the enterprise market. At the staff meeting, Simo said that “we are acting as if this were a code red.”

The paradox of being the pioneer.

OpenAI unleashed the AI fever with the launch of ChatGPT in November 2022 and turned generative AI into an almost everyday phenomenon. Being first, however, usually comes with a trap: it forces you to explore and diversify to remain the reference point, and that is very expensive. Anthropic arrived later, saw where the real money was, and focused squarely on that segment. The student seems to have surpassed the teacher, and OpenAI wants to correct its strategy.

What will happen to so many products?

It remains to be seen how this strategy will affect OpenAI’s entire product catalog. If the company shifts its focus to developers and enterprise solutions, what will happen to its image generator, Sora, or Atlas? The structural tension between being a “research laboratory” and being a “product company” could pose a challenge for a company that, by its very nature, has never stopped exploring new ways to apply AI.

Image | TechCrunch | Wikimedia Commons

In Xataka | Sam Altman says he’s terrified of a world where AI companies believe themselves to be more powerful than the government. It’s just what you’re building