The new Siri will be based on Gemini AI models

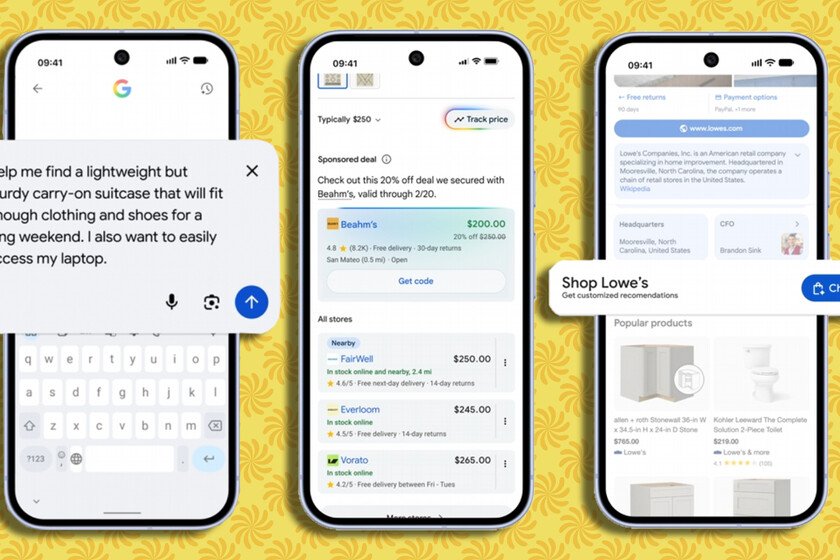

Amid the rise of artificial intelligence, with increasingly sophisticated voice assistants such as those of ChatGPT or Perplexity, Siri is showing its age all too clearly. It doesn’t always understand what we ask of it and often stumbles as soon as we stray from a few predefined patterns. Between promises that have fallen by the wayside, internal tensions and leadership changes, Apple seemed to be losing its footing in one of the most decisive technological races of the decade. And, although it is still too early to know whether it will be able to reverse this dynamic, the company has just made a major move: allying with one of its great rivals.

Agreement with Google. The Cupertino company has signed a multi-year collaboration agreement with the search giant under which the next generation of the so-called Apple Foundation Models will be based on the Gemini models and on Google’s cloud technology. That foundation will power the next Apple Intelligence features, including a more personalized Siri whose arrival is set for “this year.”

With privacy at the center. The statement adds that, despite this change, Apple Intelligence will continue to run on Apple devices and on its Private Cloud Compute platform, in line with the company’s privacy standards. Apple insists that the operational heart of Apple Intelligence does not leave home.

The starting point of everything is WWDC 2024. There Apple presented Apple Intelligence as its great response to the rise of generative AI and placed Siri at the center of that strategy, promising a much deeper understanding of personal context, the ability to “see” what appears on the screen and to chain actions across applications. In practice, this meant that the assistant had to be able to interpret emails, messages, appointments or files and act on them without the user having to jump from one app to another. It was a far more ambitious goal than anything traditional Siri had attempted.

From promises to reality.
At the end of 2024, Apple publicly kept up the pace. In a December press release, it reiterated that Siri’s most advanced capabilities would arrive “in the coming months,” while launching other Apple Intelligence pieces such as Image Playground and Genmoji. In that same context, Apple once again spoke of personal context awareness, on-screen awareness and “hundreds of new actions” within and across its own and third-party apps.

Three months later, in March 2025, the tone changed. In an official statement to Daring Fireball, the company admitted that some of those features would take longer than expected and shifted to talking about a “more personalized” Siri that would be released “over the next year.”

June 2025 arrived and, at that year’s WWDC, Siri did not show a leap comparable to the one that had been hinted at twelve months earlier. This lack of news ended up pushing Apple to explain itself in public. Craig Federighi, chief software officer, and Greg Joswiak, head of marketing, addressed the issue in interviews after the event. Federighi explained that Apple had had a “version 1” of the new Siri ready to arrive between December 2024 and spring 2025, but decided to hold it back after concluding that it would not meet customer expectations or the company’s internal standards within that window.

In the end, everything comes back to the same point. After months of shifting dates, the company now places a more personalized Siri on its immediate roadmap. The announced alliance changes the technical basis for getting there, but it does not eliminate the acid test. It will be actual use, when users start asking complex things of their iPhone or Mac, that determines whether Apple has managed to catch up in a race that never lets up.

Images | Apple | Google