Oracle weighing in on the NVIDIA-OpenAI soap opera is a bad sign. That it will stay in the red until 2029 is another

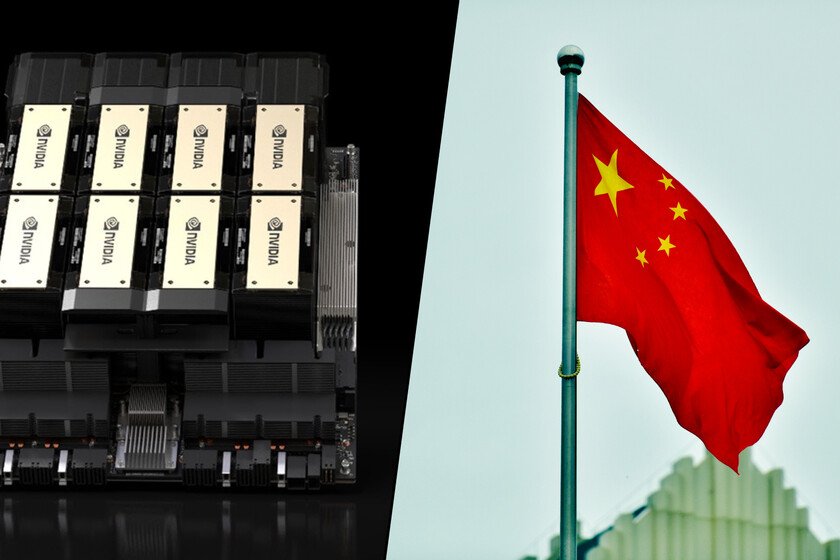

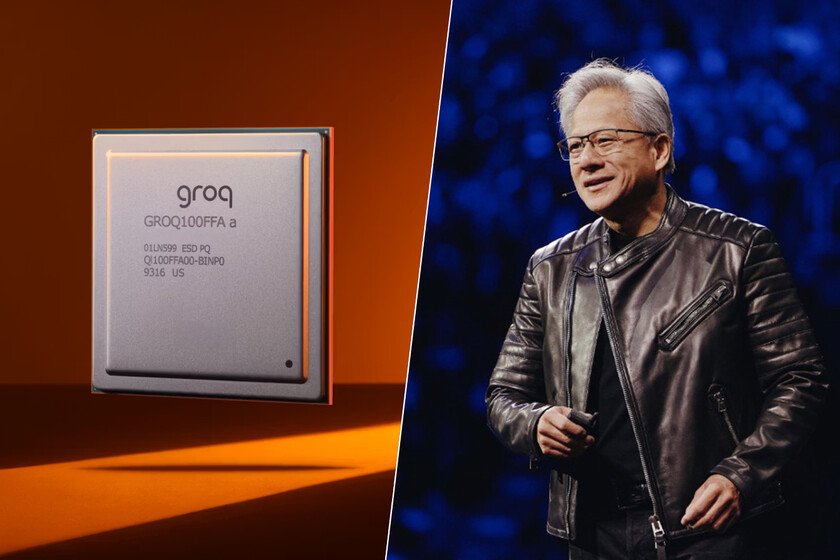

Oracle said in a tweet that the agreement between NVIDIA and OpenAI has “zero impact” on its financial relationship with the company that owns ChatGPT. This is more complicated than it seems, because the AI business could end up collapsing if a large company like NVIDIA or Oracle shows even a hint of doubt about OpenAI. The latest statements by Jensen Huang, CEO of NVIDIA, have made the market nervous, although Oracle’s own path is not very encouraging either.

Why is it relevant? Oracle just announced that it will raise between $45 billion and $50 billion this year through debt and equity issuance to build cloud infrastructure for its large AI clients. Among them, OpenAI stands out with a five-year, $300 billion contract that starts in 2028. The problem is that OpenAI is not profitable right now, and Oracle needs OpenAI to keep raising capital so that OpenAI can pay it. It is a circular financing loop in which everyone depends on everyone else to keep signing checks.

The numbers don’t add up yet. The contract with OpenAI involves about $60 billion annually starting in 2028. To fulfill it, Oracle must buy approximately 400,000 of NVIDIA’s GB200 chips, at an estimated cost of $40 billion just for its flagship data center in Abilene, Texas. Meanwhile, OpenAI’s total revenue in 2025 was around $13 billion, according to Bloomberg. Oracle is betting its bottom line that a company that currently burns more cash than it generates can pay bills equal to nearly five times its current annual revenue.

The warning signs. In January, investors accused Oracle of hiding the need for more debt to finance its AI infrastructure, according to Reuters. Oracle’s debt-to-equity ratio stands at 6x, and in December its credit default swaps reached levels not seen since the 2008 financial crisis, Bloomberg notes.
On top of all this, Oracle’s stock has fallen 50% from its September peak, reached precisely when it announced the agreement with OpenAI, erasing some $460 billion in market capitalization.

And in the red until 2029. Developing data centers for AI has pushed Oracle’s free cash flow into negative territory, where it is expected to remain until 2030, according to data compiled by Bloomberg. Jefferies estimates that the company will need to raise more funds in 2027 and subsequent years, since cash flow will not turn positive until 2029. Oracle plans to raise $50 billion: half through equity, with convertible preferred securities and a share sale program of up to $20 billion, and the other half through a single bond issue in early 2026.

Between the lines. What really worries the market is the structure of mutual dependence. NVIDIA funds OpenAI. OpenAI pays Oracle. Oracle buys chips from NVIDIA. Everyone’s revenue growth depends on everyone else continuing to write checks. When Jensen Huang, CEO of NVIDIA, told journalists that the $100 billion agreement with OpenAI “was never a commitment” and that they would invest “step by step”, Oracle had to come out with that tweet to calm the waters. And that tweet is precisely the kind of communication that worries investors.

Cover image | IEEE Awards, Hartmann Studios, Wikimedia Commons