AI has no future without nuclear energy: even Nvidia has begun to pray to Bill Gates's reactors

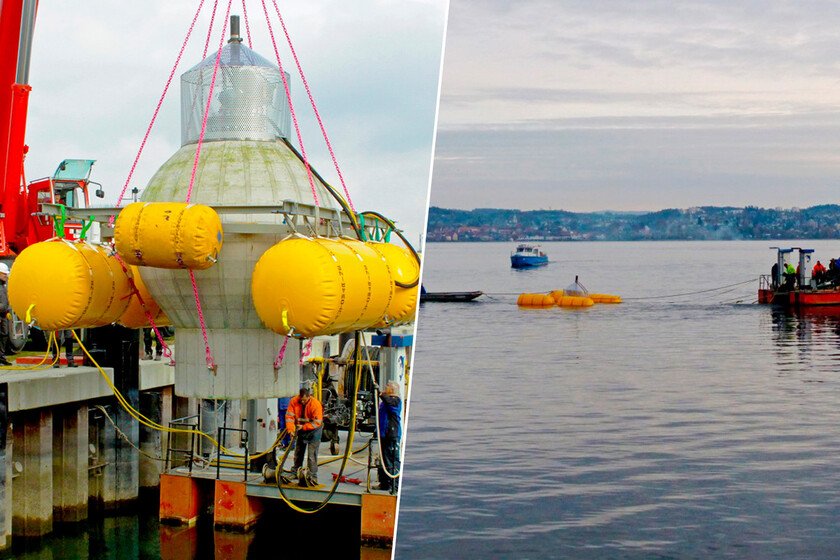

Data centers will account for 10% of the increase in energy demand through 2030, according to the International Energy Agency (IEA). The rise of artificial intelligence (AI) that we are living through has triggered the proliferation of these facilities in the US, China, Japan, Singapore, India, Germany, the Netherlands, and Ireland, among other nations. And for the moment nothing suggests that this trend will run out of steam in the medium term.

A data center dedicated to large-scale AI can exceed 150 MW, and these are precisely the facilities that are proliferating the most. In fact, in 2024 data centers' global consumption amounted to about 415 TWh, a figure that represents around 1.5% of global electricity consumption. To meet this challenge and guarantee data centers the energy they need, more and more companies are turning to nuclear power.

The latest to do so is Nvidia. The company led by Jensen Huang has participated in a 650-million-dollar financing round to support the projects of TerraPower, the nuclear energy company founded by Bill Gates in 2006. With this decision, Nvidia joins the strategy that advocates using small modular reactors (known as SMRs) to deliver to data centers the electricity they need. And, in passing, it gains a foothold in a sector with undeniable growth potential.

TerraPower is already building the first Natrium nuclear reactor

The nuclear fission reactor this company has designed is a compact, modular, sodium-cooled design that uses a molten-salt storage system. Its characteristics make it a fourth-generation machine that, according to its developers, will be able to generate electricity at half the cost of a conventional nuclear fission reactor.
In any case, the interesting thing is that TerraPower's first Natrium nuclear reactor is being built in a mining town in Wyoming (USA) and, according to Bill Gates, will be completed in 2030.

It sounds good, but we must not overlook that this is a new-generation design, so at first glance the five-year timeline TerraPower is working with seems too optimistic. However, this reactor has an important asset in its favor: on paper, commissioning it should be faster and cheaper than commissioning conventional reactors.

In addition, a Spanish public company is participating in the construction of this machine. It is called Ensa (Equipos Nucleares, S.A.), is based in Cantabria, and has more than five decades of experience designing and manufacturing large components for the nuclear industry. TerraPower's decision to partner with it is undoubtedly a boost that will strengthen Ensa's international profile. And, perhaps, it will open the door to other next-generation nuclear energy projects.

“This is the first reactor of its kind to be manufactured following the highest standards of safety and quality, in accordance with the most demanding nuclear regulations,” an Ensa spokesperson has said. Interestingly, this Spanish company will take part in manufacturing the lid of the Natrium reactor. One last interesting note: Ensa is also currently involved in the construction of ITER (International Thermonuclear Experimental Reactor), the experimental nuclear fusion reactor that an international consortium led by Europe is readying in the French town of Cadarache.

Image | TerraPower

More information | The Register