With AI, Microsoft has once again insisted that we talk to our computer: experience says that we don’t feel like it

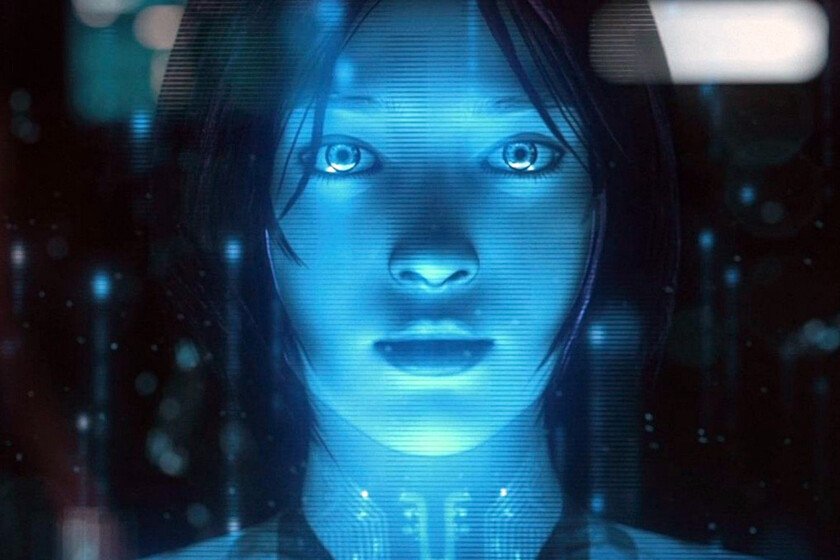

You get up in the morning, go to work and sit down in front of the computer, but the first thing you do is not reach for the mouse and keyboard: you say “Hey, Copilot”. Can you imagine it? I can't, not entirely, but that is Microsoft's clear obsession: getting us to talk to our PC instead of using the usual peripherals. That futuristic vision is striking, but it faces several enormous challenges.

What memories!

Microsoft's intention, shared with other technology companies, that we talk to machines goes back a long way. The first generation of voice assistants pursued precisely that goal: Alexa, Google Assistant and, of course, Cortana all tried to get us to talk much more with our devices.

We were not prepared to talk to machines

Their success was rather limited, and in 2023 Nadella himself admitted that those “smart” speakers, for example, were “dumb as a rock”.

Cortana tried

The Redmond company certainly tried to make Cortana a success. It offered it on Windows 10 as well as on Android and iOS... and even on the sadly defunct Windows Phone. Over time the company realized that the assistant was not a good fit and let it die little by little. Microsoft then used the launch of ChatGPT to build its new AI-powered assistant and finally kill off its first one: Copilot wants to be what Cortana never could.

Who asked for this?

With that “Hey, Copilot”, the same thing is happening as with Cortana: did anyone ask Microsoft to integrate a voice assistant into Windows? The first-generation voice assistants were relegated to marginal use, and Amazon suffered this problem firsthand. It bet billions of dollars that Echo devices would become something we would never stop talking to, but most people just used them to set timers and play music.

AI promises to go much further

In spring 2024, however, came a hopeful moment for this kind of technology.
OpenAI launched GPT-4o and showed that natural conversations with a mobile phone were not only possible but also very powerful. AI could be our confidant and companion (controversy included) or our private tutor, and, as others later set out to show, it could also do things for us just by being spoken to. Just ask the vibe coders.

But we still find it hard to talk to the PC

Since then we certainly seem to have grown a little more used to talking to our smartphones, but things look different on the PC. The figures show that 77% of young people use voice on their smartphones, while only 38% of them do so on the PC.

“But on the PC everyone can hear me”

There is also a sociological component to voice use on the PC. The mobile phone is more intimate and personal, while the PC is often used in a fixed setting with people around who can overhear what we say. Moreover, in a shared physical space, the unspoken rules of coexistence (do not disturb, do not invade other people's acoustic space) outweigh the promise of convenience.

And then there is distrust

Microsoft is not helped by its recent history, especially with Recall, a feature that seemed genuinely striking and ingenious but ended up being delayed after sparking a major privacy controversy. The launch of the new Windows 11 features, with “Hey, Copilot” as the main protagonist, does not seem to have been received with much enthusiasm, and the tone of, for example, the comments in this long thread is one of skepticism.

Rivals focus on mobile phones and speakers, not the PC

The truth is that voice is not exactly catching on as a way to interact with our devices. The erratic launch of Alexa+ does not seem to be delivering great benefits, Apple keeps us waiting for its revamped version of Siri, and only Google has taken a step forward with Gemini, although not clearly on the desktop.
Talking to machines works, but not as much on the PC as on the mobile.

A triumph for accessibility

One area where this technology has a clear use case is accessibility. For users with reduced mobility, being able to dictate or control the device by voice can be transformative. That need, however, is concrete and well defined: it does not justify a general redesign of how we interact with the computer, or a marketing campaign that tries to get us all talking to it. Voice should solve problems, not be a sideshow gimmick.

Microsoft's real challenge is not technical (the technology is there) but human. The company must convince people that talking to the PC makes sense. To do so, it must address three fronts: privacy; the social context, so that you don't mind talking to your PC; and, of course, making sure that this interaction is practically useful and actually works. This is where Copilot Actions comes in, and like everything else it will have to show that Microsoft is on the right path. Otherwise, “Hey, Copilot” could become the new Cortana.

The news “With AI, Microsoft has once again insisted that we talk to our computer: experience says that we don't feel like it” was originally published in Xataka by Javier Pastor.