Until we saw the data from other countries

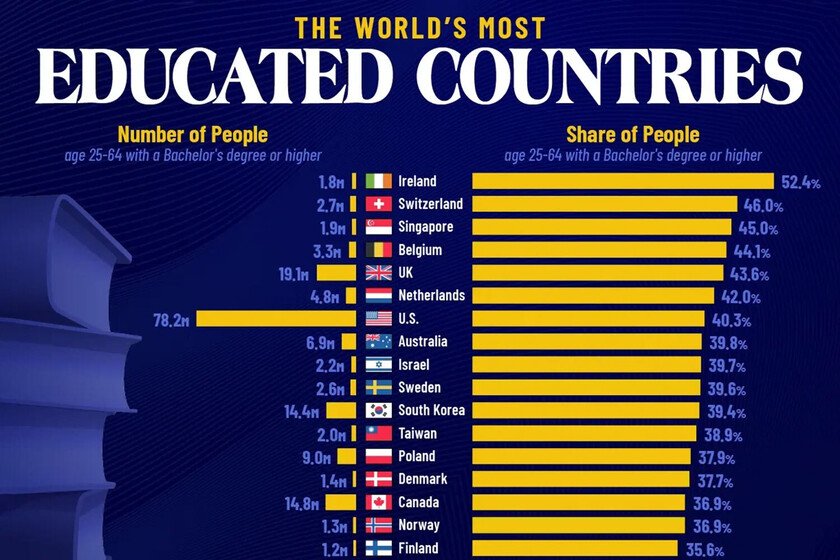

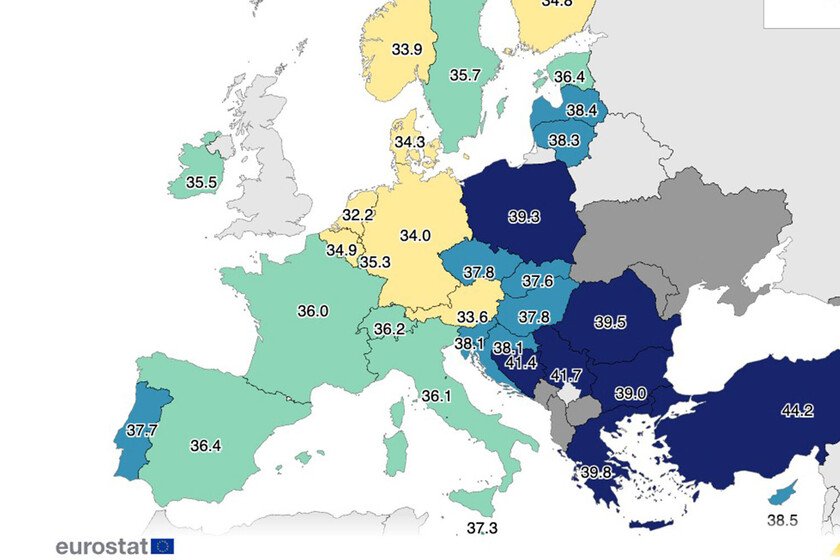

Most second-year Bachillerato students in Spain have already sat their university entrance exams (PAU) and now face the challenge of higher education. Spain has made considerable progress in the number of people with a university degree or other higher studies. However, a look at the countries around us is enough to confirm that there is still a lot of work to do in this area.

The "most educated" countries. A study prepared by CBRE Research, using the latest available data on higher education in OECD countries and the main economic powers, has established the list of countries with the highest percentage of adults holding higher education qualifications. Countries with a higher proportion of qualified workers tend to offer better productivity and greater capacity for innovation and economic growth, thanks to a better-prepared workforce. The portal Visualcapitalist has collected all this data in a single, more illuminating chart that shows at a glance where Spain's level of higher education stands relative to other countries.

Spain, a lot of work to do. According to Fedea data, Spain has done very good work on education over recent decades, going from an illiteracy rate of 15% of the active population over 25 years of age in 1960 to only 1.9% in 2022. However, according to the CBRE Research study, only 28.8% of the active population between 25 and 64 years of age has a university degree or accredited higher studies. In absolute figures, this means that 9.2 million Spaniards have completed university studies.

Ireland, the example to follow. The absolute leader in population with university training is Ireland, which at 52.4% ranks as the country with the most graduates in its labor market.
Eurostat figures on Ireland support the theory that a better-educated population improves productivity: it ranks as the OECD country with the highest productivity, double the European average. In absolute terms, however, only 1.8 million Irish people have a university education. Switzerland (46%), Singapore (45%), Belgium (44.1%) and the United Kingdom (43.6%) complete the top 5 of countries with the highest percentage of university graduates relative to their population.

Our surroundings are no better. If we look at our neighbors, France fares no better than Spain in terms of education, with 28.1% of its population holding higher studies, while Portugal exceeds Spain's percentage with 29.4% of its active population holding a university education.

Demographic trap. Although the ranking uses percentages of each country's population, it is worth highlighting the differences in population size when weighing the percentage of people with a university degree and the economic effort this represents for each state. For example, Singapore had a total population of 5.91 million inhabitants in 2023, of whom 1.9 million held a higher education qualification, hence its percentage of 45%. By contrast, India, which occupies the penultimate position in terms of training, had a population of 1.438 billion inhabitants in 2023, of whom 139.4 million had completed a university degree. The relative percentage with respect to its total population is 14.2%, but it is an educational system that has trained 139.4 million people.

Brain drain. One of the biggest challenges for these countries is ensuring that, once they complete their higher studies, these future professionals return the investment the country has made in their education, avoiding a talent drain so that they add value to the economy.
The problem is that the lack of job stability and opportunities in countries such as Spain or Portugal is leaving many graduates with no choice but to emigrate to countries such as Australia, Ireland, Germany or the US to develop their professional careers.

In Xataka | The 100 best universities in the world, excluding those in the US, laid out in this revealing chart

Image | Visualcapitalist