The US tried to treat Anthropic like an enemy company for refusing to arm its AI. A judge has just stopped it

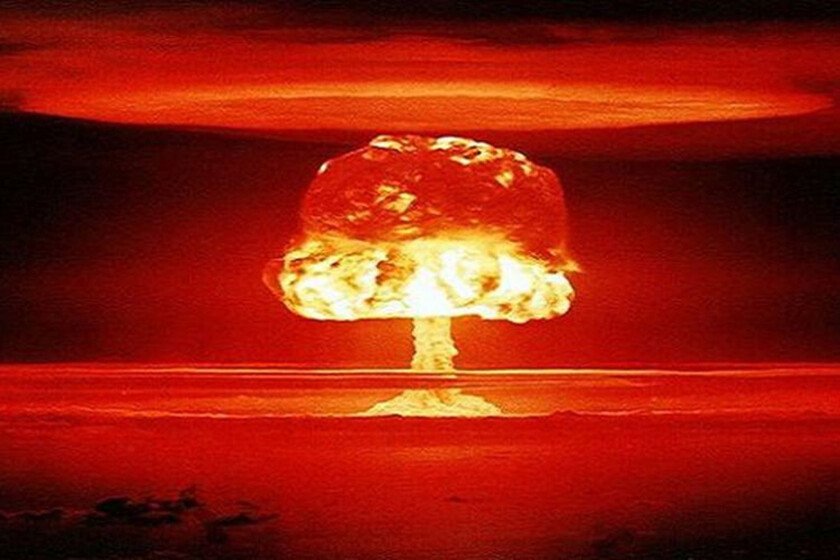

There is a new chapter in the clash between Anthropic and the Pentagon, and it is one that cannot have sat well with the Trump administration. After the government declared the company “a risk to the supply chain” (in effect, blacklisting it), Anthropic went to court, and the judge has now sided with it, putting the order on hold.

What has happened. The Trump administration sought to punish Anthropic after it refused to let its AI be used in lethal autonomous weapons and mass surveillance, but Judge Rita Lin, of the Northern District of California, has just blocked the order. The judge has asked the government for a report, due before April 6, detailing how it has complied with her ruling. The government has seven days to appeal.

“Orwellian idea”. The judge is quite harsh about the government’s decision. She considers it an “arbitrary and capricious” move and writes that “no provision of the applicable law supports the Orwellian idea that an American company can be branded as a potential adversary and saboteur of the United States for expressing its disagreement with the Government.” She adds that if the problem is a lack of trust in Anthropic’s AI, “the War Department could simply stop using Claude.” That will not sit well with the Trump administration either. Her order also mentions the “financial and reputational prejudice” to which Anthropic would be exposed if the measure were applied, arguing that it could leave the company paralyzed.

Why it matters. It is the first time that a restriction of this caliber has been applied to a domestic company. Supply chain risk is defined as “the risk that an adversary could sabotage or subvert a covered system,” but here the designation has been used as punishment for disagreement.
Furthermore, if the order were implemented, Anthropic would be commercially isolated: it would be barred from working not only with civilian agencies but also with private companies that want to work with the Defense Department.

And now what. Several legal experts had already warned that the decision would not survive legal scrutiny, and so it has proved. The ruling is a victory for Anthropic, which said in a statement that “Our goal remains to collaborate constructively with the Government to ensure that all Americans benefit from safe and reliable AI.” The question now is what the Trump administration, which has not yet commented on the matter, will do next.

Image | Anthropic, edited