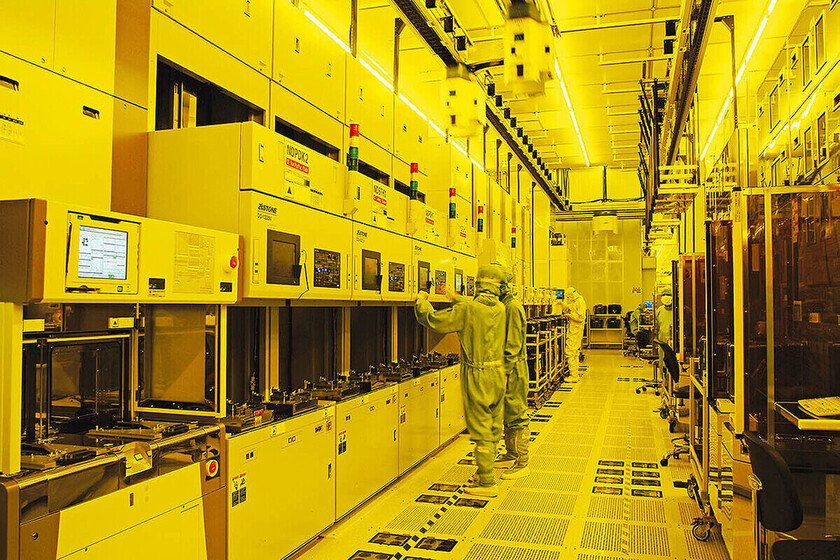

The US will impose tariffs of 25 to 100% on chips manufactured in Taiwan

Donald Trump is keeping his word. During the election campaign he promised to take whatever decisions were necessary to strengthen the business of US companies within the US. He also pledged to punish with tariffs all those countries that threaten the interests of the nation he has led since January 20. He has been in office for barely a week, and he is already doing both.

“In the very close future we will impose tariffs on foreign production of computer chips, semiconductors and pharmaceutical products to return the manufacture of these essential goods to the US (…) went to Taiwan; now we want them to return. We do not want to give them millions of dollars in the ridiculous Biden program. 100%”, Donald Trump declared yesterday during a conference held in Florida (USA).

This measure by Donald Trump's government has not caught TSMC off guard

The explicit mention of Taiwan that the US president made a few hours ago is a very clear allusion to TSMC. There are other semiconductor manufacturers on this Asian island, such as UMC, but their relevance in the chip market is much lower than that of the company currently led by C.C. Wei. TSMC dominates the integrated circuit market with a share of approximately 60%, so its leadership in the chip manufacturing industry is indisputable.

In any case, the step the US administration is about to take will not take TSMC by surprise. The company has been shaping its strategy for more than four years to extend its semiconductor manufacturing infrastructure beyond Taiwan's borders, and it is doing so for two reasons. On the one hand, it is an effective way to protect its business if an armed conflict between China and Taiwan were ever to break out and its plants on the island were rendered unusable.
On the other hand, TSMC is significantly expanding its infrastructure in the US. Its plan is for the new Arizona factories not only to protect its business from a possible conflict between China and Taiwan, but also to shield it from the foreseeable US tariffs.

The first of these plants is already producing integrated circuits on the N4 lithographic node, which belongs to the 5 nm FinFET family. In fact, it is about to deliver its first batches of chips for Apple. The second Arizona factory will be operational in 2028 and will produce integrated circuits on the N3 (3 nm) and N2 (2 nm) nodes. Finally, the third factory will not be fully ready until the end of this decade and will produce chips on the N2 (2 nm) node.

Until now, TSMC's most advanced integration technologies were available only in its Taiwan plants but, as we have just seen, they will soon be in the US as well. In this way the company will solve two problems at a stroke: it will escape the tariffs that the Trump government will approve, and it will reinforce its production infrastructure beyond its home country. One last note: in addition to the US, TSMC is building new plants in Japan and Europe.

Image | TSMC

More information | C-Span