AI agents have indeed changed work and the economy forever. But for now only in one sector: programming

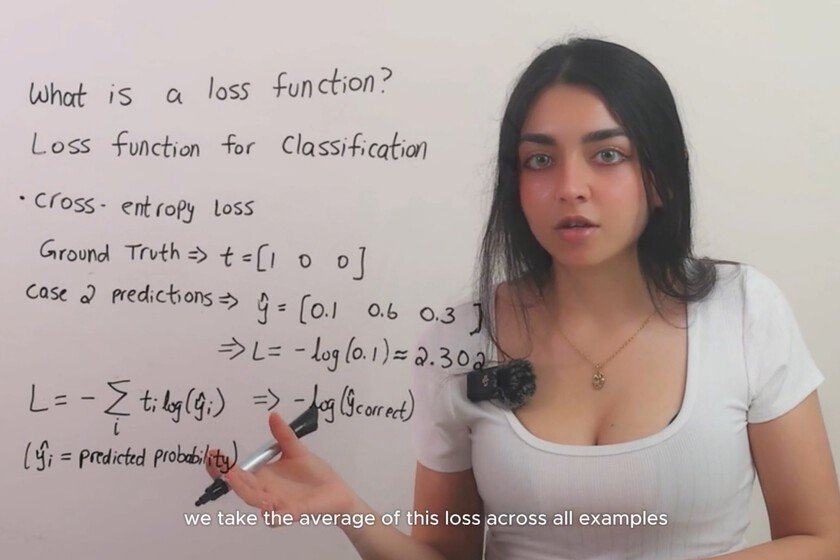

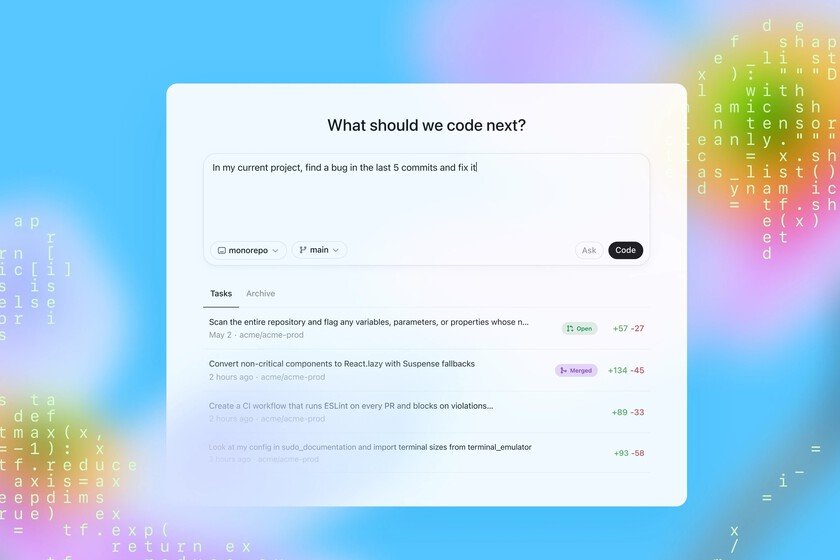

AI agents are beginning to demonstrate their capabilities, but so far the only area where they clearly do so is programming. An Anthropic report shows that software engineering accounts for nearly half of current AI agent activity, and that proves two things: first, that AI can effectively enhance work; second, that there is a huge opportunity in hundreds of verticals where AI has barely landed.

What has happened. If any sector has embraced AI and AI agents, it is programming. Platforms such as Cursor and Windsurf at first, and Claude Code, OpenAI Codex and Antigravity today, have allowed all kinds of people, whether or not they know how to program, to turn their projects into reality with very little effort. It is a clear case of how AI can contribute to a field, but there is a problem: it is practically the only field where it has actually done so.

Distribution of requests to AI tools by segment. Software engineering accounts for almost 50% of those calls or requests, at least on the Claude platform. Source: Anthropic.

Verticals with plenty of headroom. As the chart shows, AI agents have very little or practically no presence in a large number of verticals where there is clearly a notable opportunity to take advantage of these tools. Office task automation is the second largest category, with 9.1% of the Claude function calls analyzed in this report. Below it are segments such as marketing, sales, finance, business analysis and scientific research.

And others that are ignoring AI. In quite a few sectors, AI agents seem to be barely present. Travel, legal, medical, e-commerce and education look like perfect candidates for these tools, but for now that is not the case: AI agent presence is very, very small in all of them.

Claude Code can work longer and longer: nearly double what it could three months ago, in fact. Source: Anthropic.

Models can now work autonomously for a long time. It is true that models used to be limited by how long they could run autonomously, chaining actions and assessing their own progress to keep going. That is less true now. Claude Code, for example, nearly doubled the length of its longest sessions in just three months: from 25 minutes in October 2025 to 45 minutes in January 2026.

And they need less human intervention. Another revealing finding of the study is that, as these agents evolve, they not only run autonomously for longer but also require fewer human interventions. Situations in which an agent “needs human help” to continue are becoming rarer: in August 2025 the average was 5.4 human interventions per session, and by December it had dropped to 3.3.

We trust AI more and more. Anthropic has also noticed a distinctive pattern among users: they increasingly trust AI agents. In programming, novices approve each new step before it is executed, while veterans delegate and step in when something goes wrong; they have moved from pre-approving everything to active, continuous monitoring. As Anthropic puts it, “Users develop confidence as they work with the model, and change their monitoring strategy based on that growing confidence.”

From programming to other fields. What happened in programming could happen elsewhere. The challenge is to build AI agents tailored to each segment using data specific to that vertical. If an AI is to help in the legal segment, it must be trained specifically for that segment. When AI was trained on thousands of code repositories from GitHub, it learned and improved.
The same approach can be applied to other verticals, although the challenge is considerable, because programming was an ideal field for AI: it is highly deterministic. Code either works or it doesn't, and either way, execution logs make it possible to fine-tune its behavior.

The new unicorns await. As entrepreneur Garry Tan points out in his newsletter, over the last two decades SaaS platforms have captured 40% of venture capital investment, and that industry has produced more than 170 unicorns. “The thesis is simple,” Tan concludes, “all of those unicorns have an equivalent in the form of vertical AI waiting.”

Promises and realities. AI agents therefore promise many changes across a multitude of segments, but today the practical success of AI (there is no economic success yet) is limited to programming. Will we be able to carry it over to other segments? The opportunity is there, but saying it is one thing and doing it is quite another… even with AI.

Image | Joshua Reddekopp