If anyone was waiting for the AI bubble to burst, NVIDIA’s results have a message: sit tight

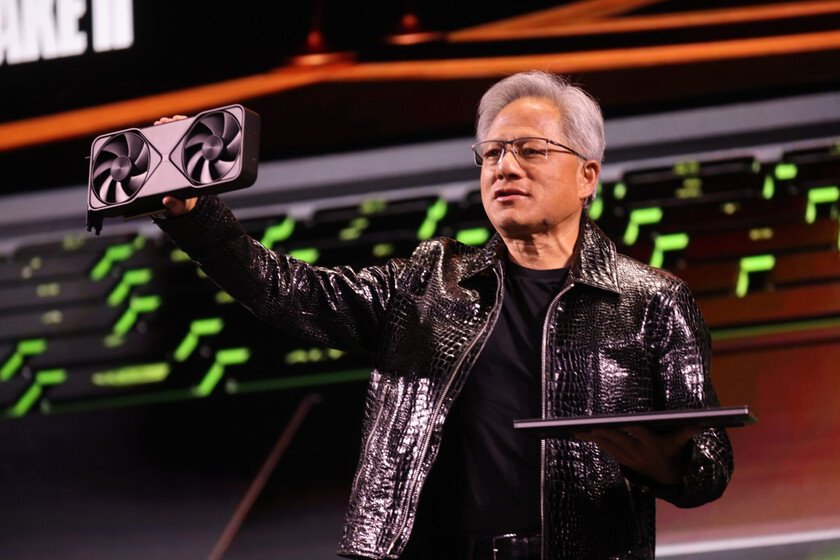

NVIDIA has just published its results for the fourth quarter of its latest fiscal year, and it has left Wall Street speechless: revenue of $68.1 billion, net profit nearly double that of the same period a year earlier, and a forecast for the next quarter that far exceeds analysts' expectations. All this comes against a turbulent backdrop in which more efficient models and other alternatives are starting to appear. The DeepSeek crash is now far behind us, and demand for chips is not slowing down. Here are the numbers in detail.

In case its position was not clear. Only a handful of companies in history have exceeded $100 billion in annual profit. Alphabet, Microsoft and Apple are in that club. NVIDIA has just joined them, with $120 billion in profit over the last twelve months, according to the report. The difference is speed: just three years ago, its annual profit was $4.4 billion. We can say with certainty that no technology company has ever grown so quickly at that scale.

AI, and more AI. The engine behind these profits is its data center business, which generated $62.3 billion in the quarter, 71% more than a year ago. Within that segment, revenue from its Blackwell chips went from $32.6 billion to $51.3 billion, while networking (NVLink, Spectrum-X and InfiniBand) grew from $3 billion to $11 billion. Gross margin is 75%, and earnings per share nearly doubled to $1.76 in GAAP terms (the official accounting rulebook that companies follow to report their results transparently).

What Jensen Huang says. "Without computing, there is no way to generate tokens. Without tokens, there is no way to grow revenue," NVIDIA's CEO said bluntly on the call with investors. His thesis is that in the new AI economy, computing power translates directly into revenue for NVIDIA's customers.
That is why the large cloud service providers (Google, Amazon, Microsoft, Meta) keep raising their capex budgets, which together will exceed $500 billion in 2026, to build AI data centers. And NVIDIA is the main beneficiary of that spending.

What DeepSeek did not break, but accelerated. At the beginning of 2025, the emergence of the Chinese DeepSeek model sent an unprecedented tremor through the markets and left a simple question in our minds: if AI becomes more efficient, why do we need so many chips? The answer from NVIDIA's results is that efficiency does not reduce infrastructure demand, it multiplies it. Every improvement in inference efficiency lowers the cost per token, which encourages more companies to deploy more AI applications, which in turn requires more compute. It is Jevons' paradox applied to AI: efficiency expands the market instead of shrinking it.

Agentic AI as the next catalyst. On the same call with investors and analysts, Huang stressed that "enterprise adoption of agents is skyrocketing." AI agents, systems that make decisions and execute tasks autonomously, require many more inference cycles than chatbots. They are the next step in the AI value chain, and NVIDIA is once again in a privileged position. Colette Kress, the company's CFO, also confirmed that the first samples of Vera Rubin, the next generation of chips due to arrive later this year, have already been shipped.

China and the competition. It is not all good news. NVIDIA acknowledged that its forecast for the next quarter ($78 billion) does not include compute revenue from China. The company has generated only about $60 million from H20 chips since the Trump administration reapproved some sales in August 2025, according to SEC filings, and has yet to earn any revenue from the more recently approved H200. Regulatory uncertainty with Beijing remains a pebble in Huang's shoe.
In parallel, competitors such as AMD, Broadcom and Google's own custom chips (TPUs) are gaining ground. But NVIDIA's CEO remains focused on his vision. As he put it on the call: "Every company depends on software, and all software will depend on AI." As long as that holds, everything indicates that NVIDIA will keep selling the picks and shovels.

Cover image | NVIDIA