NASA’s alliance to finally understand dark matter

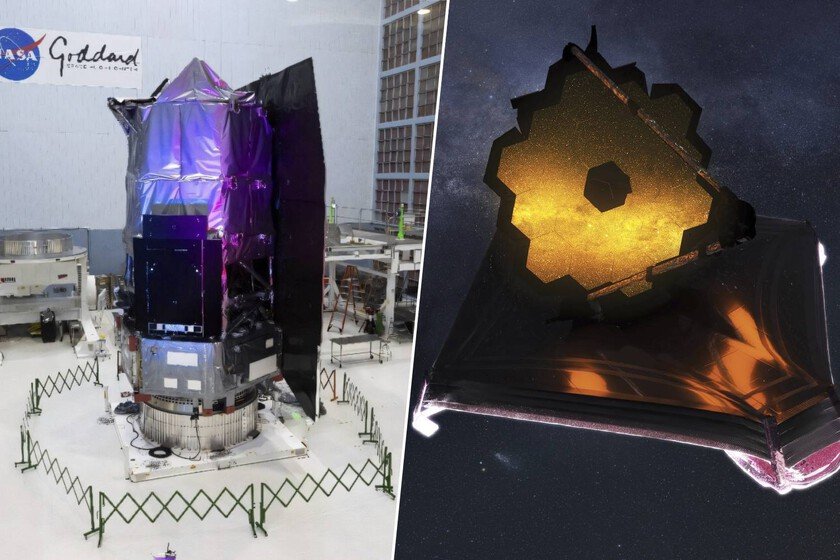

This week, NASA presented the Nancy Grace Roman Space Telescope, better known as the Roman Space Telescope. Once it launches, scheduled for September of this year at the earliest, it will become the space agency’s newest space telescope. It will operate alongside others such as Hubble and James Webb, but it has something they lack: the ability to survey vast expanses of the Universe at once. That is what makes it special.

Much more space

The Roman Space Telescope has 18 detectors that give it a panoramic view of space. It is named after Nancy Grace Roman, known as the "mother of Hubble" for her key role in developing that telescope. Yet the two instruments differ greatly: Roman can observe a field 100 times larger than Hubble’s. As a result, it is expected to discover tens of thousands of planets, billions of galaxies and stars, and thousands of supernovae.

An ideal companion for James Webb

The Roman Space Telescope also has advantages over James Webb. While its field of view is 100 times larger than Hubble’s, it is 50 times larger than James Webb’s. This lets it survey the sky without researchers having to pick a specific target in advance. When exploring such large expanses, it may come across something unexpected at any time.

That is where James Webb comes into play. Although it covers less sky at once, it is much more precise: its mirror is larger, so it collects more light and can resolve finer detail. If Roman detects something interesting, James Webb can examine it as if under a magnifying glass.

Context matters

We have already seen that James Webb can study Roman’s detections with more precision. But the help also runs in the other direction, since Roman can provide context around James Webb’s targets.

Together to unravel dark matter

The biggest difference between Roman and James Webb on the one hand and Hubble on the other is that the first two observe mainly in the infrared rather than in visible light. This lets them see through cosmic dust, detect cold objects, and look further back in time. That last ability is extremely useful for understanding how the Universe expands and, incidentally, for unravelling some mysteries about dark matter.

The Universe expands

We have known for a long time that the Universe is expanding. That is, galaxies are moving away from each other, not because they travel through space, but because the space between them stretches, like a balloon being inflated. We also know that this expansion is accelerating. But why? It is not yet clear; the leading hypothesis points to dark energy, a still-unexplained component that, like dark matter, is one of the great unknowns Roman was designed to investigate.

Supernovae that act as lighthouses

To better understand what is happening, it is essential to measure precisely how galaxies are moving apart. One of the best ways to do this is to use Type Ia supernova explosions as beacons. These explosions reach a known peak brightness, so comparing that intrinsic brightness with how bright they appear from Earth, or from wherever a space telescope is located, reveals their distance. The problem is that they occur only about once every 500 years in the Milky Way.

An infrared telescope can look very far back in time, but James Webb only does so in small patches of sky. Roman, on the other hand, covers such large areas that it could detect several of these explosions at the same time. Having several beacons at once would help map the Universe better and understand why it expands as it does. Once the beacons are located, James Webb would step in to do its detailed analysis.

Together they can unravel very old mysteries of astrophysics. Neither is better than the other.
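The distance measurement with Type Ia supernovae rests on a simple relation, the distance modulus m - M = 5 log10(d / 10 pc), where m is the apparent magnitude seen from Earth and M the intrinsic (absolute) peak magnitude. The Python sketch below is only a minimal illustration, not NASA’s actual pipeline: it assumes a typical Type Ia peak absolute magnitude of about -19.3, uses a hypothetical observed magnitude, ignores dust extinction and the K-correction, and the function name `distance_parsecs` is hypothetical.

```python
import math

# Assumed typical peak absolute magnitude of a Type Ia supernova (approximate value).
TYPE_IA_PEAK_ABS_MAG = -19.3


def distance_parsecs(apparent_mag: float, absolute_mag: float = TYPE_IA_PEAK_ABS_MAG) -> float:
    """Return the distance in parsecs from the distance modulus m - M = 5 * log10(d / 10 pc).

    At cosmological distances the result is the luminosity distance.
    Dust extinction and the K-correction are ignored in this sketch.
    """
    return 10 ** ((apparent_mag - absolute_mag + 5) / 5)


if __name__ == "__main__":
    # Hypothetical observation: a Type Ia supernova peaking at apparent magnitude 20.7.
    # m - M = 40, so d = 10**9 parsecs, i.e. about 1000 megaparsecs.
    d_pc = distance_parsecs(20.7)
    print(f"{d_pc / 1e6:.0f} Mpc")
```

Every extra 5 magnitudes of faintness means the supernova is 10 times farther away, which is why a telescope that catches many faint, distant explosions at once is so valuable for mapping the expansion.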
Image | POT