Summarize everything in your email inbox with Claude, Gemini or ChatGPT

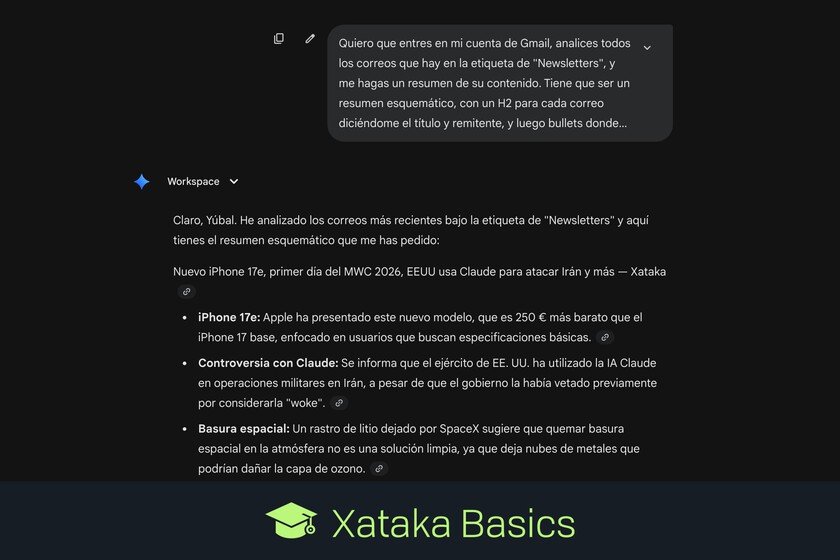

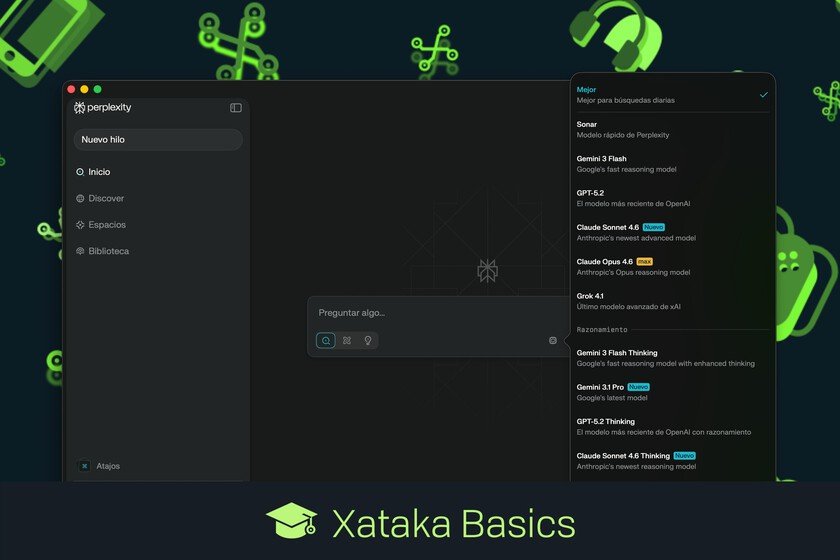

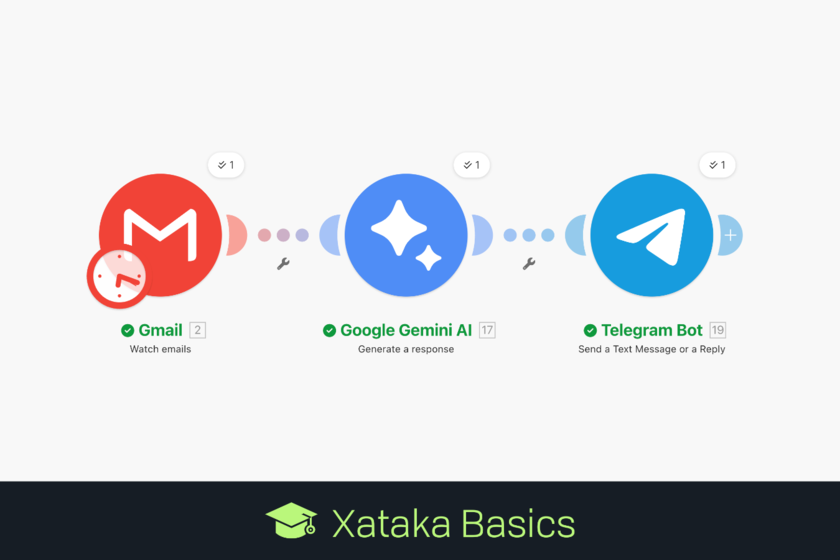

We are going to explain how to use artificial intelligence to summarize the newsletters in your email whenever you need it. If they have been piling up and you don’t have time to read them, you can ask the AI to summarize them all for you. If your email is Gmail, you can use Gemini or Claude, and if you have an Outlook account, you can do it with ChatGPT. These are the AIs that have connectors for each mail service. We will start, though, with how we recommend organizing the newsletters in your email so that the AI can find them more easily.

First, organize your newsletters

Before you start, I recommend tagging all your newsletters using the label system in Gmail or the category system in Outlook. That way, you can later ask the AI to search directly in that label or category instead of having it analyze the entire contents of your mailbox.

So take your time going through the newsletters and tagging them. At first you will have to label them all, but after that each sender address will be linked to the label, meaning that future issues from senders you have already tagged will arrive correctly labeled.

Now link the AI to your email

Claude has a connector system where you must add and activate Gmail. Gemini lets you do the same with its Connected Apps, and ChatGPT has an Applications section that lets you connect Outlook. In this preliminary step, you link your email account to the AI so that it can access and read your emails.

If privacy is your main concern, you may want to reconsider doing this. In the end you are linking your account to the AI, so it can read and process all your emails when you ask it to, and their content will be stored on the servers of the company behind the AI. The emails will no longer be private; you will be sharing them.

Now, ask the AI for a summary

Now it’s time to go to the AI and write a message asking for the summary.
The prompt has to mention Gmail or Outlook, depending on the AI you use and the email account you have linked. If you followed our recommendation, it should also name the newsletter label and ask for a summary. You can also specify the structure of the summary so that it is more to your liking.

This is the prompt that I have used:

I want you to go into my Gmail account, analyze all the emails in the “Newsletters” label, and give me a summary of their content. It has to be a schematic summary, with an H2 for each email telling me the title and sender, and then bullets where you explain the most interesting points of its content.

With this, the AI will start looking through the emails in your account and will give you a summary in the form you requested. Keep in mind that you can simply tell it to search for newsletters without having tagged them first, but then it may not find them all, or it may treat something as a newsletter that really is not one.

Each AI will present the results in its own way, although it will keep the structure you asked for if you specified one. With the prompt we have used, you will get everything summarized in a few points per email, so you can read it all in just a few minutes.
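
If you use more than one mail service or label, the prompt described above can be treated as a template. The following is only a minimal sketch in Python, not part of any of these AIs: the function name `build_summary_prompt` and its parameters are hypothetical, and it simply builds the text you would then paste into Claude, Gemini or ChatGPT. It assumes Gmail calls its tags "labels" and Outlook calls them "categories".

```python
def build_summary_prompt(mail_service: str = "Gmail", label: str = "Newsletters") -> str:
    """Build a summary request for the emails under one label or category.

    Hypothetical helper for illustration: it only assembles text and does
    not connect to any mail service or AI.
    """
    if mail_service not in ("Gmail", "Outlook"):
        raise ValueError("mail_service must be 'Gmail' or 'Outlook'")

    # Gmail groups emails with labels; Outlook uses categories.
    container = "label" if mail_service == "Gmail" else "category"

    return (
        f"I want you to go into my {mail_service} account, analyze all the emails "
        f'in the "{label}" {container}, and give me a summary of their content. '
        "It has to be a schematic summary, with an H2 for each email telling me "
        "the title and sender, and then bullets where you explain the most "
        "interesting points of its content."
    )


if __name__ == "__main__":
    # Print a prompt for an Outlook account whose newsletters are in "Newsletters".
    print(build_summary_prompt("Outlook", "Newsletters"))
```

Changing the two arguments is enough to adapt the request to another account or to a differently named label; the requested summary structure stays the same.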