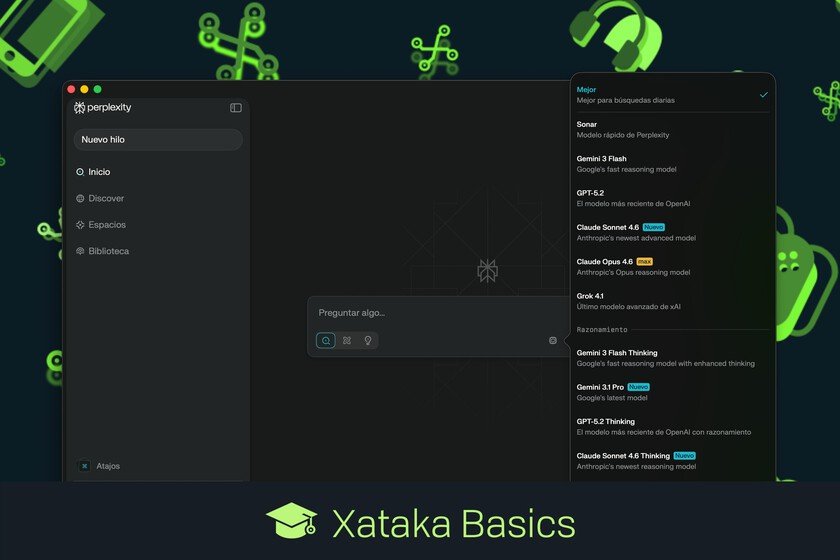

is that Claude looks like GPT and GPT looks like Claude

OpenAI yesterday launched its new foundation model, GPT-5.5. It did so just a week after Anthropic released Opus 4.7, confirming the frenetic cadence that several AI companies are caught up in: not a week goes by without at least one important release. Each model beats its predecessor in the benchmarks, but the surprise is the impression that the latest OpenAI and Anthropic models give. It's as if the roles had been swapped.

Very good… GPT-5.5 is "our smartest and most intuitive yet." OpenAI says this version understands what you really need faster, and that you no longer have to give it so many details for it to "intuit" what you want. It is now available to subscribers of the Plus, Pro, Business and Enterprise plans.

…and expensive. Access for API users will arrive "very soon" according to AI engineers, but be careful, because it will not be a cheap model. It will cost $5 per million input tokens and $30 per million output tokens. That is double what GPT-5.4 cost, but OpenAI seems sure it is worth the price. And they may be right. There is an even more expensive version: GPT-5.5 Pro costs $30 per million input tokens and $180 per million output tokens. It is the highest price we have seen for an AI model, although OpenAI says the model is more token-efficient, which, if true, would reduce the real cost per task.

Agentic by design. The new GPT-5.5 is positioned as a model designed to complete tasks rather than to answer questions. The distinction is very intentional: whereas previous versions required detailed prompts and constant monitoring, GPT-5.5 is intended for long agentic tasks in which the model has to make autonomous decisions over multiple steps. The model uses algorithms it designed itself which, according to OpenAI, let it generate tokens 20% faster than GPT-5.4, and some users seem to have noticed the change.

Benchmarks with nuances.
The benchmark comparison table published by OpenAI shows GPT-5.5 winning 14 of the tests, compared to 4 for Opus 4.7 and 2 for Gemini 3.1 Pro. As always, these are internal tests that will have to be validated independently, but there are some curious data points. GPT-5.5 dominates in Terminal-Bench, FrontierMath and ARC-AGI-2, while Opus 4.7 dominates in SWE-Bench Pro (programming), although according to OpenAI it does so with a "memorization" technique that could influence the results. Those responsible for the Artificial Analysis Intelligence Index are clear that GPT-5.5 is currently the most powerful model on the market, and that the leap over its predecessor is notable.

GPT now looks like Claude (and vice versa). The user community's reactions have drawn attention not to the power of these models, but to their behavior. The Neuron newsletter explains that Opus 4.7 now seems more like a GPT because it consumes more tokens, writes more and does not respond with the tone so characteristic of Anthropic models. Just the opposite happens with GPT-5.5, which gives the feeling that one is using Claude. It writes concisely, doesn't seem as clumsy when it reasons quickly, and is more direct. Dan Shipper, CEO of Every, says that Opus 4.7 seems slow compared to GPT-5.5. For analysts like Dylan Patel, from SemiAnalysis, the reason is that Opus 4.7 is deliberately compute-intensive.

OpenAI has an advantage. Here an interesting advantage appears for OpenAI, which has always tried to secure future computing capacity. It may not have fully succeeded, because demand continues to grow, but here it seems to have room for maneuver, and that spares its most advanced models the infrastructure problems that Anthropic has. It's as if Anthropic were a Ferrari with rationed fuel, and as if OpenAI had just bought the gas station and had (more or less) plenty for its models.

Mini-point for OpenAI?
It's early to say, but the reception of Opus 4.7 has not been as good as we would have hoped, and if GPT-5.5 does live up to expectations, we could see a surprising change of leadership here. It seemed that Anthropic had everything under control with Claude Code and Claude Opus 4.6, but the recent criticism of Opus 4.7 and the apparent virtues of GPT-5.5 could mean a battle won for OpenAI, which certainly needs such wins for its IPO. Meanwhile, of course, there are other rivals lurking.
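
As a rough illustration of what the prices quoted above mean per task, here is a minimal Python sketch. The per-million-token prices come from the article; the function name `task_cost` and the token counts in the example are hypothetical, chosen only to show the arithmetic, and real tasks will vary widely.

```python
# Prices in USD per million tokens, as quoted in the article.
PRICES = {
    "GPT-5.5": {"input": 5.0, "output": 30.0},
    "GPT-5.5 Pro": {"input": 30.0, "output": 180.0},
}


def task_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the cost in USD of one task for the given token counts."""
    price = PRICES[model]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


# Hypothetical task: 20,000 input tokens and 5,000 output tokens.
standard = task_cost("GPT-5.5", 20_000, 5_000)   # 0.25 USD
pro = task_cost("GPT-5.5 Pro", 20_000, 5_000)    # 1.5 USD
print(f"GPT-5.5: ${standard:.2f}, GPT-5.5 Pro: ${pro:.2f}")
```

Because cost scales linearly with token counts, a model that costs twice as much per token only breaks even with its predecessor if it completes the same task with about half the tokens, which is why the token-efficiency claim matters for the real bill.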