There are people sharing their court cases with AI. The problem comes when a judge treats those conversations as evidence

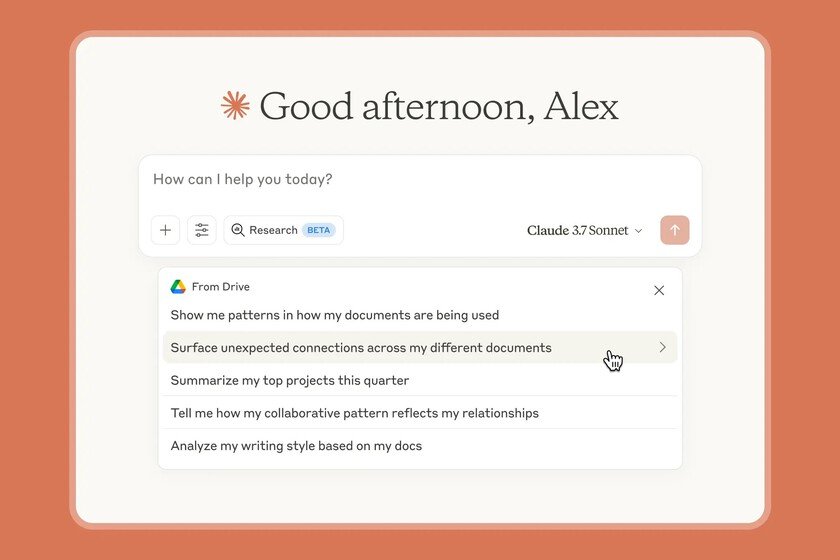

More and more users rely on an AI chatbot as a companion for everything, whether ChatGPT, Gemini, Claude or any other. The problem comes when we share sensitive data with these tools, especially with commercial models built by large technology companies, where we can never be entirely sure where our data ends up. Some people even share their legal information with the assistant, which can lead to something like what recently happened in New York, where a federal judge has just set a historic precedent by ruling that conversations held with a chatbot are not private and therefore not protected by attorney-client privilege. In other words, everything you share with the AI can end up being used against you in court.

The case. Bradley Heppner, an executive accused of a $300 million fraud, used Claude, Anthropic’s chatbot, to ask questions about his legal situation before he was arrested. He generated 31 documents from his conversations with the AI and later shared them with his defense attorneys. When the FBI seized his electronic devices, his attorneys argued that those documents were protected by attorney-client privilege. Judge Jed Rakoff said no.

Why not. As Moish Peltz, a lawyer specializing in digital assets and intellectual property, explained in a post on X, the ruling rests on three reasons. First, an AI is not a lawyer: it is not licensed to practice, owes no duty of loyalty to anyone, and its terms of service expressly disclaim any attorney-client relationship. Second, sharing legal information with an AI is legally equivalent to telling a friend, so it is not protected by professional secrecy. Third, sending ‘non-privileged’ documents to your lawyer afterwards does not magically make them confidential.

The underlying problem. As Peltz points out, the interface of these chatbots creates a false sense of privacy, but in reality you are entering information into a third-party commercial platform that retains your data and reserves broad rights to disclose it. According to the Anthropic privacy policy in effect when Heppner used Claude, the company may disclose both user prompts and the generated responses to “governmental regulatory authorities.”

Dilemma. The court document also reveals an aggravating factor: Heppner entered into the AI information he had previously received from his lawyers. According to Peltz, this poses a dilemma for the prosecution. If it tries to use those documents as evidence at trial, the defense attorneys could become witnesses to the events, potentially forcing a mistrial.

What does it mean for you? According to this ruling, if you are involved in any legal matter, what you share with an AI can be demanded by a court and used as evidence. It doesn’t matter whether you are preparing your defense or seeking preliminary advice; any query could end up being used against you. And this does not apply only to criminal cases: divorces, labor disputes, commercial litigation… any conversation with an AI on these topics falls outside legal protection.

What now. Peltz says legal professionals must explicitly warn their clients about this risk, since they cannot assume people understand it intuitively. The solution he proposes is to set up collaborative AI workspaces shared between lawyer and client, so that any interaction with artificial intelligence takes place under the lawyer’s supervision and within the attorney-client relationship.

Cover image | Romain Dancre and Solen Feyissa

In Xataka | Folding clothes or taking apart LEGOs has always been a tedious task. Xiaomi’s new AI for robots has put an end to it