TSMC is the ‘kingpin’ of chips and Apple has always been its best friend. That just changed

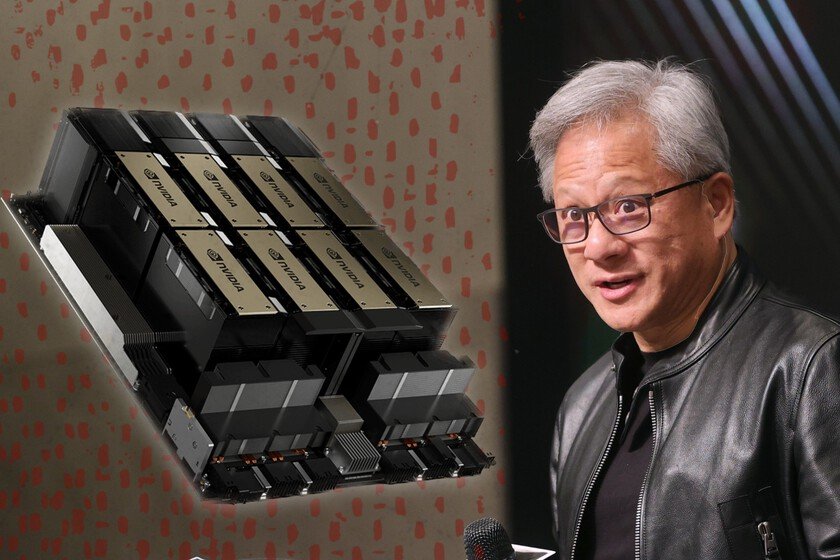

TSMC is the foundry of the world. Although others such as Samsung have muscle, it is the Taiwanese company that has conquered the high-performance chip segment. It has achieved this through capacity, technology and an alliance: the one with Apple. For a decade, TSMC was Apple’s great friend, the company that manufactured its chips and that, with the Apple Silicon designs, revolutionized laptops. Now NVIDIA rules, and it has elbowed its way in.

In short. In the midst of the AI era, with a technological current in which it is impossible to get away from NVIDIA, Apple has more than enough reasons to feel jealous. While the mobile segment faces unprecedented cuts due to the RAM and component crisis, and with Tim Cook himself, CEO of Apple, commenting on the difficulties the company will face in 2026, artificial intelligence is taking off like a rocket. Major memory manufacturers have pivoted to high-bandwidth memory for AI GPUs, and companies like NVIDIA, Phison, AMD and even Chinese ones such as SMIC and Huawei are delighted. They have made the AI Big Techs dependent on their hardware, and no one makes that hardware like TSMC. The result? According to the latest reports, NVIDIA will become its largest customer this year.

The importance of ‘Customer A’. It may seem like a trivial swap, but it is more relevant than we might think. The difference between a ‘Customer A’ and a ‘Customer B’ is that, when production bottlenecks appear, one of the two is given priority. We already saw this in the 2020 semiconductor crisis, when half of the industry was drowning (cars, cameras, TVs and mobile phones) while Apple’s forecasts were not so bad, because it was the darling of a TSMC that focused on iPhone chips to consolidate a lucrative relationship that began with the Apple A8 in the iPhone 6. Jensen Huang himself, CEO of NVIDIA, commented on the move, quite proudly, on a podcast.
“Morris [Morris Chang, founder of TSMC and friend of Huang] will be happy to know that NVIDIA is TSMC’s largest customer right now,” said the CEO. It is by a narrow margin, 19% for NVIDIA compared to 17% for Apple, but it is an achievement and a thermometer of how the industry is doing. Last year, NVIDIA’s contribution to TSMC was 12%, which makes this a considerable jump in a very short time.

“I need a lot of wafers”. Obviously, this does not mean that TSMC is going to stop pampering Apple over other companies. Apple has a huge share of the mobile segment, but NVIDIA is crucial to keep the AI machinery rolling. Despite Google’s attempts with its TPUs, OpenAI’s agreements with Broadcom, Meta’s with NVIDIA and AMD, or xAI manufacturing its own chips, NVIDIA is still the one calling the shots. Even Chinese companies need NVIDIA GPUs and, of course, NVIDIA is more than willing to take its share. On a recent visit to Taiwan, Huang met with local industry heavyweights and noted that “NVIDIA would need a lot of wafers this year,” putting even more pressure on a TSMC that is crucial in the artificial intelligence chain.

Synonym of success. Samsung, Huawei and SMIC are fighting to be alternatives in case TSMC collapses. But TSMC itself has spent the past few years looking at how to diversify the business. The heart and the muscle remain in Taiwan, but the plant in Europe, in Germany, is underway and the company already has an operational foundry in the United States. In fact, there are plans to expand it, because TSMC has more and more clients who need a very specific product that works like a Swiss watch. But this has a flip side: all the industry’s eggs are in the same basket. If TSMC fails, the house of cards can collapse. At least one report already indicates that the American plant, which manufactures for Apple, Intel, NVIDIA and AMD, is overwhelmed by a huge volume of orders.
And there, precisely, lies the importance of being a Customer A… or a Customer B.

Images | TSMC, NVIDIA