US agents say Meta's automated reporting is failing on a key point

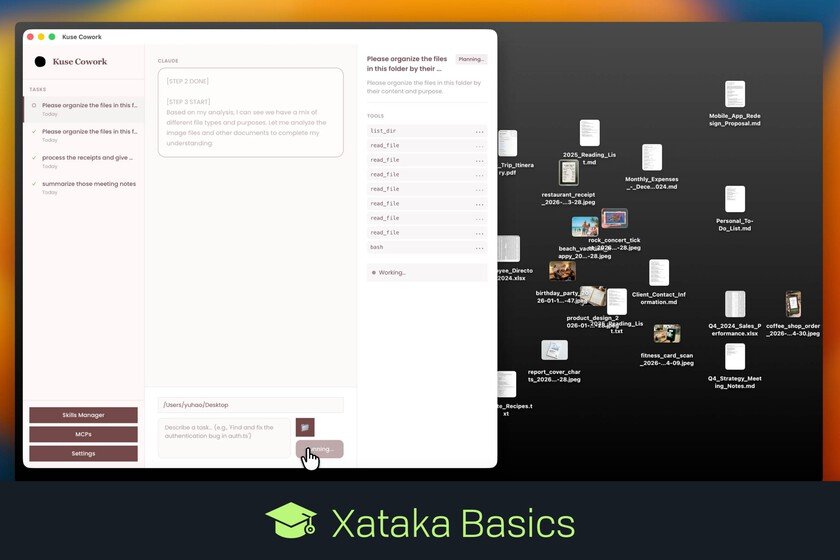

Social networks have used automated systems for years to try to detect some of the most serious crimes circulating on the internet. Among them is child sexual exploitation, a problem that forces platforms, regulators and law enforcement to monitor enormous volumes of content every day. The promise of these tools is clear: identify potential cases sooner and make investigators' work easier. However, some specialized teams in the United States say that the volume of reports they receive from Meta platforms has skyrocketed and that a significant portion of them contain no information they can act on.

Clash between scale and utility. In an ongoing lawsuit in New Mexico, prosecutors maintain that Meta did not adequately disclose what it knew about the risks minors face on its platforms and that it violated state consumer protection laws. According to the Associated Press, the complaint also argues that the company described the safety of its services in a way that did not match the risks faced by children and adolescents. The case is part of a broader wave of lawsuits filed in the United States against large technology companies over the effects their services may have on minors.

Meta rejects that interpretation. Addressing the jury, the company’s lawyer Kevin Huff argued that the company has disclosed the risks associated with using its services and has introduced various tools to detect and remove harmful content. According to the Associated Press, Huff insisted that the central question in the case is not whether problematic content exists on social networks, but whether the company hid relevant information from users.

Investigators on the front line. The figures and concrete examples of this problem have come from agents who work directly on investigations of online child exploitation.
In the United States, those tasks fall largely to the network of units known as Internet Crimes Against Children (ICAC), a program that brings together police forces at different levels and coordinates with the Department of Justice to investigate and prosecute crimes committed against minors online. Its agents receive reports about possible cases from various sources, including the technology platforms themselves. During the trial, some of these agents have described how the increase in reports from Meta platforms is affecting them. Benjamin Zwiebel, an ICAC special agent in New Mexico, told the court that many of the reports they receive are of little use in advancing an investigation. “We get a lot of tips from Meta that are just garbage,” he said, according to The Guardian. His words reflect a broader concern within these units: the volume of alerts has skyrocketed, but not all of them contain the information needed to identify a suspect or open a police investigation.

Poor quality. In some cases, reports sent by the platforms include data that does not describe criminal conduct. In others, they do point to a possible crime but arrive without elements essential to continuing the investigation, such as images, videos or excerpts of conversations that would allow those responsible to be identified. Without this material, agents have few means of advancing the case or requesting further investigative steps. Some agents have also noted that a portion of these reports arrive with incomplete or partially removed information.

The mass reporting machinery. Several factors help explain why the volume of reports sent to the authorities has skyrocketed.
In the United States, technology companies are required by law to report any child sexual abuse material they detect on their services to the National Center for Missing & Exploited Children (NCMEC), an organization that serves as the national clearinghouse for these reports and then distributes them to the relevant police forces. Agents cited by The Guardian also point to recent legal changes, such as the REPORT Act, which came into force in November 2024, as a possible factor: companies may be sending more reports to avoid non-compliance.

Meta says it is doing the opposite. The company rejects the idea that its systems are making the authorities' work more difficult and maintains that, on the contrary, it has collaborated with law enforcement for years to detect and prosecute this type of crime. A Meta spokesperson stated that the United States Department of Justice has repeatedly recognized the speed with which the company responds to requests from authorities and that NCMEC has given its reporting system a positive assessment. According to the company, in 2024 it received more than 9,000 emergency requests from US authorities and resolved them in an average of 67 minutes, a process that, it claims, is even faster in cases involving child safety or suicide risk. Meta also notes that it reports to NCMEC any material that may be linked to child sexual exploitation and that it works with that organization to help prioritize the reports, including by labeling those it considers most urgent.

A real problem. Regardless of what the New Mexico jury decides, the case reflects a tension that goes beyond a single company or a single state. Digital platforms operate on a global scale and use automated systems to detect illicit content in volumes that would be impossible to review manually.
However, the experience described by some agents shows that increasing the number of tips does not always translate into more effective investigations.

Images | Dima Solomin | ROBIN WORRALL