This engineer found 1,351 loose photos in his grandmother’s house. He ended up building a personal Wikipedia of his entire life

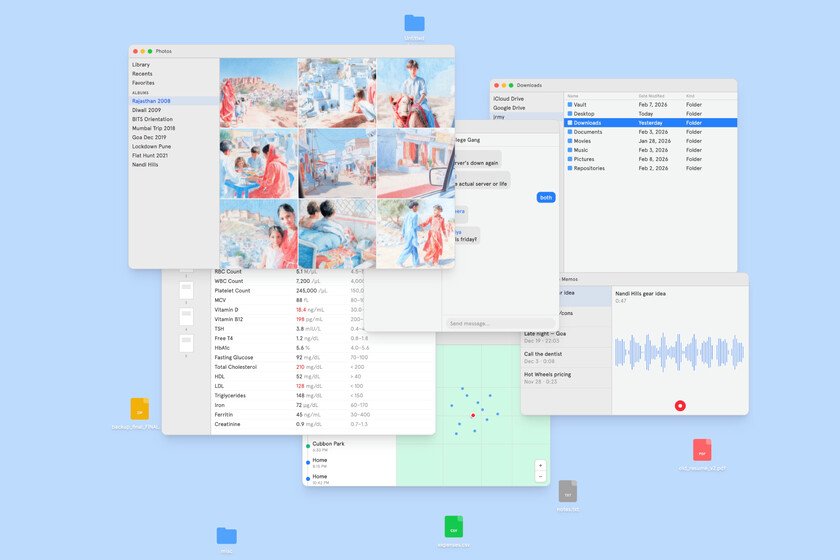

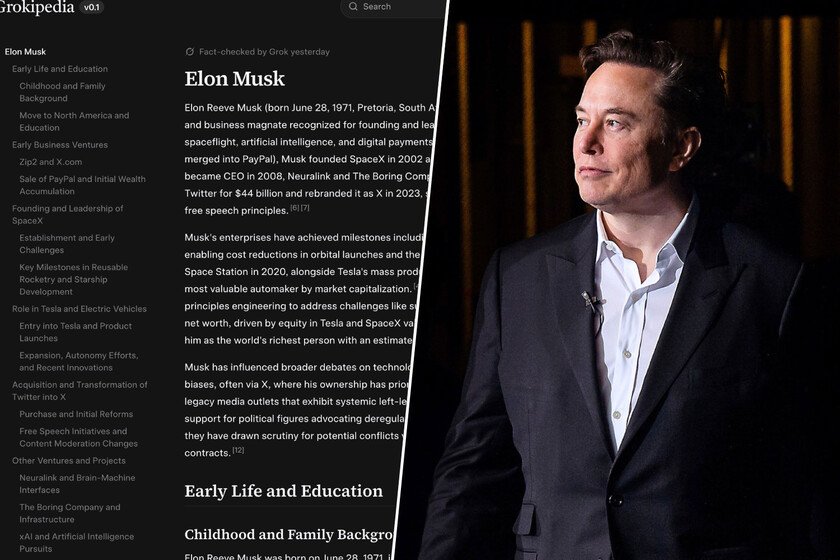

It all started with a closet full of old loose photos. Last year an engineer named Jeremy visited his grandmother’s house for the first time since the pandemic and unknowingly came across a treasure: 1,351 photos on paper, with no order, no dates and no context. Some were in black and white, from when his grandparents were 20 years old. Others showed his mother as a baby. The most recent were of him in high school, just before smartphones arrived and everything moved to the cloud. Over the following weeks, what began as an exercise in organizing family photos became a fascinating project: a personal encyclopedia. A Wikipedia of his own life.

First, the physical photos and the grandmother

The first problem he ran into was that physical photos have no EXIF metadata. There is almost never a capture date (although some cameras printed one onto the image), there are no GPS coordinates, and there is nothing else that makes them easy to sort. Jeremy turned to a much more direct solution: sit down with his grandmother and ask her about the photos.

Time to start remembering

In that conversation she put the photos of her wedding back in order and narrated the details while he took notes: names, places, who was sitting where, what each ritual meant. With those notes, he set up a local instance of MediaWiki, the same software that runs Wikipedia, and wrote a page about the wedding following the format of Wikipedia’s article on the 2011 wedding of Prince William and Kate Middleton. Within two afternoons he had a complete article with scanned photos, captions, links to empty pages about each person mentioned, and links to the real Wikipedia to give historical context to the events.

Digital photos and Claude Code to get the job done

Jeremy realized the idea could go much further and took the opportunity to run tests with digital photos, which do carry EXIF data with the date and time and even GPS coordinates.
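
EXIF timestamps are what make digital photos easy to put in order, unlike the paper prints. As a minimal sketch of that step, assuming the `DateTimeOriginal` values have already been pulled out of the files (for example with exiftool) into a filename-to-string mapping, the Python below sorts the photos chronologically and groups them by day and by part of the day. The function names, the part-of-day boundaries and the sample data are hypothetical; the article does not say how Claude Code handled the timestamps.

```python
from collections import defaultdict
from datetime import datetime

# EXIF writes DateTimeOriginal as "YYYY:MM:DD HH:MM:SS", with no time zone.
EXIF_FORMAT = "%Y:%m:%d %H:%M:%S"


def part_of_day(ts: datetime) -> str:
    """Bucket a timestamp into a coarse part of the day (hypothetical boundaries)."""
    if 5 <= ts.hour < 12:
        return "morning"
    if 12 <= ts.hour < 17:
        return "afternoon"
    if 17 <= ts.hour < 21:
        return "evening"
    return "night"


def build_timeline(photos: dict[str, str]) -> dict:
    """Group filenames by date, then by part of day, in chronological order.

    `photos` maps filename -> DateTimeOriginal string. This sketch assumes the
    tags were already extracted (e.g. with exiftool); it does not read images.
    """
    # Sorting (timestamp, name) tuples gives chronological order, ties by name.
    ordered = sorted(
        (datetime.strptime(raw, EXIF_FORMAT), name) for name, raw in photos.items()
    )
    timeline: dict = defaultdict(lambda: defaultdict(list))
    for ts, name in ordered:
        timeline[ts.date()][part_of_day(ts)].append(name)
    # Convert to plain dicts; insertion order is already chronological.
    return {day: dict(parts) for day, parts in timeline.items()}


# Hypothetical sample data, not from Jeremy's archive.
photos = {
    "IMG_0002.jpg": "2012:10:05 14:32:10",
    "IMG_0001.jpg": "2012:10:05 08:15:00",
    "IMG_0003.jpg": "2012:10:06 19:02:45",
}
for day, parts in build_timeline(photos).items():
    print(day, parts)
```

One caveat of this simple design: "night" spans midnight, so a photo taken at 1 a.m. lands under the next calendar day, and because `DateTimeOriginal` carries no time zone, photos from cameras set to different zones would need to be normalized first.
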
With that information he wanted to see how far he could get without interviews, so he took 625 photos from a 2012 family trip to Coorg (India), put them in a folder and opened Claude Code in that directory with a simple instruction: compose a Wikipedia page by going through the images. The model used ImageMagick to create contact sheets that let it process many photos at once, and the AI did the rest. The result was a detailed draft chronicling the trip, organized by time of day. Without location data, using only timestamps and visual content, the model was able to identify the places that appeared in the photos, including some that Jeremy himself had forgotten. It even worked out the means of transport used between destinations purely from what it saw in the images.

When AI starts remembering for you

Then came the most ambitious experiment: a trip he took to Mexico City in 2022. He had 291 photos and 343 videos taken with an iPhone 12 Pro, with GPS coordinates in their metadata, and he also exported his Google Maps location history, his Uber trips, his bank transactions and his Shazam history. With all those sources included, the model was able to cross-reference bank transactions with location data to identify the restaurants where he had eaten. For example, the photos included images of a soccer match, but he did not remember which teams had played. The answer turned up by crossing those photos with his bank transactions, which included a Ticketmaster invoice naming the tournament and the teams, and that information was added to the page. His Shazam history was also used to describe the music playing at each location.

From photos and memories to a personal encyclopedia

A wonderful project that anyone can now replicate thanks to the whoami.wiki website.

First the trips, then the friendships

What started as a travel documentation project evolved into something more personal.
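
The article does not describe how the model matched bank transactions to location data. One simple way to illustrate the idea is a nearest-timestamp join: for each transaction, find the location-history point recorded closest in time and accept it only if it falls within a small window. The sketch below assumes both exports have already been parsed into Python objects; the `Transaction` and `LocationPoint` classes, the 15-minute window and the sample values are all hypothetical.

```python
from bisect import bisect_left
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class LocationPoint:  # hypothetical shape of one location-history fix
    time: datetime
    lat: float
    lon: float


@dataclass
class Transaction:  # hypothetical shape of one bank-statement row
    time: datetime
    merchant: str
    amount: float


def match_transactions(
    transactions: list[Transaction],
    points: list[LocationPoint],
    max_gap: timedelta = timedelta(minutes=15),
) -> list[tuple[Transaction, Optional[LocationPoint]]]:
    """Pair each transaction with the location fix closest in time, or None
    if no fix lies within `max_gap` of the transaction."""
    points = sorted(points, key=lambda p: p.time)
    times = [p.time for p in points]
    matches = []
    for tx in transactions:
        i = bisect_left(times, tx.time)
        # Only the fixes just before and just after tx.time can be the closest.
        candidates = [points[j] for j in (i - 1, i) if 0 <= j < len(points)]
        best = min(candidates, key=lambda p: abs(p.time - tx.time), default=None)
        if best is not None and abs(best.time - tx.time) > max_gap:
            best = None
        matches.append((tx, best))
    return matches


# Hypothetical sample data, not from Jeremy's records.
points = [
    LocationPoint(datetime(2022, 3, 1, 13, 0), 19.43, -99.13),
    LocationPoint(datetime(2022, 3, 1, 14, 5), 19.41, -99.17),
]
txs = [
    Transaction(datetime(2022, 3, 1, 14, 10), "Example Taqueria", 250.0),
    Transaction(datetime(2022, 3, 1, 18, 0), "Example Store", 90.0),
]
for tx, point in match_transactions(txs, points):
    print(tx.merchant, (point.lat, point.lon) if point else "no nearby fix")
```

Here the first purchase matches the fix recorded five minutes earlier, while the second has no fix within the window. Real exports would need more care: bank timestamps may reflect posting time rather than the moment of payment, and the matched coordinates would still have to be turned into a place name, which this sketch does not attempt.
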
The Facebook, Instagram and WhatsApp archives contained some 100,000 messages and several thousand voice notes exchanged with close friends over a decade. The AI model turned all this material into a single biography, identifying key episodes in the lives of the people involved and then turning them into pages that, according to Jeremy, “read as if they were written by someone who knew us both.” When he shared the pages with those friends, they couldn’t stop reading and wanted more.

MediaWiki as a master ingredient

One of the most interesting decisions of the project is the choice of software. MediaWiki, Wikipedia’s engine, turned out to be extraordinarily well suited to this use case. AI models handle it well because they have been trained on millions of Wikipedia pages and know their structure and conventions. Discussion pages serve to coordinate the development of each article, categories group pages by topic, and the revision history tracks how each page evolves. All of this infrastructure already existed, so there was no need to build a new platform to organize the information Jeremy was providing.

Surprises

At the end of his story, Jeremy explains what he realized after the process: “I realized that I was no longer just working on a family history project. What I had been creating, page by page, was a personal encyclopedia. A structured, navigable, interconnected record of my life compiled thanks to the data I already had around me.” Documenting his grandmother’s life revealed things he didn’t know, such as her years as a single mother or the decisions she had to make. Going through the history of his friendships let him recover moments he had almost forgotten, and prompted him to call some of those friends to reminisce together. “The encyclopedia not only organized the data, it made me pay more attention to the people in my life,” he explained.

You can do it too

The project has been so rewarding for him that he …