From algorithmic trader to revolutionizing AI: this is the story of Liang Wenfeng, the founder of DeepSeek

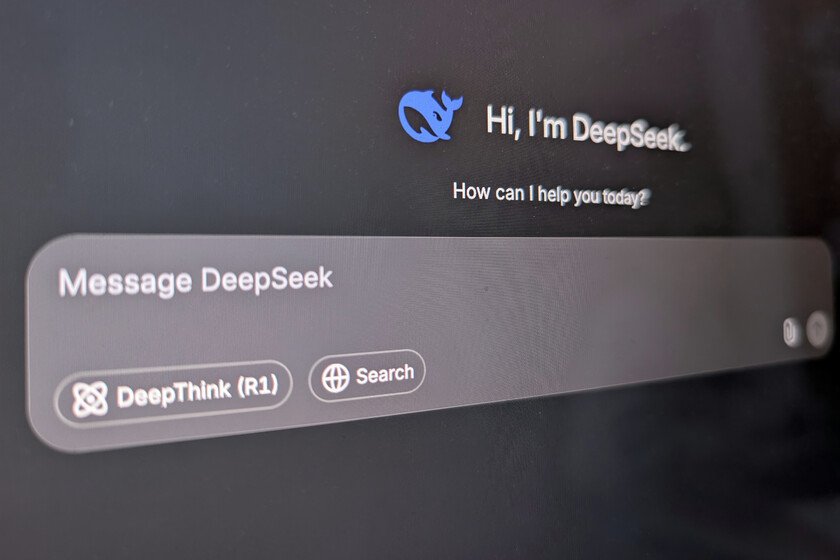

In just two weeks, an unknown 40-year-old Chinese engineer has shaken Silicon Valley and dominated the global conversation about AI. He has even caused a global stock market earthquake. Liang Wenfeng has achieved something that seemed impossible: developing an AI model that rivals OpenAI's at a fraction of the cost. Seen from a wider angle, what he has actually done is throw down a challenge to American dominance in AI.

Liang is not a typical tech entrepreneur. Born on the southern coast of China, in Zhanjiang, he began his career studying electronic engineering at Zhejiang University, where he earned excellent grades. In 2015 he co-founded High-Flyer, a quantitative investment fund that managed more than $13 billion using machine learning algorithms to trade in the stock market.

What distinguishes Liang is the unusual way he has steered his career. While most Chinese AI companies focused on commercializing products, he opted for pure research. "In the last thirty years, [the Chinese technology industry] has only emphasized making money and ignored innovation," he told China Waves, as reported by Reuters. "Innovation is not driven only by business; it also needs curiosity and a desire to create."

This vision materialized in 2021, when he began to accumulate thousands of Nvidia chips for an unnamed project, just before the United States restricted their sale to China. Two years later he founded DeepSeek with just over one million dollars of initial capital. Today, according to local media, DeepSeek has only 140 employees. That is 10% of OpenAI's size, for example.

DeepSeek's success has raised Liang's standing in his country. On January 20, he attended a closed-door meeting with Li Qiang, the premier. Liang was the youngest in the room.
His meteoric rise, from very limited fame within his field to the epicenter of the global technology conversation in a matter of days, has also put him in the crosshairs of those who question whether DeepSeek could have developed V3 and R1 with only the declared infrastructure. That is what Alexandr Wang, CEO of Scale AI, suggested in statements to CNBC, when he assumed that DeepSeek's access to chips had been much greater but that it could not admit this because of trade restrictions. Dario Amodei, CEO of Anthropic, was more understanding and even magnanimous, but not condescending.

For Liang, the goal goes beyond competing with Silicon Valley. As he explained to 36Kr, he wants China to "gradually transition" from being a beneficiary to being a contributor in the AI industry. "What we see is that China cannot always be a follower," he said. "We often say that there is a gap of one or two years between Chinese and American AI, but the true gap is between originality and imitation."

With a low profile and an unpolished image that has nothing in common with Altman, Zuckerberg and company, Liang has been described by some colleagues as a pragmatic leader motivated more by curiosity than by wealth or fame. That fits with what has been seen so far. His commitment to research over commercial applications reveals something of his character: he puts curiosity and long-term knowledge ahead of immediate profit. And perhaps that is what has changed China's role in the global AI race.

Featured image | X, Xataka, DeepSeek