Google’s secret weapon against CUDA dominance is called TorchTPU, and it’s a missile aimed straight at NVIDIA’s waterline

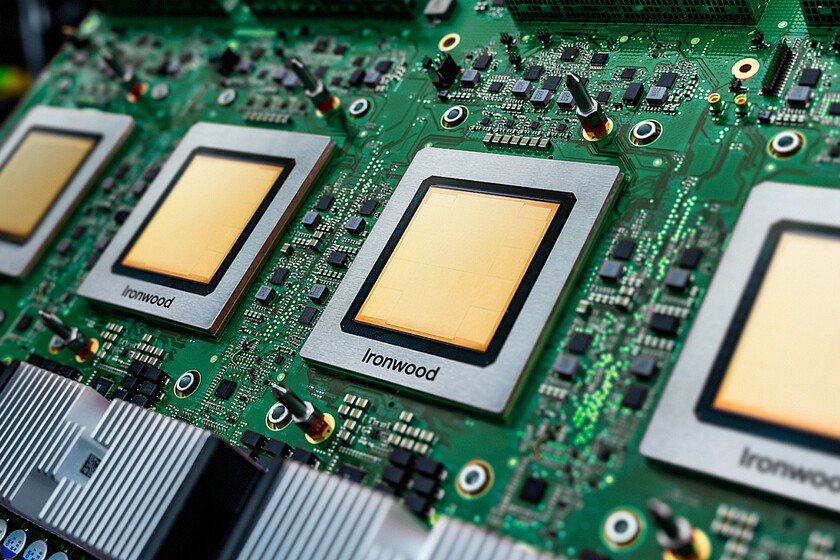

Google has launched an internal initiative called “TorchTPU” with a single goal: to make its TPUs fully compatible with PyTorch. For the uninitiated, let us translate: what Google intends is to break, once and for all, the monopoly and absolute control that NVIDIA wields through CUDA.

Why it matters. NVIDIA has become the world’s most valuable company by market capitalization for two big reasons. The first is its AI GPUs. The second, and much more important, is CUDA, the software platform used by virtually all AI developers, which has one important peculiarity: it only works on NVIDIA’s own chips. So if you wanted to work in AI with the very latest technology, you had to play by NVIDIA’s rules… until now.

What this means for Google and its TPUs. Until now, Google’s Tensor Processing Units (TPUs) have been optimized for JAX, Google’s own framework, which served a purpose similar to CUDA’s. However, most of the industry uses PyTorch, which has been optimized for years around CUDA. That creates a barrier to entry for other chipmakers, which face a huge bottleneck in attracting customers.

Meta is in on it. According to anonymous sources close to the project cited by Reuters, Google has partnered with Meta to reach its goal and speed up the process. This is especially striking because Meta originally created PyTorch. Mark Zuckerberg’s company has ended up just as dependent on NVIDIA as its rivals, and it is very interested in Google’s TPUs becoming a viable alternative that would reduce its own infrastructure costs.

Google as a potential AI chip giant. The company led by Sundar Pichai has made an important change of direction with its TPUs, which were previously reserved exclusively for its own use. Since 2022, the Google Cloud division has been in charge of selling them, and it has turned them into a key revenue driver because Google is no longer their only user: just ask Anthropic.
A spokesperson for the division did not comment specifically on the project, but confirmed to Reuters that this type of initiative would give customers a choice.

All against NVIDIA. This alliance is the latest attempt to take away NVIDIA’s great ace up its sleeve. In recent months we have seen companies like Huawei prepare their own alternative ecosystem to CUDA, while also taking part in a joint effort by several Chinese AI companies with the same aim.

Hardware matters, software matters more. CUDA has become so critical to NVIDIA that when other semiconductor manufacturers have failed to compete with it, the reason has not been their chips but their inability to support CUDA natively. AMD is a great example: it makes exceptional AI GPUs, which even outperform NVIDIA’s in certain respects, but its software is not as powerful.

In Xataka | Google’s TPUs are the first big sign that NVIDIA’s empire is faltering